Delving into how to find degrees of freedom, this introduction immerses readers in a unique and compelling narrative, with a focus on the historical context of degrees of freedom and its relation to statistical inference. The concept of degrees of freedom has been a cornerstone in statistical analysis, dating back to the early developments in statistics, where it was first used by Sir Ronald Fisher to determine the accuracy and relevance of statistical models and methods.

The importance of degrees of freedom cannot be overstated, as it significantly impacts the accuracy and relevance of statistical models and methods. In this guide, we will delve into the concept of degrees of freedom, its application in different statistical distributions, regression analysis, and experimental design, and how it can be used to resolve degeneracies in estimation.

Understanding the Concept of Degrees of Freedom in Statistical Inference

Degrees of freedom, a fundamental concept in statistical inference, has a rich and intriguing history that spans centuries. The term itself was first coined by the Irish mathematician and physicist William Thomson (also known as Lord Kelvin) in 1862. At that time, Thomson was working on a problem related to the motion of molecules in gases, where he needed to calculate the number of independent parameters that characterized the system. This problem led him to use the term “degrees of freedom” to describe the number of independent components in a system. Since then, the concept has been widely adopted in statistical analysis, physics, and engineering.

In the early days of statistics, the concept of degrees of freedom was largely theoretical and not widely used in practical applications. However, with the development of statistical methods such as the chi-squared distribution and the F-test, the importance of degrees of freedom became increasingly apparent. In the 1920s and 1930s, statisticians such as Ronald Fisher and Harold Hotelling began to use degrees of freedom to calculate the reliability of statistical inferences, which marked the beginning of a new era in statistical analysis.

The concept of degrees of freedom has far-reaching implications for statistical inference, impacting the accuracy and relevance of statistical models and methods. By understanding the number of degrees of freedom, statisticians can calculate the uncertainty associated with a statistical estimate, which is essential for making informed decisions. In this section, we will explore the impact of degrees of freedom on statistical analysis.

Impact of Degrees of Freedom on Statistical Models and Methods

Degrees of freedom have a profound impact on statistical models and methods, affecting the accuracy and reliability of statistical estimates. When a statistical model has a high number of degrees of freedom, it can capture complex patterns and relationships in the data, but at the cost of increased uncertainty. Conversely, a model with a low number of degrees of freedom may be overly simplistic and fail to capture important relationships in the data, leading to inaccurate estimates.

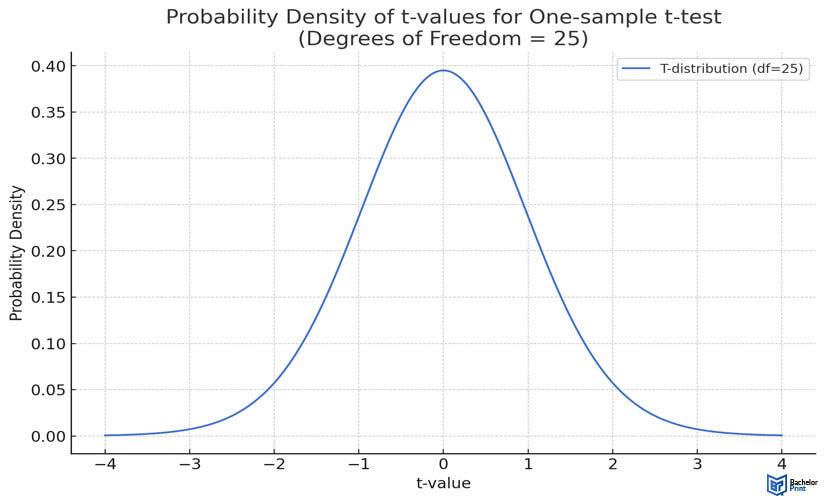

One of the key consequences of degrees of freedom is the concept of “p-value,” which is used to determine the significance of a statistical result. The p-value is a measure of the probability that a observed result or greater could have occurred by chance, given a particular statistical model. The number of degrees of freedom used to calculate the p-value is critical, as it affects the sensitivity and specificity of the test. A high number of degrees of freedom can lead to a more conservative test, while a low number of degrees of freedom may result in a less sensitive test.

Scenarios Where Degrees of Freedom Significantly Affects Statistical Analysis

The number of degrees of freedom significantly affects statistical analysis in various scenarios, including hypothesis testing and confidence intervals. When conducting a hypothesis test, the degrees of freedom are used to calculate the test statistic and determine the p-value. In contrast, when constructing a confidence interval, the degrees of freedom are used to determine the width of the interval.

For example, in the field of medicine, researchers often conduct hypothesis tests to determine the efficacy of a new treatment. The degrees of freedom are used to calculate the test statistic and determine the p-value, which is then used to determine whether the treatment is effective.

Similarly, in finance, analysts use statistical models to estimate the value of a portfolio. The degrees of freedom are used to determine the accuracy of the model, which is essential for making informed investment decisions.

Real-World Applications

The concept of degrees of freedom has numerous real-world applications across various industries, including medicine, finance, and engineering. In the field of medicine, degrees of freedom are used to determine the efficacy of new treatments, while in finance, they are used to estimate the value of a portfolio. In engineering, degrees of freedom are used to design and optimize complex systems.

The concept of degrees of freedom has come a long way since its inception, and its significance is widely recognized in the statistical community. By understanding the impact of degrees of freedom on statistical models and methods, researchers can make more informed decisions and develop more accurate statistical estimates.

Examples of Scenarios Where Degrees of Freedom is Critical, How to find degrees of freedom

There are several scenarios where degrees of freedom is critical, including hypothesis testing, confidence intervals, and regression analysis. For example, in hypothesis testing, the degrees of freedom are used to calculate the test statistic and determine the p-value, which is then used to determine whether the null hypothesis can be rejected. Similarly, in confidence intervals, the degrees of freedom are used to determine the width of the interval, which is critical for making accurate predictions.

Real-World Examples

The concept of degrees of freedom has numerous real-world applications, including hypothesis testing, confidence intervals, and regression analysis. For example, researchers in the field of medicine use degrees of freedom to determine the efficacy of new treatments, while analysts in finance use degrees of freedom to estimate the value of a portfolio. In engineering, degrees of freedom are used to design and optimize complex systems.

Resolving Degeneracies in Estimation Using Degrees of Freedom: How To Find Degrees Of Freedom

Degeneracies in estimation refer to situations where the data is too consistent or too well-behaved, leading to overfitting or a failure to capture the underlying patterns in the data. This can result in poor performance on unseen data, making it essential to identify and address degeneracies in estimation. The concept of degrees of freedom can be used to resolve degeneracies by providing a measure of the number of independent pieces of information in a dataset.

Detecting Degeneracies in Estimation

Degeneracies in estimation can be detected using various statistical methods and techniques. Some common approaches include:

- Visual inspection: Plotting the data and observing any patterns or relationships can help identify degeneracies.

- Correlation analysis: Calculating correlation coefficients between variables can indicate the presence of degeneracies.

- Information criteria: Metrics such as the Akaike information criterion (AIC) and the Bayesian information criterion (BIC) can help identify models that are too complex or overfit the data.

- Residual analysis: Examining the residuals of a model can help identify patterns or relationships that may indicate degeneracies.

These methods can help identify potential degeneracies in estimation, allowing us to take steps to address them.

Addressing Degeneracies in Estimation

Once degeneracies are detected, there are several approaches to address them. One common method is to use regularization techniques, which add a penalty term to the loss function to discourage large weights or complex models. This can help reduce overfitting and improve generalization.

Another approach is to use dimensionality reduction techniques, such as principal component analysis (PCA) or t-distributed stochastic neighbor embedding (t-SNE), to reduce the number of features in the dataset. This can help identify the underlying patterns and relationships in the data, making it easier to develop a robust model.

Applying Degrees of Freedom to Resolve Degeneracies

Degrees of freedom can be used to resolve degeneracies in estimation by providing a measure of the number of independent pieces of information in a dataset. By adjusting the degrees of freedom, we can control the complexity of the model and improve generalization. For example:

- Reducing the degrees of freedom can help reduce overfitting and improve model generalization.

- Increasing the degrees of freedom can help capture more complex patterns and relationships in the data.

In machine learning, degrees of freedom can be used to select the optimal number of hidden layers or units in a neural network. In computer vision, degrees of freedom can be used to select the optimal number of features or dimensions in a feature extraction algorithm.

Example: Using Degrees of Freedom in Machine Learning

Suppose we have a dataset with 1000 samples and 20 features, and we want to develop a neural network with one hidden layer. We can use the degrees of freedom to select the optimal number of hidden units. If we set the degrees of freedom to 10, we would select a model with 10 hidden units. By adjusting the degrees of freedom, we can control the complexity of the model and improve generalization.

Example: Using Degrees of Freedom in Computer Vision

Suppose we have a dataset with 1000 images and we want to develop a feature extraction algorithm to select the optimal number of features. We can use the degrees of freedom to select the optimal number of features. If we set the degrees of freedom to 5, we would select a model with 5 features. By adjusting the degrees of freedom, we can control the complexity of the model and improve generalization.

Outcome Summary

In conclusion, understanding how to find degrees of freedom is crucial in statistical inference, and this guide has provided a comprehensive overview of its application in various contexts. By applying the concepts and techniques discussed in this guide, readers will be able to confidently determine the degrees of freedom in different statistical distributions, regression analysis, and experimental design, and use it to resolve degeneracies in estimation.

Clarifying Questions

What is the concept of degrees of freedom in statistical inference?

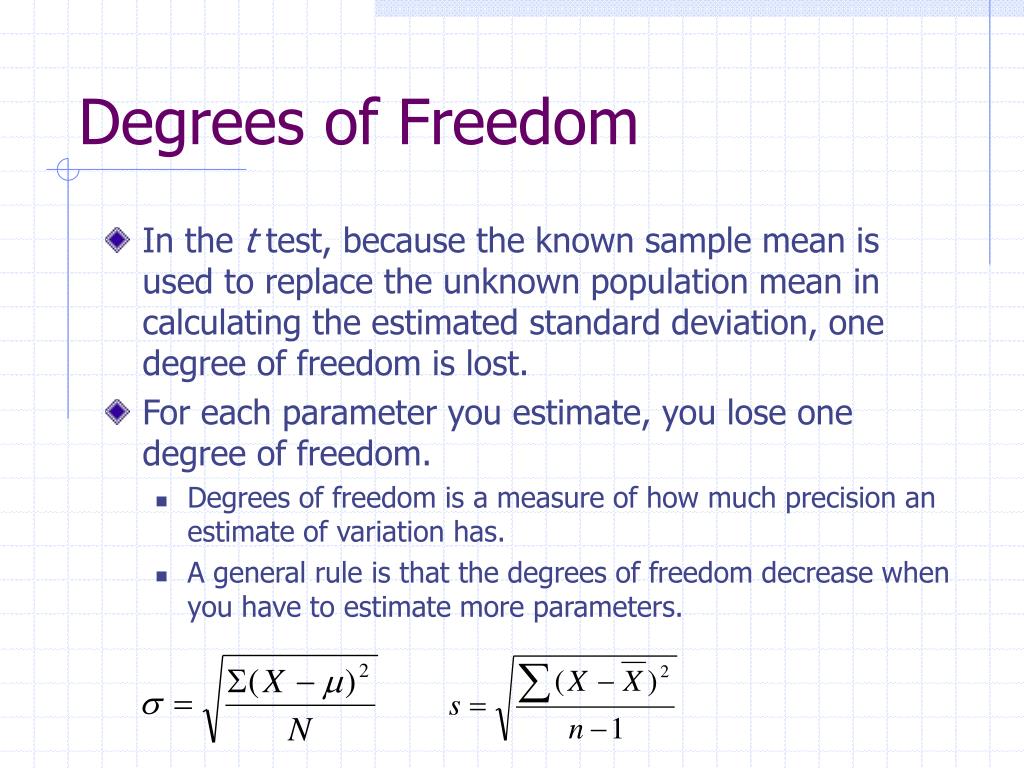

The concept of degrees of freedom refers to the number of independent pieces of information that are used to estimate a parameter or calculate a statistic.

How does degrees of freedom impact the accuracy and relevance of statistical models and methods?

Degrees of freedom significantly impacts the accuracy and relevance of statistical models and methods, as it determines the number of independent pieces of information that are used to estimate a parameter or calculate a statistic.

What is the difference between degrees of freedom and sample size?

Degrees of freedom and sample size are related but distinct concepts. Sample size refers to the total number of observations in a dataset, while degrees of freedom refers to the number of independent pieces of information that are used to estimate a parameter or calculate a statistic.

How can degrees of freedom be used to resolve degeneracies in estimation?

Degrees of freedom can be used to resolve degeneracies in estimation by reducing the number of independent pieces of information that are used to estimate a parameter or calculate a statistic. This can help to alleviate overfitting and improve the accuracy and relevance of statistical models and methods.