The inverse of a matrix is central to linear algebra and to its applications in scientific computing and data analysis. It plays a crucial role in solving systems of linear equations and in characterizing the solutions of homogeneous systems. It is essential to understand the conditions under which a matrix is invertible, as well as the different methods available for finding the inverse. In this article, we will discuss several of these methods, including Gauss-Jordan elimination, LU decomposition, and the adjugate method.

The inverse of a square matrix A is the matrix that, when multiplied by A on either side, results in the identity matrix. Each method for finding it has its own advantages and disadvantages. We will discuss the different methods in detail, including their computational complexity and suitability for large matrices.

Properties and Characteristics of the Inverse of a Matrix

The inverse of a matrix is a fundamental concept in linear algebra that provides a unique solution to systems of linear equations. However, the inverse itself possesses distinct properties and characteristics that make it a crucial tool in various mathematical and scientific applications.

Uniqueness of the Inverse of a Matrix

The inverse of a matrix is unique, meaning that for a given invertible matrix A, there is exactly one matrix A^-1 such that AA^-1 = A^-1A = I, where I is the identity matrix. To see why, suppose B and C are both inverses of A; then B = BI = B(AC) = (BA)C = IC = C. This uniqueness ensures that the inverse can be used to uniquely solve systems of linear equations.

Relation to the Matrix’s Transpose

The inverse of a matrix is closely related to its transpose (A^T). If a matrix A is invertible, then its transpose A^T is also invertible, and the inverse of the transpose is the transpose of the inverse: (A^T)^-1 = (A^-1)^T.

Matrix Addition and Scalar Multiplication

The inverse of a matrix is affected by matrix addition and scalar multiplication in the following ways:

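The inverse of the sum of two matrices is not, in general, the sum of their inverses, and the sum of two invertible matrices need not even be invertible. However, the inverse of the product of two invertible matrices is the product of their inverses in reverse order: (AB)^-1 = B^-1 A^-1.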

The inverse of a non-zero scalar multiple of a matrix is the inverse of the matrix multiplied by the reciprocal of the scalar: (cA)^-1 = (1/c) A^-1 for c ≠ 0.
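These identities can be checked on small examples. The sketch below is only an illustration: the helper names (`inv2`, `matmul2`, `transpose2`, `scale2`) and the matrices `A` and `B` are made up for this example, and it uses exact fractions on 2 x 2 matrices so that the comparisons are not affected by rounding.

```python
from fractions import Fraction

def inv2(m):
    """Inverse of a 2x2 matrix given as nested lists, using exact arithmetic."""
    (a, b), (c, d) = m
    det = Fraction(a * d - b * c)
    if det == 0:
        raise ValueError("matrix is singular")
    return [[d / det, -b / det], [-c / det, a / det]]

def matmul2(x, y):
    """Product of two 2x2 matrices."""
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def transpose2(m):
    return [list(row) for row in zip(*m)]

def scale2(c, m):
    return [[c * v for v in row] for row in m]

# Hypothetical example matrices, both invertible.
A = [[2, 1], [4, 3]]
B = [[1, 2], [0, 1]]

# (AB)^-1 = B^-1 A^-1 (note the reversed order)
product_rule = inv2(matmul2(A, B)) == matmul2(inv2(B), inv2(A))
# (A^T)^-1 = (A^-1)^T
transpose_rule = inv2(transpose2(A)) == transpose2(inv2(A))
# (cA)^-1 = (1/c) A^-1, here with c = 5
scalar_rule = inv2(scale2(5, A)) == scale2(Fraction(1, 5), inv2(A))

print(product_rule, transpose_rule, scalar_rule)
```

A check on one pair of matrices is not a proof, but it is a quick way to catch a misremembered rule, such as forgetting to reverse the order in the product.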

Properties of the Inverse of a Matrix

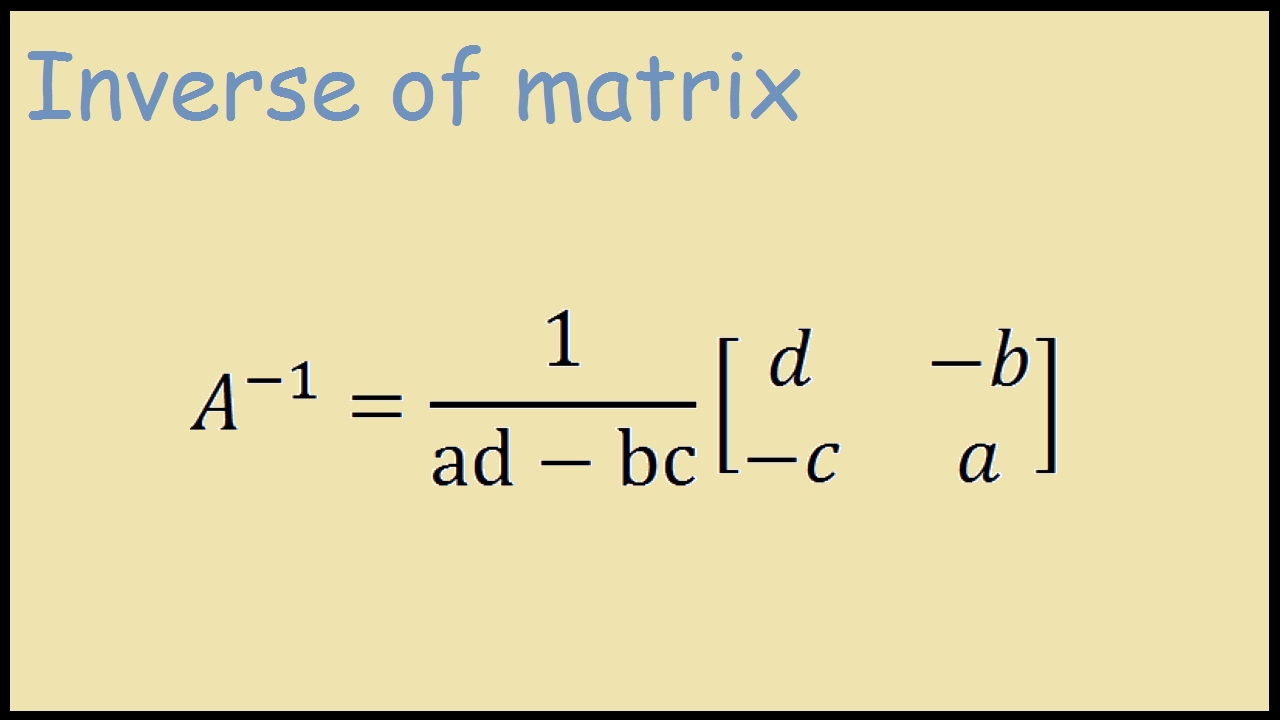

The inverse of a matrix A is denoted as A^-1, and it satisfies the following properties:

A*A^-1 = A^-1*A = I

det(A^-1) = 1/det(A)

adj(A) = det(A) * A^-1 for an n x n matrix A

- The inverse exists only if the determinant of A is non-zero.

- The inverse of a matrix can be used to solve systems of linear equations. If a matrix A is invertible, then the system AX = B, where X is the unknown vector and B is the constant vector, has exactly one solution.

- The inverse also characterizes homogeneous systems of linear equations, which are systems of the form AX = 0. Such a system always has the trivial solution X = 0, and when A is invertible this is its only solution.

Matrix Equation AX = B

A matrix equation of the form AX = B can be solved using the inverse of the matrix A. If the inverse exists, then the solution to the equation is given by X = A^-1B.
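As a rough illustration with NumPy (the 2 x 2 system and the variable names are made up for this example), the formula X = A^-1B can be applied directly. In numerical code it is usually preferable to call a solver such as `numpy.linalg.solve`, which avoids forming the inverse explicitly; both routes are shown so they can be compared.

```python
import numpy as np

# Hypothetical system: 2x + y = 3, 4x + 3y = 7
A = np.array([[2.0, 1.0], [4.0, 3.0]])
B = np.array([3.0, 7.0])

# Direct use of the formula X = A^-1 B.
x_via_inverse = np.linalg.inv(A) @ B

# Solving the system without forming A^-1.
x_via_solve = np.linalg.solve(A, B)

print(x_via_inverse, x_via_solve)
```

Both approaches give the same answer here up to rounding; the solver-based route tends to be cheaper and more accurate as matrices grow.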

Homogeneous Systems of Linear Equations

A homogeneous system of linear equations is one in which every constant term is zero, that is, AX = 0. Such a system always has the trivial solution X = 0. If the matrix A is invertible, this is the only solution; if A is singular, the system also has non-trivial solutions.

Algorithms for Inverting Matrices

Inverting a matrix is a crucial operation in linear algebra, with applications in various fields such as physics, engineering, and computer science. There are several algorithms available for inverting matrices, each with its own strengths and weaknesses. In this section, we will discuss three popular algorithms for inverting matrices: Gauss-Jordan elimination, LU decomposition, and the adjugate method.

Gauss-Jordan Elimination Algorithm

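The Gauss-Jordan elimination algorithm inverts a matrix A by applying elementary row operations to the augmented matrix [A | I] until the left block becomes the identity matrix; the right block is then A^-1. The algorithm creates a pivot in each column, scales the pivot row so that the pivot equals 1, and eliminates every other entry in the pivot column, both above and below the pivot. If no non-zero pivot can be found in some column, the matrix is singular and has no inverse.

The key steps in the Gauss-Jordan elimination algorithm are:

- Form the augmented matrix [A | I].

- Choose a non-zero pivot in the current column (swapping rows if needed) and scale its row so that the pivot is 1.

- Eliminate all other entries in the pivot column, above and below the pivot.

- Repeat the process for each column; the right block is then the inverse.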

Example: Suppose we want to invert the matrix:

| 2 | 1 |

| 4 | 3 |

We form the augmented matrix [A | I], divide the first row by the pivot 2, and subtract 4 times the new first row from the second row, resulting in the matrix:

| 1 | 0.5 | 0.5 | 0 |

| 0 | 1 | -2 | 1 |

The second pivot is already 1, so we subtract 0.5 times the second row from the first row to clear the entry above it. The left block is now the identity, and the right block is the inverted matrix:

| 1.5 | -0.5 |

| -2 | 1 |
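The row-reduction procedure can be sketched in Python as follows. This is a minimal illustration rather than a production routine: the function name `gauss_jordan_inverse` is made up for this example, it uses exact fractions instead of floating point, and it takes the first non-zero pivot it finds (a floating-point implementation would normally pick the largest pivot for stability).

```python
from fractions import Fraction

def gauss_jordan_inverse(matrix):
    """Invert a square matrix by row-reducing the augmented matrix [A | I]."""
    n = len(matrix)
    # Build [A | I] with exact rational entries.
    aug = [
        [Fraction(v) for v in row] + [Fraction(int(i == j)) for j in range(n)]
        for i, row in enumerate(matrix)
    ]
    for col in range(n):
        # Find a row at or below the diagonal with a non-zero entry in this column.
        pivot_row = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot_row is None:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot_row] = aug[pivot_row], aug[col]
        # Scale the pivot row so that the pivot becomes 1.
        pivot = aug[col][col]
        aug[col] = [v / pivot for v in aug[col]]
        # Clear every other entry in the pivot column, above and below the pivot.
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    # The right-hand block now holds the inverse.
    return [row[n:] for row in aug]

inverse = gauss_jordan_inverse([[2, 1], [4, 3]])
print(inverse)  # entries are 3/2, -1/2, -2, 1 as Fractions
```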

LU Decomposition Algorithm

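The LU decomposition algorithm inverts a matrix A by first factoring it into a lower triangular matrix L and an upper triangular matrix U, so that A = LU. Each column of the inverse is then found by solving two triangular systems: forward substitution with L, followed by back-substitution with U, using the corresponding column of the identity matrix as the right-hand side. In practice, row exchanges (pivoting) are usually added to keep the factorization stable.

The key steps in the LU decomposition algorithm are:

- Decompose the original matrix into lower and upper triangular matrices.

- For each column of the identity matrix, solve the lower triangular system by forward substitution, then the upper triangular system by back-substitution. The solutions are the columns of the inverted matrix.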

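Example: Suppose we want to invert the matrix:

| 2 | 1 |

| 4 | 3 |

We decompose the matrix into a lower triangular matrix L:

| 1 | 0 |

| 2 | 1 |

and an upper triangular matrix U:

| 2 | 1 |

| 0 | 1 |

For the first column of the identity, (1, 0), forward substitution with L gives (1, -2), and back-substitution with U gives (1.5, -2). For the second column, (0, 1), forward substitution gives (0, 1), and back-substitution gives (-0.5, 1). Placing these solutions side by side as columns gives the inverted matrix:

| 1.5 | -0.5 |

| -2 | 1 |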
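A minimal Python sketch of this approach is shown below. The function names `lu_decompose` and `lu_inverse` are made up for this example, and the factorization is the Doolittle variant without pivoting, so it fails on matrices that need row exchanges. Exact fractions are used to keep the output easy to check.

```python
from fractions import Fraction

def lu_decompose(matrix):
    """Doolittle LU factorization without pivoting: A = L U, ones on L's diagonal."""
    n = len(matrix)
    L = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    U = [[Fraction(v) for v in row] for row in matrix]
    for k in range(n):
        if U[k][k] == 0:
            raise ValueError("zero pivot: this sketch does not pivot")
        for i in range(k + 1, n):
            # Multiplier that cancels the entry below the pivot.
            L[i][k] = U[i][k] / U[k][k]
            U[i] = [u_i - L[i][k] * u_k for u_i, u_k in zip(U[i], U[k])]
    return L, U

def lu_inverse(matrix):
    """Invert a matrix by solving L U x = e_j for each identity column e_j."""
    n = len(matrix)
    L, U = lu_decompose(matrix)
    columns = []
    for j in range(n):
        e = [Fraction(int(i == j)) for i in range(n)]
        # Forward substitution: solve L y = e (L has ones on its diagonal).
        y = []
        for i in range(n):
            y.append(e[i] - sum(L[i][k] * y[k] for k in range(i)))
        # Back-substitution: solve U x = y.
        x = [Fraction(0)] * n
        for i in reversed(range(n)):
            x[i] = (y[i] - sum(U[i][k] * x[k] for k in range(i + 1, n))) / U[i][i]
        columns.append(x)
    # columns[j] is column j of the inverse, so transpose to get rows.
    return [list(row) for row in zip(*columns)]

L, U = lu_decompose([[2, 1], [4, 3]])
inverse = lu_inverse([[2, 1], [4, 3]])
print(L, U, inverse)
```

One practical benefit of this structure is that the factorization is computed once and reused for every right-hand side, which is why LU is often used to solve systems directly rather than to form the inverse.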

Adjugate Method

The adjugate method inverts a matrix using its adjugate (also known as the classical adjoint). The adjugate is the transpose of the cofactor matrix, which is obtained by replacing each element of the matrix with its cofactor. The inverse is then the adjugate divided by the determinant: A^-1 = adj(A) / det(A).

The key steps in the adjugate method are:

- Calculate the determinant of the matrix and check that it is non-zero.

- Replace each element with its cofactor and transpose the result to obtain the adjugate.

- Divide the adjugate by the determinant to obtain the inverted matrix.

Example: Suppose we want to invert the matrix:

| 2 | 1 |

| 4 | 3 |

We calculate the determinant of the matrix, which is 2 * 3 - 1 * 4 = 2. We then replace each element with its cofactor and transpose the result to obtain the adjugate:

| 3 | -1 |

| -4 | 2 |

We divide the adjugate by the determinant to obtain the inverted matrix:

| 1.5 | -0.5 |

| -2 | 1 |
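The adjugate formula A^-1 = adj(A) / det(A) can be sketched in Python as below. The function names (`minor`, `det`, `adjugate`, `adjugate_inverse`) are made up for this example, and the sketch assumes integer entries. It computes determinants by cofactor expansion, which grows factorially with the matrix size, so it is only sensible for very small matrices.

```python
from fractions import Fraction

def minor(m, i, j):
    """Matrix m with row i and column j removed."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(m) if r != i]

def det(m):
    """Determinant by cofactor expansion along the first row."""
    if not m:
        return 1  # determinant of the empty (0 x 0) matrix
    return sum((-1) ** j * m[0][j] * det(minor(m, 0, j)) for j in range(len(m)))

def adjugate(m):
    """Transpose of the cofactor matrix."""
    n = len(m)
    cofactors = [
        [(-1) ** (i + j) * det(minor(m, i, j)) for j in range(n)]
        for i in range(n)
    ]
    return [list(row) for row in zip(*cofactors)]

def adjugate_inverse(m):
    """Inverse as adj(m) / det(m); assumes integer entries."""
    d = det(m)
    if d == 0:
        raise ValueError("matrix is singular")
    return [[Fraction(v, d) for v in row] for row in adjugate(m)]

A = [[2, 1], [4, 3]]
print(det(A), adjugate(A), adjugate_inverse(A))
```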

Applications of the Inverse of a Matrix in Data Analysis and Machine Learning

In data analysis and machine learning, the inverse of a matrix plays a crucial role in various applications, including regression, classification, and clustering. In this section, we will explore these applications, including the use of inverse covariance matrices, solving optimization problems, and finding maximum likelihood estimates.

Computing Covariance Matrices and Inverse of a Covariance Matrix

The covariance matrix is a fundamental concept in statistics: it represents the variances of, and covariances between, the variables in a data set. Its inverse (often called the precision matrix) is needed to compute the Mahalanobis distance, which measures the distance between a data point and the mean of the data set while taking the covariance structure of the data into account. This is particularly useful in multivariate statistical analysis and machine learning.

- The covariance matrix is computed by subtracting the mean from each data point, summing the outer products of the resulting deviations, and dividing by the number of data points minus one (or by the number of data points for the maximum likelihood estimate).

- The inverse of the covariance matrix is then computed using various algorithms, such as Cholesky decomposition or LU decomposition.

- The Mahalanobis distance can be computed using the inverse of the covariance matrix as follows:

D(x, μ) = √((x – μ)^T Σ^-1 (x – μ))

where x is the data point, μ is the mean of the data set, Σ is the covariance matrix, and Σ^-1 is the inverse of the covariance matrix.
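The three steps above can be sketched with NumPy as follows. The function name `mahalanobis` and the toy data set are made up for this example, the sample covariance uses the n - 1 divisor, and the sketch solves a linear system with Σ instead of forming Σ^-1 explicitly, which gives the same value.

```python
import numpy as np

def mahalanobis(x, data):
    """Mahalanobis distance from x to the mean of data (rows are observations)."""
    mu = data.mean(axis=0)
    centered = data - mu
    # Sample covariance: sum of outer products of deviations over (n - 1).
    sigma = centered.T @ centered / (len(data) - 1)
    diff = x - mu
    # diff^T Sigma^-1 diff, computed by solving Sigma z = diff.
    return float(np.sqrt(diff @ np.linalg.solve(sigma, diff)))

# Hypothetical data: spread is larger along the first axis than the second.
data = np.array([[2.0, 0.0], [0.0, 1.0], [-2.0, 0.0], [0.0, -1.0]])

d_far = mahalanobis(np.array([2.0, 0.0]), data)
d_near = mahalanobis(np.array([0.0, 1.0]), data)
print(d_far, d_near)
```

In this toy data set the two points are at Euclidean distances 2 and 1 from the mean, yet their Mahalanobis distances coincide, because the data vary more along the first axis.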

Solving Optimization Problems and Finding Maximum Likelihood Estimates

The inverse of a matrix is also essential in solving optimization problems and finding maximum likelihood estimates. In optimization, the matrix being inverted is typically the Hessian, the matrix of second partial derivatives of the objective function. The gradient gives the direction of steepest ascent (and its negative the direction of steepest descent), while the inverse of the Hessian rescales that direction according to the local curvature, as in Newton's method. In machine learning, matrix inverses appear in closed-form maximum likelihood estimates of model parameters, that is, the parameter values that maximize the likelihood of the data given the model.

- The Hessian matrix is computed by taking the second partial derivatives of the objective function with respect to each pair of parameters.

- The inverse of the Hessian matrix is then computed using various algorithms, such as Cholesky decomposition or LU decomposition.

- For a linear model with Gaussian errors of known covariance Σ, the Hessian of the negative log-likelihood is X^T Σ^-1 X, and the maximum likelihood estimate of the model parameters is the generalized least squares solution:

β = (X^T Σ^-1 X)^-1 X^T Σ^-1 y

where β is the vector of model parameters, X is the design matrix, Σ is the covariance matrix of the errors, and y is the response vector.
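A minimal NumPy sketch of this estimate is given below. The function name `gls_estimate` and the toy data are made up for this example. Rather than forming Σ^-1 and (X^T Σ^-1 X)^-1 explicitly, the sketch solves the corresponding linear systems, which is mathematically equivalent to the formula above.

```python
import numpy as np

def gls_estimate(X, y, sigma):
    """Compute (X^T Sigma^-1 X)^-1 X^T Sigma^-1 y using linear solves."""
    sigma_inv_X = np.linalg.solve(sigma, X)  # Sigma^-1 X
    sigma_inv_y = np.linalg.solve(sigma, y)  # Sigma^-1 y
    return np.linalg.solve(X.T @ sigma_inv_X, X.T @ sigma_inv_y)

# Hypothetical data: intercept plus one feature, noise-free response y = 1 + 2 t.
X = np.array([[1.0, 0.0], [1.0, 1.0], [1.0, 2.0]])
y = np.array([1.0, 3.0, 5.0])
sigma = np.diag([1.0, 2.0, 4.0])  # assumed error covariance

beta = gls_estimate(X, y, sigma)
print(beta)
```

Because the toy response is an exact linear function of the feature, the estimate recovers the intercept and slope regardless of the assumed Σ; with noisy data, Σ controls how much weight each observation receives.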

Case Study: Using the Inverse of a Matrix in Image Recognition

In image recognition, the inverse of the covariance matrix of the image features is used to compute the Mahalanobis distance between a feature vector and the mean of a class of images. A small distance indicates that the features are similar to those of the class. This is particularly useful in object recognition and face recognition.

D(x, μ) = √((x – μ)^T Σ^-1 (x – μ))

where x is the image feature, μ is the mean of the image features, Σ is the covariance matrix, and Σ^-1 is the inverse of the covariance matrix.

The same computation applies in other areas of computer vision, machine learning, and data analysis wherever feature vectors are compared against the distribution of a class.

Numerical Methods for Inverting Matrices

Numerical methods for inverting matrices provide efficient and practical approaches to find the inverse of a matrix, particularly for large and complex matrices where exact methods may not be feasible. In addition to the traditional exact methods, numerical methods can provide accurate and stable results, making them a critical component in various scientific and engineering applications.

Two widely used numerical techniques are QR decomposition and singular value decomposition (SVD). Each method has its advantages and disadvantages, and the choice of method depends on the specific characteristics of the matrix and the application involved.

QR Decomposition

QR decomposition is a popular numerical method for inverting general square matrices. This method involves decomposing the matrix into a product of an orthogonal matrix (Q) and an upper triangular matrix (R), so that A = QR. Because the inverse of an orthogonal matrix is its transpose, the inverse of the matrix is A^-1 = R^-1 Q^T: the upper triangular matrix (R) is inverted by back-substitution and the result is multiplied by the transpose of the orthogonal matrix (Q).

The QR decomposition method has several advantages:

- The method runs in O(n^3) time, although it costs roughly twice as many operations as LU decomposition.

- It applies to any invertible square matrix and does not require symmetry. Because Q is orthogonal, its inverse is simply its transpose.

- The method provides a stable and accurate inversion of the matrix.

However, the QR decomposition method has some limitations:

- The method requires additional memory to store the orthogonal matrix (Q) and the upper triangular matrix (R).

- For ill-conditioned matrices, where the condition number is large, the computed inverse can still lose accuracy.
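The relation A^-1 = R^-1 Q^T can be sketched with NumPy as follows. The function name `qr_inverse` is made up for this example. Note one simplification: `numpy.linalg.solve` is a general solver and does not exploit the triangular structure of R; a dedicated triangular solver would do the same job with less work.

```python
import numpy as np

def qr_inverse(A):
    """Invert a square matrix via A = QR, so that A^-1 = R^-1 Q^T."""
    Q, R = np.linalg.qr(A)
    # Solve R X = Q^T instead of inverting R explicitly.
    return np.linalg.solve(R, Q.T)

A = np.array([[2.0, 1.0], [4.0, 3.0]])
A_inv = qr_inverse(A)
print(A_inv)
```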

Singular Value Decomposition (SVD)

SVD is another powerful numerical method for inverting matrices, and it is particularly informative for matrices with a large condition number. This method decomposes the matrix into a product of three matrices, A = U S V^T: the left singular vectors (U), a diagonal matrix of singular values (S), and the right singular vectors (V). The inverse of the matrix is then A^-1 = V S^-1 U^T, where S^-1 is obtained by taking the reciprocal of each singular value.

The SVD method has several advantages:

- The method is suitable for ill-conditioned matrices, where the condition number is large.

- The method provides a stable and accurate inversion of the matrix.

- The method can be used to compute the pseudoinverse of the matrix, which is useful in various applications such as least squares problems.

However, the SVD method has some limitations:

- The method is computationally more expensive than the QR decomposition method.

- The method requires additional memory to store the left and right singular vectors and the singular values.
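A short NumPy sketch of SVD-based inversion is shown below. The function name `svd_inverse` and the example matrices are made up for this illustration. It also shows two related quantities: the condition number, which is the ratio of the largest to the smallest singular value, and the pseudoinverse of a singular matrix computed with `numpy.linalg.pinv`.

```python
import numpy as np

def svd_inverse(A):
    """Invert a square matrix via A = U S V^T, so that A^-1 = V S^-1 U^T."""
    U, s, Vt = np.linalg.svd(A)
    return Vt.T @ np.diag(1.0 / s) @ U.T

A = np.array([[2.0, 1.0], [4.0, 3.0]])
A_inv = svd_inverse(A)

# Condition number from the singular values (largest over smallest).
s = np.linalg.svd(A, compute_uv=False)
condition_number = s.max() / s.min()

# A singular matrix has no inverse, but it does have a pseudoinverse.
B = np.array([[1.0, 2.0], [2.0, 4.0]])
B_pinv = np.linalg.pinv(B)

print(A_inv, condition_number, B_pinv)
```

A very small singular value makes its reciprocal very large, which is why a large condition number signals that the computed inverse will amplify rounding errors.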

Comparison of QR Decomposition and SVD

In general, the QR decomposition method is faster and more efficient than the SVD method for large matrices with a small condition number. However, the SVD method is more stable and accurate for ill-conditioned matrices or matrices with a large condition number. The choice of method depends on the specific characteristics of the matrix and the application involved.

In summary, QR decomposition and SVD are two practical numerical routes to the inverse of a large matrix: QR decomposition is the cheaper choice for well-conditioned matrices, while SVD is the more robust choice when the condition number is large.

Outcome Summary

In conclusion, finding the inverse of a matrix is a crucial concept in linear algebra and its applications in scientific computing and data analysis. We have discussed the different methods available for finding the inverse of a matrix, including Gauss-Jordan elimination, LU decomposition, and the adjugate method. It is essential to understand the conditions for a matrix to be invertible and the computational complexity of each method. With this knowledge, readers can choose the most suitable method for their specific needs and efficiently find the inverse of a matrix.

Helpful Answers: How To Find The Inverse Of A Matrix

What is the condition for a matrix to be invertible?

A matrix must be square and have a non-zero determinant to be invertible. Equivalently, its rank must equal its number of rows (and columns).

What are the different methods for finding the inverse of a matrix?

The different methods for finding the inverse of a matrix include Gauss-Jordan elimination, LU decomposition, and the adjugate method.

What is the computational complexity of Gauss-Jordan elimination?

The computational complexity of Gauss-Jordan elimination is O(n^3), where n is the number of rows or columns in the matrix.