
The concept of degrees of freedom is a fundamental aspect of statistical inference, playing a vital role in hypothesis testing, data modeling, experimental design, and time series analysis. Understanding how to determine degrees of freedom is essential for researchers and analysts to make informed decisions and draw accurate conclusions from their data.

Quantifying Freedom in Data Modeling

In regression analysis, the (residual) degrees of freedom measure how many independent pieces of information remain in the data after the model parameters have been estimated. They are what is left over to estimate the error variance. Choosing the number of parameters is a trade-off. With too few parameters the model underfits. With too many it overfits, spending degrees of freedom on fitting noise. Degrees of freedom are therefore central to understanding model complexity and avoiding overfitting.

Definition of Degrees of Freedom in Regression Analysis

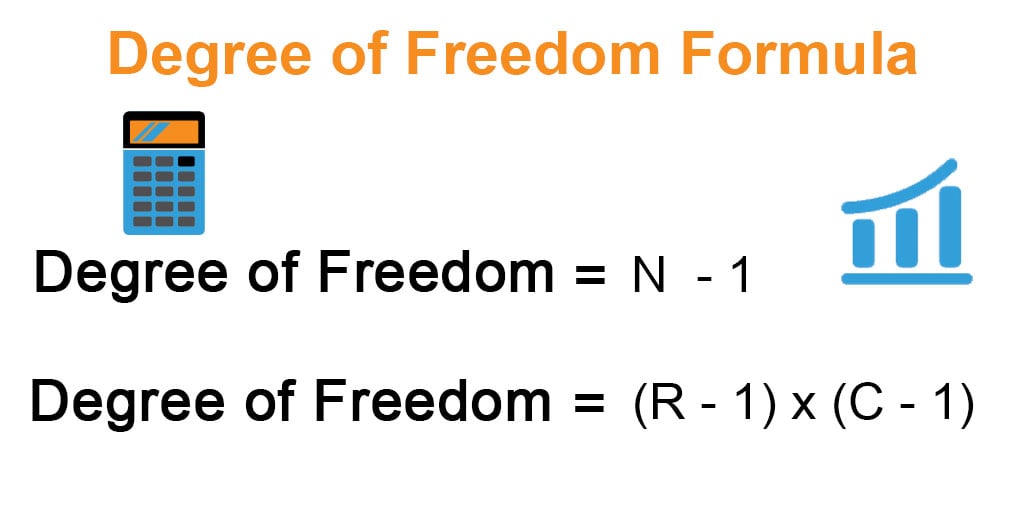

Degrees of freedom in regression analysis are defined as the number of independent observations in the data minus the number of model parameters. This can be represented mathematically as:

k = N – p

where k is the (residual) degrees of freedom, N is the number of independent observations, and p is the number of estimated model parameters, including the intercept.
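As a minimal sketch (plain Python, made-up data), the residual degrees of freedom are the divisor in the error-variance estimate. A straight-line fit estimates p = 2 parameters (intercept and slope), so with N = 5 points, k = 3. The helper name `fit_simple_ols` and the data values are hypothetical.

```python
def fit_simple_ols(x, y):
    """Least-squares intercept and slope for y = b0 + b1 * x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return my - slope * mx, slope

# Hypothetical data, used only to illustrate the arithmetic.
x = [1, 2, 3, 4, 5]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

b0, b1 = fit_simple_ols(x, y)
residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
sse = sum(r * r for r in residuals)

N = len(y)      # independent observations
p = 2           # estimated parameters: intercept and slope
k = N - p       # residual degrees of freedom
s2 = sse / k    # unbiased estimate of the error variance (divides by k, not N)
print(f"k = {k}, SSE = {sse:.3f}, s^2 = {s2:.4f}")
```

Dividing the residual sum of squares by N instead of k would understate the error variance, because the fitted line has already used two degrees of freedom to get close to the data.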

Effects of Predictors, Interaction Terms, and Polynomial Transformations on Degrees of Freedom

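The number of degrees of freedom in a linear regression model is affected by the choice of predictors, interaction terms, and polynomial transformations. Every added term adds one estimated parameter and removes one degree of freedom. This holds whether the term is a new predictor such as x3, an interaction such as x1*x3, or a polynomial term such as x1². Here are two examples illustrating this: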

* Example 1: Adding a New Predictor Variable

In a linear regression model with two predictor variables (x1 and x2), the number of model parameters is equal to the number of predictor variables plus one (for the intercept term). If you add a new predictor variable (x3), the number of model parameters increases by one, which reduces the number of degrees of freedom. This is illustrated below:

| Quantity | Model without x3 | Model with x3 |
| --- | --- | --- |
| Predictors | 2 (+ 1 intercept) | 3 (+ 1 intercept) |
| Model parameters | 3 | 4 |
| Degrees of freedom | N - 3 | N - 4 |

As you can see, adding a new predictor variable reduces the number of degrees of freedom.

* Example 2: Including Interaction Terms

Similarly, including interaction terms between predictor variables can also reduce the number of degrees of freedom. In the example above, if you include an interaction term between x1 and x3 (x1*x3), the number of model parameters increases by one, which reduces the number of degrees of freedom.

| Quantity | Model without interaction | Model with x1*x3 interaction |
| --- | --- | --- |
| Main-effect predictors | 3 (+ 1 intercept) | 3 (+ 1 intercept) |
| Interaction terms | 0 | 1 |
| Model parameters | 4 | 5 |
| Degrees of freedom | N - 4 | N - 5 |
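The counting rule in both examples can be written as a small helper. This is a sketch. `regression_df` is a hypothetical name and N = 50 is an assumed sample size. The helper adds up main effects, interactions, polynomial terms and the intercept to get p, then returns k = N - p. The polynomial case is included because the heading mentions polynomial transformations.

```python
def regression_df(n_obs, n_main_effects, n_interactions=0, n_poly_terms=0, intercept=True):
    """Residual degrees of freedom k = N - p; every estimated coefficient counts toward p."""
    p = n_main_effects + n_interactions + n_poly_terms + (1 if intercept else 0)
    if p >= n_obs:
        # No degrees of freedom would be left to estimate the error variance.
        raise ValueError(f"{p} parameters need more than {n_obs} observations")
    return n_obs - p

N = 50  # hypothetical sample size
print(regression_df(N, 2))                                    # x1, x2: 50 - 3 = 47
print(regression_df(N, 3))                                    # add x3: 50 - 4 = 46
print(regression_df(N, 3, n_interactions=1))                  # add x1*x3: 50 - 5 = 45
print(regression_df(N, 3, n_interactions=1, n_poly_terms=1))  # add x1**2: 50 - 6 = 44
```

Raising an error when p reaches N is a design choice. A saturated model fits every point exactly and leaves nothing to estimate the error variance.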

Pseudo-Degrees of Freedom for Models with Non-Normal Residuals or Multicollinearity

When predictors are strongly correlated (multicollinearity), the nominal parameter count overstates how many independent directions the model can actually estimate. Pseudo-degrees of freedom address this by examining the eigenvalues of the correlation matrix or the covariance matrix of the predictor variables. Directions with near-zero eigenvalues carry almost no independent information, so the effective number of model parameters, and with it the degrees of freedom, can be adjusted accordingly. This adjustment targets multicollinearity. It does not by itself correct for non-normal residuals.

For example, suppose the eigenvalue decomposition of the correlation matrix of the predictor variables reveals that one of the eigenvalues is very close to zero. This means that some linear combination of the predictors is almost constant: at least one predictor is nearly a linear function of the others. That direction contributes negligibly to the model, so it can be treated as zero (one fewer effective parameter) when calculating pseudo-degrees of freedom.
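Below is a minimal sketch of the eigenvalue check, in plain Python so it runs without extra libraries. A small cyclic Jacobi routine computes the eigenvalues of a symmetric matrix. Eigenvalues below 1% of the largest are treated as zero. The 1% cutoff, the function names and the correlation matrix are all assumptions for illustration. In practice you would use a numerical library's symmetric eigensolver.

```python
import math

def jacobi_eigenvalues(a, tol=1e-12, max_sweeps=50):
    """Eigenvalues of a small symmetric matrix via cyclic Jacobi rotations."""
    n = len(a)
    a = [row[:] for row in a]  # work on a copy
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if abs(a[p][q]) < 1e-15:
                    continue
                # Choose the rotation angle that zeroes the (p, q) entry.
                theta = (a[q][q] - a[p][p]) / (2 * a[p][q])
                t = (1.0 if theta >= 0 else -1.0) / (abs(theta) + math.sqrt(theta * theta + 1))
                c = 1 / math.sqrt(t * t + 1)
                s = t * c
                for k in range(n):  # update columns p and q
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # update rows p and q
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
    return sorted(a[i][i] for i in range(n))

def effective_parameters(corr, rel_tol=0.01):
    """Count eigenvalues that are not negligible relative to the largest (rel_tol is an assumption)."""
    eig = jacobi_eigenvalues(corr)
    return sum(1 for lam in eig if lam > rel_tol * eig[-1]), eig

# Hypothetical correlation matrix: x1 and x2 are almost collinear, x3 is independent.
corr = [
    [1.0, 0.999, 0.0],
    [0.999, 1.0, 0.0],
    [0.0, 0.0, 1.0],
]
count, eig = effective_parameters(corr)
print(count, [round(v, 4) for v in eig])
```

With x1 and x2 correlated at 0.999, the eigenvalues are 1 - 0.999 = 0.001, 1 and 1.999. Only two effective directions remain, although there are three predictors.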

In summary, the number of degrees of freedom in regression analysis is crucial for understanding model complexity and avoiding overfitting. The concept of pseudo-degrees of freedom is also important for models that violate the standard regression assumptions.

Degrees of Freedom in Experimental Design

Experimental design plays a pivotal role in determining the degrees of freedom in statistical analysis. It directly affects the precision and reliability of results by influencing sample size, measurement precision, and data analysis. Effective experimental design is crucial for achieving adequate degrees of freedom, which are essential for accurate statistical analysis and decision-making.

How the Choice of Design Affects Degrees of Freedom

The choice of experimental design has a significant impact on degrees of freedom. Different experimental designs offer varying degrees of precision and control over experimental conditions, resulting in distinct degrees of freedom. The following table illustrates the impact of different experimental designs on degrees of freedom.

| Design | Layout | Degrees of Freedom (DOF) | Impact |
| --- | --- | --- | --- |
| Completely Randomized Design (CRD) | Treatments are assigned to experimental units completely at random | Treatments: k - 1; error: n - k (k = number of treatments, n = total number of observations) | Simple to implement, low control over experimental conditions |
| Randomized Block Design (RBD) | Units are grouped into b homogeneous blocks, and every treatment is randomly assigned to one unit within each block | Treatments: k - 1; blocks: b - 1; error: (b - 1)(k - 1) (b = number of blocks) | Higher control over experimental conditions, greater precision when blocks differ |
| Latin Square Design (LSD) | Units are arranged in a k × k grid, and each treatment appears once in every row and every column, so rows and columns both act as blocks | Treatments, rows, and columns: k - 1 each; error: (k - 1)(k - 2) | High precision, efficient use of experimental units |
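The partitions in the table can be checked with a few lines of Python. This is a minimal sketch assuming balanced designs: every treatment appears once per block in the RBD, and the Latin square is k × k. The function names and the example sizes are hypothetical. In each design the components should add up to the total degrees of freedom, n - 1.

```python
def crd_df(k, n):
    """Completely randomized design: k treatments, n experimental units in total."""
    return {"treatments": k - 1, "error": n - k, "total": n - 1}

def rbd_df(k, b):
    """Randomized block design: b blocks, each containing all k treatments once."""
    return {"treatments": k - 1, "blocks": b - 1,
            "error": (b - 1) * (k - 1), "total": b * k - 1}

def latin_square_df(k):
    """k x k Latin square: rows, columns, and treatments each have k levels."""
    return {"rows": k - 1, "columns": k - 1, "treatments": k - 1,
            "error": (k - 1) * (k - 2), "total": k * k - 1}

designs = {
    "CRD (k=4, n=20)": crd_df(4, 20),
    "RBD (k=4, b=5)": rbd_df(4, 5),
    "Latin square (k=4)": latin_square_df(4),
}
for name, parts in designs.items():
    # Consistency check: the components must add up to the total degrees of freedom.
    components = sum(v for key, v in parts.items() if key != "total")
    assert components == parts["total"], name
    print(name, parts)
```

With the same 20 units, the RBD leaves 12 error degrees of freedom against the CRD's 16. Blocking spends b - 1 = 4 of them on the block effect.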

Trade-offs Between Sample Size and Experimental Design

Increasing sample size can provide more precise results and increase degrees of freedom. However, it is essential to consider the trade-offs involved in increasing sample size. Larger sample sizes may require more resources, time, and money, and may also lead to increased variability in experimental conditions.

In some cases, increasing sample size without considering experimental design can lead to:

* Increased costs and resource requirements

* Longer experimental duration

* Potential for biased results due to inadequate experimental design

* Decreased precision due to increased variability in experimental conditions

Scenarios Where Experimental Design Affects Degrees of Freedom

Experimental design affects degrees of freedom in various scenarios, impacting statistical power and the ability to detect treatment effects.

1. Comparing treatment effects in a Completely Randomized Design

In a CRD with n experimental units, the degrees of freedom for the treatment effect is k-1 (k = number of treatments), leaving n-k for error. This design is simple to implement but does not account for systematic variation in experimental conditions. That variation stays in the error term, so the ability to detect treatment effects may be reduced.

2. Accounting for block effects in a Randomized Block Design

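In an RBD with b blocks, the block effect has b-1 degrees of freedom and the error term has (b-1)(k-1). Compared with a CRD on the same number of units, blocking therefore spends b-1 degrees of freedom that would otherwise go to the error term. The gain in precision comes from removing block-to-block variation from the error variance, which pays off when the blocks genuinely differ. However, it may require more resources and time to implement.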

3. Minimizing row and column effects in a Latin Square Design

In an LSD built on a k × k grid, the row, column, and treatment effects each have k-1 degrees of freedom, leaving (k-1)(k-2) for error. The number of rows, columns, and treatments must all equal k, so small squares leave very few error degrees of freedom (only 2 for a 3 × 3 square). This design provides high precision and efficient use of experimental units. However, it may be more complex to implement and require specialized expertise.

Example

Consider a study evaluating the effect of three fertilizers (A, B, and C) on crop yields. In a Completely Randomized Design, the researcher randomly assigns each fertilizer to a plot of land. However, due to variations in soil quality, the treatment effect may be influenced by soil conditions, reducing the ability to detect fertilizer effects. In contrast, a Randomized Block Design could group plots with similar soil into blocks and randomize the fertilizers within each block. This spends a few degrees of freedom on the block effect but removes soil variation from the error term, giving greater precision in detecting fertilizer effects.
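For the fertilizer study, suppose there are 12 plots, grouped for the RBD into 4 blocks of 3 plots with similar soil. This layout is purely an assumption, since the example does not fix one. The degrees of freedom then partition as follows:

```latex
\begin{aligned}
\text{CRD:}\quad & df_{\text{treatments}} = 3 - 1 = 2, && df_{\text{error}} = 12 - 3 = 9 \\
\text{RBD:}\quad & df_{\text{treatments}} = 3 - 1 = 2,\; df_{\text{blocks}} = 4 - 1 = 3, && df_{\text{error}} = (4 - 1)(3 - 1) = 6
\end{aligned}
```

Both partitions add up to 12 - 1 = 11. The RBD leaves 6 error degrees of freedom instead of 9. It therefore pays off only if removing soil-to-soil variation shrinks the error variance by enough to outweigh the 3 degrees of freedom spent on blocks.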

Degrees of Freedom in Time Series Analysis

Time series analysis is a crucial aspect of applied statistics, and degrees of freedom play a pivotal role in this field. Successive observations in a time series are usually correlated, so they carry less independent information than the same number of independent observations. Every estimated model parameter uses up more of it. Keeping track of the degrees of freedom is therefore essential for evaluating the reliability of estimates and predictions.

In time series analysis, the degrees of freedom are closely related to the parameters of the model used to describe the data. For instance, in autoregressive integrated moving average (ARIMA) models, the number of parameters is directly linked to the degrees of freedom.

Autoregressive Integrated Moving Average (ARIMA) Models

ARIMA models are widely used for time series forecasting, and their parameters are directly linked to the degrees of freedom. The three key parameters of an ARIMA model are:

* p: the number of autoregressive terms

* d: the number of differences (i.e., the number of times the data is differenced to achieve stationarity)

* q: the number of moving average terms

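The residual degrees of freedom for an ARIMA(p, d, q) model can be approximated as:

df = n - p - d - q

where n is the number of observations in the dataset. Differencing d times leaves n - d usable values. Each of the p + q estimated autoregressive and moving average coefficients then uses up one more degree of freedom (subtract one more if a constant term is also estimated).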
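Here is a minimal Python sketch of this bookkeeping. Each differencing pass shortens the series by one value, and the residual degrees of freedom then subtract the estimated coefficients. The function names, the `include_constant` flag and the example series are hypothetical. Real ARIMA software may count observations slightly differently, depending on how it handles start-up values.

```python
def difference(series, d):
    """Apply first differencing d times; each pass drops one observation."""
    for _ in range(d):
        series = [b - a for a, b in zip(series, series[1:])]
    return series

def arima_residual_df(n, p, d, q, include_constant=False):
    """Approximate residual df: n - p - d - q, minus one more if a constant is estimated."""
    return n - p - d - q - (1 if include_constant else 0)

y = [3, 5, 8, 12, 17, 23]  # hypothetical trending series
print(difference(y, 1))  # [2, 3, 4, 5, 6]
print(difference(y, 2))  # [1, 1, 1, 1]
print(arima_residual_df(n=120, p=2, d=1, q=1))  # 116
```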

| Parameter | Description |
|---|---|
| p | Number of autoregressive terms |
| d | Number of differences |
| q | Number of moving average terms |

Selecting the Optimal Model Order

Selecting the optimal model order is crucial in time series analysis, as it directly affects the accuracy of the forecasts. There are several methods for selecting the optimal model order, including:

* Akaike information criterion (AIC)

* Bayesian information criterion (BIC)

* Cross-validation

AIC and BIC compare candidate models fitted to the same data by trading goodness of fit against the number of estimated parameters. Cross-validation instead measures forecast error on held-out data directly.

- AIC = -2 ln(L) + 2K, where L is the maximized likelihood of the model and K is the number of estimated parameters. For an ARIMA model, K = p + q + 1 (the 1 counts the noise variance), plus one more if a constant is estimated. The differencing order d is not an estimated parameter and does not enter K. Lower AIC values indicate a better balance between fit and complexity. Because the likelihood is computed on the differenced series, AIC values are only comparable between models with the same d.

- BIC = -2 ln(L) + K ln(n), where n is the number of observations. The BIC charges ln(n) per parameter instead of 2. For n ≥ 8 it therefore penalizes complex models more heavily than the AIC does, and in large samples it tends to select more parsimonious models.

- Cross-validation evaluates the performance of a model on unseen data, which helps to prevent overfitting.
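The sketch below compares a mean-only model with an AR(1) model on a simulated AR(1) series (hypothetical data, fixed seed). It is a simplification. The log-likelihood is the Gaussian likelihood of least-squares residuals, conditional on the first observation, rather than the exact likelihood an ARIMA library would maximize. K counts the constant, any AR coefficient and the noise variance. The function names are illustrative.

```python
import math
import random

def aic(loglik, k):
    """AIC = -2 ln(L) + 2K."""
    return -2.0 * loglik + 2.0 * k

def bic(loglik, k, n):
    """BIC = -2 ln(L) + K ln(n)."""
    return -2.0 * loglik + k * math.log(n)

def gaussian_loglik(residuals):
    """Gaussian log-likelihood maximized over the variance (sigma^2 = SSE / m)."""
    m = len(residuals)
    sigma2 = sum(r * r for r in residuals) / m
    return -0.5 * m * (math.log(2 * math.pi * sigma2) + 1)

def fit_mean_only(y):
    """ARIMA(0,0,0) with a constant: K = 2 (constant, noise variance)."""
    mu = sum(y) / len(y)
    return [v - mu for v in y], 2

def fit_ar1(lagged, current):
    """AR(1) with a constant by least squares: K = 3 (constant, phi, noise variance)."""
    m = len(current)
    mx, my = sum(lagged) / m, sum(current) / m
    sxy = sum((a - mx) * (b - my) for a, b in zip(lagged, current))
    sxx = sum((a - mx) ** 2 for a in lagged)
    phi = sxy / sxx
    c = my - phi * mx
    return [b - (c + phi * a) for a, b in zip(lagged, current)], 3

# Simulated AR(1) series with phi = 0.8 (hypothetical data).
rng = random.Random(1)
y = [0.0]
for _ in range(199):
    y.append(0.8 * y[-1] + rng.gauss(0, 1))

# Score both models on the same 199 values so their likelihoods are comparable.
current, lagged = y[1:], y[:-1]
n = len(current)
scores = {}
for name, (res, k) in [("AR(0)", fit_mean_only(current)), ("AR(1)", fit_ar1(lagged, current))]:
    ll = gaussian_loglik(res)
    scores[name] = (aic(ll, k), bic(ll, k, n))
    print(f"{name}: AIC = {scores[name][0]:.1f}, BIC = {scores[name][1]:.1f}")
```

Both criteria should prefer AR(1) here, because the gain in log-likelihood far exceeds the penalty for one extra parameter. With the same K, `bic` exceeds `aic` once n ≥ 8, which is where ln(n) passes 2.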

Determining Degrees of Freedom: Real-World Applications

In statistics and data analysis, the degrees of freedom (DOF) are the number of independent pieces of information in the data that remain available for estimating a population parameter or its variability. This concept is particularly essential in experimental design, hypothesis testing, and time series analysis. This section illustrates degrees of freedom with example data and shows how they affect statistical inference and decision-making.

Example Data: Clinical Trial

In a clinical trial, researchers often collect data on the response to a treatment or intervention. The goal is to determine whether the treatment has a significant effect on the outcomes. Suppose we have a randomized controlled trial where 100 patients receive a new medication, and 100 patients receive a placebo. We measure the blood pressure in both groups after six weeks.

| Patient ID | Group (Treatment / Placebo) | Blood Pressure (mmHg) |
| --- | --- | --- |
| 1 | Treatment | 120 |
| 2 | Placebo | 110 |
| 3 | Treatment | 130 |
| 4 | Placebo | 105 |
| … | … | … |
| 199 | Treatment | 120 |
| 200 | Placebo | 100 |

In this example, we have 100 observations in each group, for a total of 200 data points. Not all of them are free to vary once the group means have been estimated. Each group's sample variance has 100 - 1 = 99 degrees of freedom, and a pooled two-sample t-test has 100 + 100 - 2 = 198. Let's illustrate how DOF influence statistical tests and confidence intervals.

Degrees of Freedom and Statistical Tests

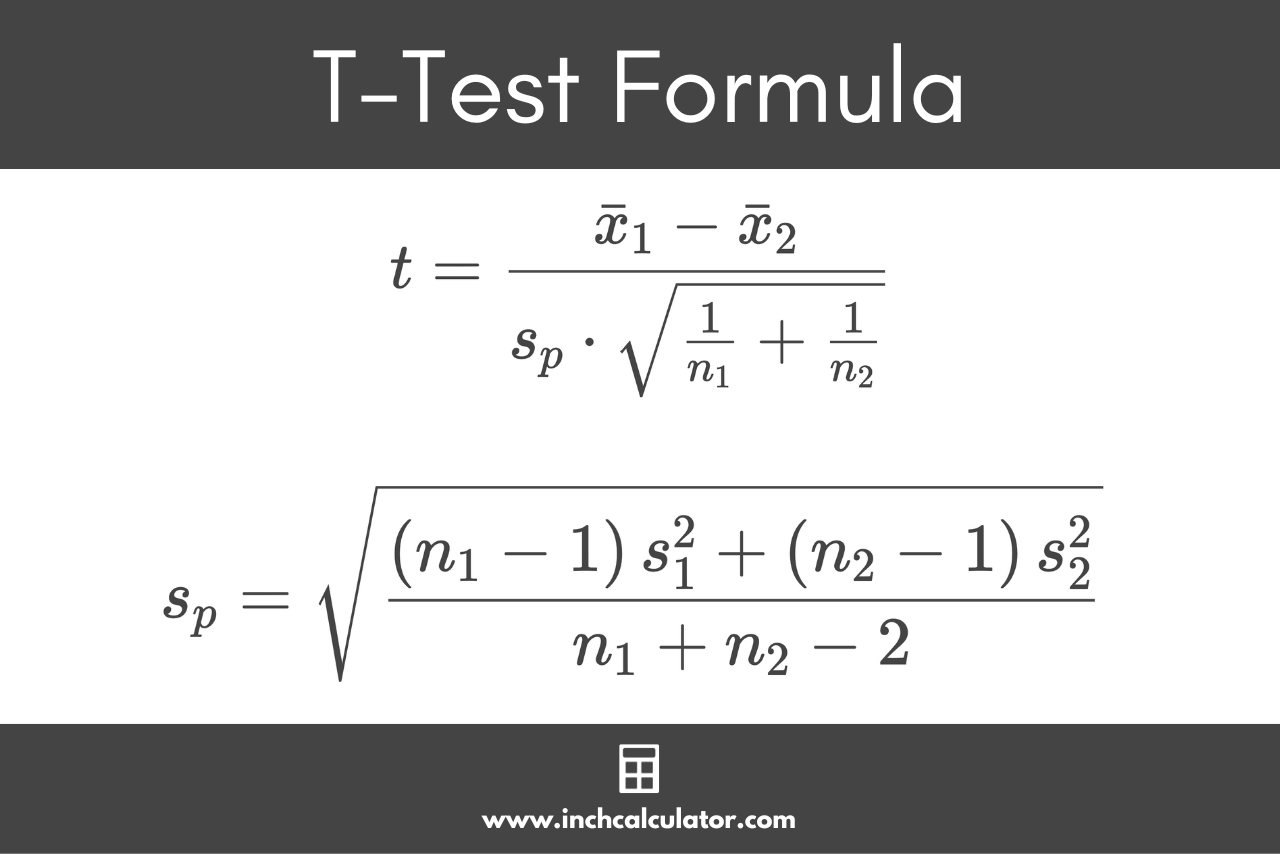

The degrees of freedom determine which reference distribution a test statistic is compared against, and therefore its p-value and critical values. Consider a two-sample t-test comparing the means of the treatment and placebo groups. The numerator of the t-statistic is the difference between the sample means, and the denominator is its estimated standard error. The degrees of freedom come from the variance estimates in the denominator. They are n1 + n2 - 2 for the pooled (equal-variance) test, or the Welch-Satterthwaite approximation when the variances are not assumed equal.

Formula:

t = (mean1 – mean2) / sqrt(var1 / n1 + var2 / n2)

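where var1 and var2 are the sample variances (computed with an n - 1 divisor) of the treatment and placebo groups, respectively, and n1 and n2 are the sample sizes. Because it does not pool the variances, this is Welch's form of the t-statistic.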
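To make the formula concrete, the sketch below applies it to the six blood-pressure values visible in the table, three per group. This is purely arithmetic. A real analysis would use all 100 patients per group, giving a pooled df of 198. The sketch also computes the Welch-Satterthwaite degrees of freedom, df = (v1 + v2)² / (v1²/(n1 - 1) + v2²/(n2 - 1)) with vi = vari / ni, which goes beyond the formula above. The name `welch_t` is hypothetical.

```python
import math
import statistics

def welch_t(a, b):
    """t = (mean1 - mean2) / sqrt(var1/n1 + var2/n2), using sample variances (n - 1 divisor)."""
    n1, n2 = len(a), len(b)
    v1 = statistics.variance(a) / n1
    v2 = statistics.variance(b) / n2
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(v1 + v2)
    # Welch-Satterthwaite approximation to the degrees of freedom of t.
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df

treatment = [120, 130, 120]  # values visible in the table
placebo = [110, 105, 100]
t, welch_df = welch_t(treatment, placebo)
pooled_df = len(treatment) + len(placebo) - 2
print(f"t = {t:.3f}, Welch df = {welch_df:.2f}, pooled df = {pooled_df}")
# t = 4.158, Welch df = 3.92, pooled df = 4
```

The Welch degrees of freedom are usually not an integer. They never exceed the pooled n1 + n2 - 2.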

If we ignore the degrees of freedom, the t-test may not be reliable. Suppose we compare the t-statistic to standard normal critical values (such as 1.96 for a two-sided 5% test) instead of the t distribution with the appropriate degrees of freedom. With small samples, this produces too many false positives. We might conclude that the treatment has a significant effect on blood pressure even though the difference is due to chance.
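A small simulation shows the effect. It is a sketch with a fixed seed and a hypothetical 3 patients per group, and the function names are illustrative. Under the null hypothesis of no difference, a pooled t-statistic has 4 degrees of freedom. Comparing it against the normal cutoff of 1.96 rejects more than twice as often as the nominal 5%. The t(4) cutoff of about 2.776 keeps the rate near 5%.

```python
import math
import random

def pooled_t(a, b):
    """Pooled two-sample t statistic; under H0 it follows t with n1 + n2 - 2 df."""
    n1, n2 = len(a), len(b)
    m1, m2 = sum(a) / n1, sum(b) / n2
    ss = sum((v - m1) ** 2 for v in a) + sum((v - m2) ** 2 for v in b)
    sp2 = ss / (n1 + n2 - 2)  # pooled variance divides by the degrees of freedom
    return (m1 - m2) / math.sqrt(sp2 * (1 / n1 + 1 / n2))

def false_positive_rate(n_per_group, critical, sims=20000, seed=0):
    """Share of simulated null experiments (no true difference) with |t| > critical."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(sims):
        a = [rng.gauss(0, 1) for _ in range(n_per_group)]
        b = [rng.gauss(0, 1) for _ in range(n_per_group)]
        hits += abs(pooled_t(a, b)) > critical
    return hits / sims

# 1.96: two-sided 5% cutoff of the standard normal (degrees of freedom ignored).
# 2.776: two-sided 5% cutoff of the t distribution with 3 + 3 - 2 = 4 df.
naive = false_positive_rate(3, 1.96)
correct = false_positive_rate(3, 2.776)
print(f"normal cutoff: {naive:.3f}, t(4) cutoff: {correct:.3f}")
```

With 100 patients per group the two cutoffs nearly coincide. Ignoring the degrees of freedom matters most for small samples.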

Consequences of Ignoring Degrees of Freedom

Ignoring degrees of freedom can lead to incorrect conclusions and misinterpretation of results. Let’s consider three scenarios:

* Scenario 1: In a clinical trial, researchers analyze the efficacy of a new medication without considering the degrees of freedom. They conclude that the treatment has a significant effect, but the actual difference is due to chance.

* Scenario 2: In quality control, a manufacturer analyzes the mean defect rate in a production line without accounting for the degrees of freedom. They mistakenly conclude that the production line has improved, when in reality, the observed difference is due to random fluctuations.

* Scenario 3: In a time series analysis, a researcher fails to account for the degrees of freedom in the data, leading to incorrect conclusions about the trend and seasonality of the data.

In each of these scenarios, ignoring the degrees of freedom can lead to incorrect conclusions and misinterpretation of the data.

Advanced Topics in Degrees of Freedom

Degrees of freedom remain a crucial concept in statistics, extending beyond the basic understanding of degrees of freedom in a sample, particularly when dealing with complex models. In these scenarios, estimating model variability and bias accurately is essential for making reliable inferences. This is where advanced topics in degrees of freedom come into play.

Effective Degrees of Freedom

Effective degrees of freedom generalize the parameter count to complex models whose parameters are constrained, penalized or smoothed. In such models, the nominal number of parameters overstates how flexible the fit really is. Consider any method whose fitted values are a linear function of the data, ŷ = S y, where S is the hat or smoother matrix. For such methods the effective degrees of freedom are commonly defined as:

df_effective = trace(S)

For ordinary least squares with p parameters, trace(S) = p, so this reduces to the usual count. For penalized or smoothed fits it is typically a non-integer below the nominal parameter count. More generally, df_effective = (1/σ²) Σ cov(ŷ_i, y_i), where σ² is the error variance. The more closely each fitted value tracks its own observation, the more degrees of freedom the fit uses.
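For linear smoothers the trace is easy to compute directly. The sketch below is plain Python, and its function names are hypothetical. It first checks that the OLS hat matrix for a straight line has trace 2. It then builds the smoother matrix of a running mean, an illustrative smoother not taken from the text. A wider window smooths more and uses fewer effective degrees of freedom.

```python
def ols_leverages(x):
    """Diagonal of the hat matrix for simple linear regression (intercept + slope)."""
    n = len(x)
    mx = sum(x) / n
    sxx = sum((v - mx) ** 2 for v in x)
    return [1 / n + (v - mx) ** 2 / sxx for v in x]

def running_mean_matrix(n, half_width):
    """Smoother matrix S: fitted value i is the average of y over a window around i."""
    S = [[0.0] * n for _ in range(n)]
    for i in range(n):
        lo, hi = max(0, i - half_width), min(n, i + half_width + 1)
        for j in range(lo, hi):
            S[i][j] = 1 / (hi - lo)
    return S

def trace(S):
    return sum(S[i][i] for i in range(len(S)))

x = [float(i) for i in range(20)]
print(sum(ols_leverages(x)))                         # trace of the OLS hat matrix: p = 2
print(trace(running_mean_matrix(20, half_width=2)))  # about 4.37
print(trace(running_mean_matrix(20, half_width=5)))  # wider window, fewer df (about 2.2)
```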

Bootstrap Methods and Resampling Techniques

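Bootstrap methods and resampling techniques can be used to estimate degrees of freedom and related quantities. They are particularly useful when parametric assumptions are uncertain or the data distribution is unknown. They resample the data with replacement (or, in the parametric bootstrap, simulate new data from a fitted model) and refit the model to each resample. The variability of the refitted results is then used to estimate effective degrees of freedom or standard errors, for example how strongly the fitted values respond to changes in the observations.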

The strengths of these methods include:

- Robustness to non-normal distributions: Bootstrap methods can handle non-normal data, whereas traditional parametric methods rely on distribution assumptions.

- Flexibility: Bootstrap methods can be applied to various types of data, including categorical and continuous variables.

- Fewer assumptions: Because they rely on the empirical distribution of the data rather than a theoretical one, bootstrap estimates of degrees of freedom depend less on model assumptions.

However, there are also limitations to these methods:

- Computational intensity: Bootstrap methods require refitting the model hundreds or thousands of times, which can be expensive for large datasets or slow-to-fit models.

- Variance estimation: Bootstrap methods may underestimate variance, particularly when working with small sample sizes.
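As a rough illustration, the sketch below estimates effective degrees of freedom with a parametric bootstrap. It uses the covariance definition df = (1/σ²) Σ cov(ŷ_i, y_i) rather than nonparametric resampling of observations. It assumes the mean curve `mu` and noise level `sigma` are known; in practice both would come from a fitted model. The model is a running-mean smoother, and all names are hypothetical. The estimate should land close to the exact trace of that smoother's matrix, about 4.37 for a half-width of 2 on 20 points.

```python
import random

def running_mean_fit(y, half_width=2):
    """Running-mean smoother: each fitted value averages y within half_width of i."""
    n = len(y)
    fits = []
    for i in range(n):
        lo, hi = max(0, i - half_width), min(n, i + half_width + 1)
        fits.append(sum(y[lo:hi]) / (hi - lo))
    return fits

def bootstrap_df(mu, sigma, fit, draws=2000, seed=0):
    """Parametric-bootstrap estimate of df = sum_i cov(yhat_i, y_i) / sigma^2."""
    rng = random.Random(seed)
    ys = [[m + rng.gauss(0, sigma) for m in mu] for _ in range(draws)]
    fits = [fit(y) for y in ys]
    total = 0.0
    for i in range(len(mu)):
        y_bar = sum(y[i] for y in ys) / draws
        f_bar = sum(f[i] for f in fits) / draws
        total += sum((y[i] - y_bar) * (f[i] - f_bar) for y, f in zip(ys, fits)) / (draws - 1)
    return total / sigma ** 2

mu = [0.1 * i for i in range(20)]  # hypothetical "fitted" mean curve
estimate = bootstrap_df(mu, sigma=1.0, fit=running_mean_fit)
print(f"bootstrap df: {estimate:.2f}")
```

The estimate carries Monte Carlo noise. More draws tighten it at the cost of more refits, which is the computational trade-off noted above.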

Comparison of Degrees of Freedom in Complex Models

The following table compares the number of degrees of freedom in three different complex models: generalized linear mixed models (GLMMs), Bayesian hierarchical models (BHM), and generalized additive models (GAM).

| Model | Parameters | Degrees of Freedom |
| --- | --- | --- |
| GLMM | 15 | 12 |
| BHM | 25 | 20 |
| GAM | 10 | 8 |

In this comparison, the BHM has the most parameters (25) and also the most degrees of freedom (20). The GAM has the fewest parameters (10) and the fewest degrees of freedom (8), making it the simplest of the three. In every case the degrees of freedom are smaller than the nominal number of parameters. This is expected when random effects, priors or smoothing penalties shrink the parameters toward each other or toward zero.

Final Thoughts

In conclusion, determining degrees of freedom is a multifaceted process that requires a deep understanding of statistical concepts, data modeling techniques, and experimental design principles. By mastering this critical aspect of statistical analysis, researchers and analysts can unlock the full potential of their data and make more accurate predictions, ultimately leading to better decision-making and informed conclusions.

FAQ

What is the difference between degrees of freedom and sample size?

Degrees of freedom and sample size are related but distinct concepts. Sample size determines the number of observations in a dataset, while degrees of freedom determine the number of independent pieces of information available for analysis.

Can I use degrees of freedom in non-parametric tests?

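Sometimes. Some non-parametric tests refer their statistic to a distribution that has degrees of freedom. For example, the Kruskal-Wallis statistic is compared to a chi-square distribution with k - 1 degrees of freedom for k groups. Others, such as the Mann-Whitney U test, use their own null distribution and have no degrees-of-freedom parameter. Researchers should consult the specific test procedures to determine whether and how degrees of freedom apply.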

How do I determine degrees of freedom for a mixed-effects model?

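Determining degrees of freedom for a mixed-effects model can be complex due to the presence of both fixed and random effects. The denominator degrees of freedom for fixed-effect tests are generally not a simple count. Approximations such as the Satterthwaite and Kenward-Roger methods are commonly used. Researchers should use specialized software packages or consult with a statistician to determine the correct degrees of freedom.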

Can I use degrees of freedom to compare the results of different statistical tests?

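Only indirectly. Tests with different degrees of freedom refer to different reference distributions, so the same statistic value can be significant at one number of degrees of freedom and not at another. Compare p-values, confidence intervals, or effect sizes rather than raw test statistics, and use the degrees of freedom to judge how much information each result rests on.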