This guide offers a structured approach to creating accurate and effective training datasets for object detection. With a focus on dataset quality and organization, it explains the complexities involved in building robust object detection systems.

The subsequent sections delve into the intricacies of dataset preparation, including defining scope and requirements, gathering and preprocessing images, annotation and labeling techniques, classifying and filtering images, ensuring data quality and integrity, and best practices for sharing and replicating object detection datasets.

Defining the Scope and Requirements of a Training Dataset for Object Detection

Establishing a clear understanding of the task and defining the scope and requirements of a training dataset for object detection is a crucial step in building a robust and accurate object detection model. This involves identifying the types of objects, their variations, and the environments in which they appear. A well-defined dataset is essential for training a model that can generalize well to real-world scenarios.

Establishing a Clear Understanding of the Task

To establish a clear understanding of the task, you need to identify the types of objects and their variations that will be present in the dataset. This includes considering factors such as object size, shape, color, and texture. Additionally, you need to determine the environments in which the objects will be present, such as indoor or outdoor, and the conditions under which they will be observed, such as varying lighting conditions or occlusions.

Defining Dataset Characteristics

Defining the dataset characteristics is a critical step in ensuring that the dataset is suitable for training an object detection model. The following table outlines the key characteristics of a training dataset for object detection:

| Characteristic | Description | Importance |
| --- | --- | --- |
| Size | Number of images or samples in the dataset | Essential for training a robust model |
| Composition | Types of objects, environments, and conditions present in the dataset | Determines the model’s ability to generalize to real-world scenarios |
| Quality Standards | Image quality, object size, and annotation precision | Affects the model’s accuracy and robustness |

Determining Dataset Size and Composition

Determining the optimal size and composition of the dataset is crucial for training a robust and accurate object detection model. A larger and more diverse dataset gives the model more opportunities to learn patterns and relationships between objects and environments, and it generally reduces the risk of overfitting, at the cost of more collection and annotation effort. A dataset that is too small, on the other hand, makes it easy for the model to memorize the training images, so it overfits and performs poorly on new data.

To determine the optimal dataset size, you need to consider the following factors:

* The complexity of the objects and environments present in the dataset

* The level of occlusion and variability in the environments

* The availability of labeled data for training and validation

A commonly cited rule of thumb is to aim for at least 1,000 to 5,000 images per class, depending on the complexity of the objects and environments. Treat this as a starting point rather than a fixed requirement: the right number depends on the specific requirements of the project and the availability of data.
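One way to check a dataset against a per-class target is to count the images that contain each class. The sketch below assumes annotations have already been loaded as `(image_id, class_name)` pairs; the function names and the default `min_images=1000`, taken from the low end of the rule of thumb, are illustrative assumptions.

```python
from collections import defaultdict


def images_per_class(annotations):
    """Count distinct images per class from (image_id, class_name) pairs."""
    images = defaultdict(set)
    for image_id, class_name in annotations:
        images[class_name].add(image_id)  # a set, so an image counts once per class
    return {name: len(ids) for name, ids in images.items()}


def under_represented(counts, min_images=1000):
    """Classes whose image count is below the target, sorted by name."""
    return sorted(name for name, n in counts.items() if n < min_images)


if __name__ == "__main__":
    anns = [(1, "car"), (1, "car"), (2, "car"), (2, "pedestrian"), (3, "street_sign")]
    counts = images_per_class(anns)
    print(counts)                                   # {'car': 2, 'pedestrian': 1, 'street_sign': 1}
    print(under_represented(counts, min_images=2))  # ['pedestrian', 'street_sign']
```

Counting images rather than boxes avoids overstating a class that appears many times in only a few images; counting instances as well is a reasonable complement.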

Guidelines for Image Quality and Annotation Precision

Guidelines for image quality and annotation precision are essential for ensuring that the dataset is suitable for training an object detection model. The following guidelines can be used:

* Image quality: Ensure that the images are high-resolution, well-lit, and free from noise and distortions.

* Annotation precision: Ensure that the annotations are accurate and consistent, and that the bounding boxes around objects are precise.
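Parts of the image-quality guideline can be checked automatically. The sketch below flags images whose shorter side falls below a minimum resolution or whose mean brightness suggests under- or overexposure; it does not attempt to measure noise. It assumes images have already been decoded into 8-bit grayscale NumPy arrays, and the function name and thresholds (640 px, mean brightness of 40 and 215) are hypothetical values to tune per project.

```python
import numpy as np


def image_quality_issues(gray, min_side=640, dark=40.0, bright=215.0):
    """Flag basic quality problems in an 8-bit grayscale image (an H x W array).

    All thresholds are illustrative assumptions; tune them for your data.
    """
    issues = []
    height, width = gray.shape[:2]
    if min(height, width) < min_side:
        issues.append("low_resolution")
    mean = float(gray.mean())  # average brightness on a 0-255 scale
    if mean < dark:
        issues.append("underexposed")
    elif mean > bright:
        issues.append("overexposed")
    return issues


if __name__ == "__main__":
    print(image_quality_issues(np.zeros((100, 100), dtype=np.uint8)))       # ['low_resolution', 'underexposed']
    print(image_quality_issues(np.full((720, 1280), 128, dtype=np.uint8)))  # []
```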

“A high-quality dataset is essential for training a robust and accurate object detection model.”

Annotation and Labeling Techniques for Object Detection Datasets

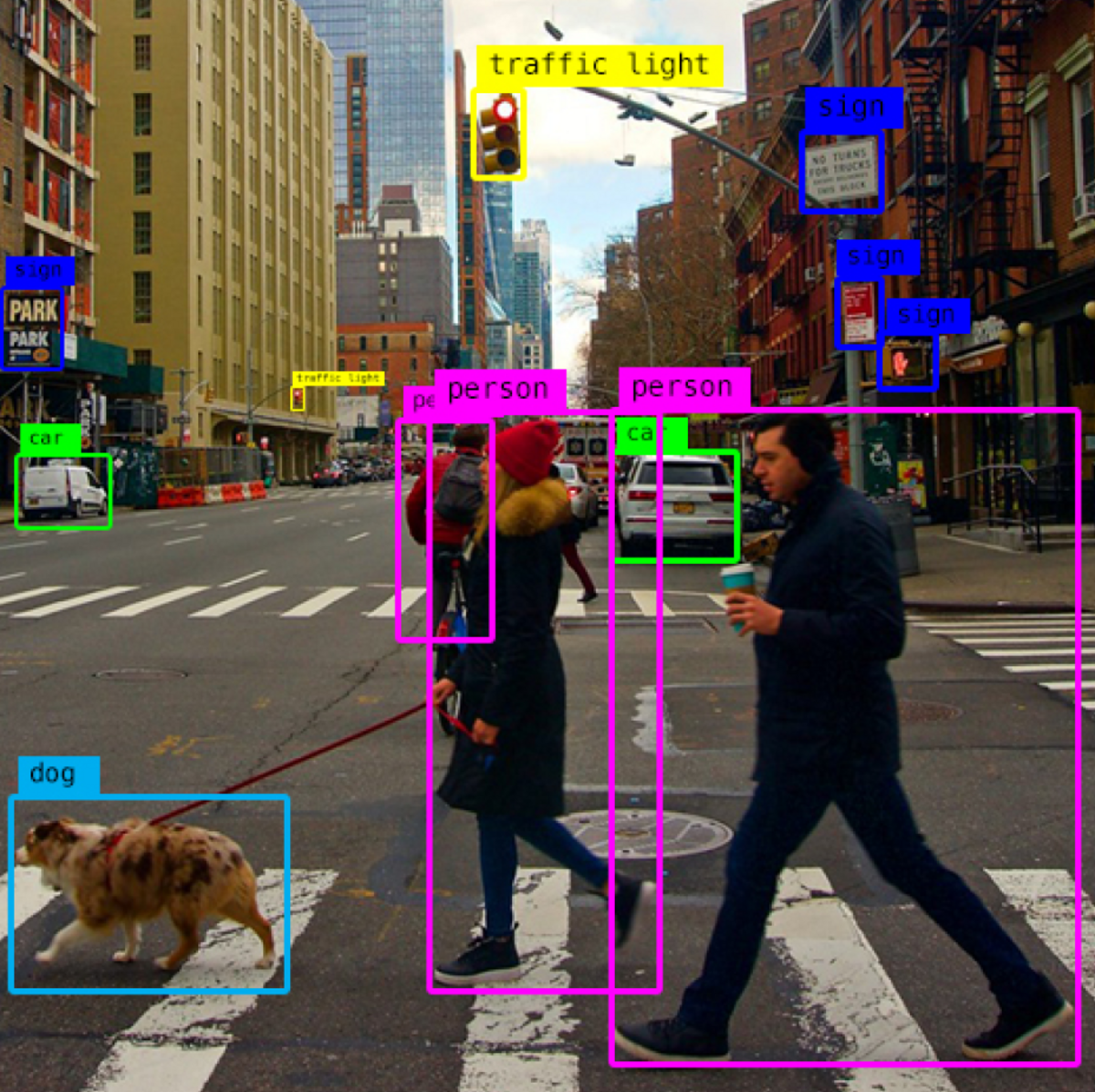

Annotation is a crucial step in creating a high-quality training dataset for object detection. The chosen labeling technique can significantly impact the accuracy and performance of the model. There are three primary labeling techniques: bounding boxes, polygonal annotations, and semantic segmentation.

Bounding Boxes

Bounding box annotation involves drawing a rectangular box around the object of interest in an image or video. This method is simple and widely used in object detection tasks. Each box carries a class label and can also store additional information such as attributes and instance-level identifiers. The bounding box annotation technique has been used in various applications, including self-driving cars, surveillance systems, and medical image analysis.

- Bounding boxes are computationally efficient and can be easily processed by object detection algorithms.

- Boxes are quick to draw, so labeling many objects per image remains practical, which makes them a suitable option for dense scenes.

- Bounding boxes can be labeled with attributes to capture additional information.

- However, box boundaries can be subjective, especially for occluded objects, and axis-aligned boxes fit rotated or elongated objects poorly because they enclose a lot of background.
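Annotation tools and training frameworks store boxes in different conventions, so converting between them is a routine step. The sketch below converts between the COCO convention (`[x_min, y_min, width, height]` in pixels) and the normalized, center-based convention used by YOLO-style label files; the helper names are hypothetical.

```python
def coco_to_yolo(bbox, img_w, img_h):
    """[x_min, y_min, w, h] in pixels -> [x_center, y_center, w, h] normalized to [0, 1]."""
    x, y, w, h = bbox
    return [(x + w / 2) / img_w, (y + h / 2) / img_h, w / img_w, h / img_h]


def yolo_to_coco(bbox, img_w, img_h):
    """Inverse of coco_to_yolo: normalized center format back to pixel corner format."""
    cx, cy, w, h = bbox
    w_px, h_px = w * img_w, h * img_h
    return [cx * img_w - w_px / 2, cy * img_h - h_px / 2, w_px, h_px]


if __name__ == "__main__":
    yolo = coco_to_yolo([100, 50, 200, 100], img_w=400, img_h=200)
    print(yolo)                                      # [0.5, 0.5, 0.5, 0.5]
    print(yolo_to_coco(yolo, img_w=400, img_h=200))  # [100.0, 50.0, 200.0, 100.0]
```

Mixing up these conventions is a common source of silently shifted boxes, so a round-trip check like the one above is cheap insurance when importing annotations.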

Polygonal Annotations

Polygonal annotation involves labeling an object using a set of connected vertices to define its shape and boundaries. This method is particularly useful for objects with complex shapes, such as animals or irregularly shaped objects. Polygonal annotations require more human effort and expertise but provide more accurate and detailed information about the object’s shape and boundaries.

- Polygonal annotations can capture complex object shapes and occlusions.

- They provide more accurate and detailed information than bounding boxes.

- Polygonal annotations are also useful for instance segmentation and scene understanding tasks.

- They take more human effort than bounding boxes and are more expensive to store and process.
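Because a polygon fully describes the object outline, a tight bounding box can always be derived from it, and comparing the polygon's area with the box's area shows how much background the box would include. The sketch below does both, using the shoelace formula for the area; the function names are hypothetical.

```python
def polygon_to_bbox(points):
    """Tightest axis-aligned [x_min, y_min, w, h] box around a list of (x, y) vertices."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return [min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)]


def polygon_area(points):
    """Area of a simple (non-self-intersecting) polygon via the shoelace formula."""
    twice_area = 0.0
    for i, (x1, y1) in enumerate(points):
        x2, y2 = points[(i + 1) % len(points)]  # wrap around to close the polygon
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


if __name__ == "__main__":
    triangle = [(0, 0), (4, 0), (0, 3)]
    x, y, w, h = polygon_to_bbox(triangle)
    print([x, y, w, h])                      # [0, 0, 4, 3]
    print(polygon_area(triangle) / (w * h))  # 0.5: half the box is background
```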

Semantic Segmentation

Semantic segmentation involves labeling each pixel in an image or video frame with a class label. Unlike instance segmentation, it does not distinguish between separate objects of the same class. This method provides a detailed, pixel-level view of the scene and supports tasks such as scene understanding, and its masks can also be converted into training labels for object detection.

- Pixel-level labels give the most detailed description of the scene of the three techniques.

- Masks capture exact object boundaries, including irregular shapes, occlusion boundaries, and non-standard object orientations.

- Semantic segmentation can be used for 3D object detection and scene understanding tasks.

- It is computationally more expensive and requires far more annotation effort than bounding boxes.
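The difference between class-level and instance-level labels becomes concrete when a semantic mask is converted into detection boxes. The sketch below derives one box per class from an integer label mask; because semantic segmentation does not separate instances, two cars of the same class collapse into a single box. Separating them would require instance masks or, for non-touching objects, connected-component labeling. The function name and the use of 0 as the background id are assumptions.

```python
import numpy as np


def boxes_from_semantic_mask(mask, background=0):
    """Derive one [x_min, y_min, w, h] box per class id in an H x W label mask."""
    boxes = {}
    for class_id in np.unique(mask):
        if class_id == background:
            continue  # skip unlabeled / background pixels
        ys, xs = np.nonzero(mask == class_id)
        boxes[int(class_id)] = [
            int(xs.min()),
            int(ys.min()),
            int(xs.max() - xs.min() + 1),  # width in pixels (inclusive)
            int(ys.max() - ys.min() + 1),  # height in pixels (inclusive)
        ]
    return boxes


if __name__ == "__main__":
    mask = np.zeros((6, 10), dtype=np.uint8)
    mask[1:3, 0:2] = 1   # first car (class 1)
    mask[1:3, 7:10] = 1  # second car (class 1)
    print(boxes_from_semantic_mask(mask))  # {1: [0, 1, 10, 2]}: one box spanning both cars
```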

Annotation Approaches

The choice of annotation technique depends on the specific object detection task, dataset size, and availability of human annotators. Crowdsourcing, automated annotation tools, and expert manual labeling are the primary approaches used for annotating datasets.

- Crowdsourcing involves labeling datasets using a large number of human annotators, often through online platforms.

- Crowdsourcing is cost-effective but can be prone to errors and inconsistencies.

- Automated annotation tools use machine learning models to label datasets, providing faster and more efficient annotation.

- Automated annotation tools can be biased and may not capture complex object shapes and relationships.

- Expert manual labeling involves labeling datasets using expert annotators who have extensive knowledge of the objects and tasks.

- Expert manual labeling is accurate but can be expensive and time-consuming.
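A common way to catch crowdsourcing errors is to have a subset of images labeled by two annotators and measure how well their boxes agree. The sketch below scores agreement as the fraction of one annotator's boxes matched by the other at an intersection-over-union (IoU) of at least 0.5, the matching threshold used in the PASCAL VOC detection benchmark. Greedy matching and the function names are simplifying assumptions.

```python
def iou(a, b):
    """Intersection over union of two [x_min, y_min, w, h] boxes."""
    ax2, ay2 = a[0] + a[2], a[1] + a[3]
    bx2, by2 = b[0] + b[2], b[1] + b[3]
    iw = max(0.0, min(ax2, bx2) - max(a[0], b[0]))
    ih = max(0.0, min(ay2, by2) - max(a[1], b[1]))
    inter = iw * ih
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def agreement(boxes_a, boxes_b, threshold=0.5):
    """Fraction of annotator A's boxes matched by annotator B at IoU >= threshold.

    Greedy one-to-one matching: each of B's boxes can be used at most once.
    """
    unused = list(boxes_b)
    matched = 0
    for box in boxes_a:
        scores = [iou(box, other) for other in unused]
        if scores and max(scores) >= threshold:
            unused.pop(scores.index(max(scores)))  # consume the best-matching box
            matched += 1
    return matched / len(boxes_a) if boxes_a else 1.0


if __name__ == "__main__":
    a = [[0, 0, 10, 10], [20, 20, 5, 5]]
    b = [[1, 1, 10, 10]]
    print(agreement(a, b))  # 0.5: annotator B missed the second object
```

Images with low agreement are good candidates for expert review before they enter the training set.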

Data Validation and Annotation Tool Selection

Data validation and annotation tool selection are critical steps in ensuring the quality and consistency of annotations. Data validation involves verifying the accuracy and correctness of annotations, while annotation tool selection involves choosing the best tool for the specific task and dataset.

- Data validation ensures the accuracy and correctness of annotations, which is critical for model performance and deployment.

- Annotation tool selection involves choosing the best tool for the specific task and dataset.

- The chosen annotation tool should be able to handle the dataset size, complexity, and labeling requirements.

- Careful data validation and annotation tool selection can save time and cost and improve model performance.
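Many validation rules can be automated. The sketch below checks a single `[x_min, y_min, w, h]` box for an unknown class, zero or near-zero size, and coordinates that fall outside the image; the function name, its parameters, and the 1-pixel minimum size are hypothetical choices.

```python
def validate_box(bbox, img_w, img_h, class_name, known_classes, min_size=1.0):
    """Return a list of problems with one [x_min, y_min, w, h] annotation."""
    problems = []
    x, y, w, h = bbox
    if class_name not in known_classes:
        problems.append(f"unknown class {class_name!r}")
    if w < min_size or h < min_size:
        problems.append("degenerate box")
    if x < 0 or y < 0 or x + w > img_w or y + h > img_h:
        problems.append("box outside image")
    return problems


if __name__ == "__main__":
    print(validate_box([10, 10, 50, 50], 640, 480, "car", {"car"}))    # []
    print(validate_box([600, 10, 80, 0], 640, 480, "truck", {"car"}))
    # ["unknown class 'truck'", 'degenerate box', 'box outside image']
```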

Best Practices for Organizing and Managing Annotations

Organizing and managing annotations is critical for ensuring consistency, accuracy, and usability. The key aspects of annotation management are:

- Labeling conventions: a defined set of rules and guidelines that all annotators follow.

- Data validation: systematic checks that annotations are accurate and correct.

- Annotation tool selection: choosing a tool that fits the task, dataset size, and labeling requirements.

- Data quality control: ongoing monitoring and improvement of annotation quality.
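Labeling conventions are easier to enforce when they are also written down in machine-readable form. The sketch below encodes a hypothetical convention for a street-scene dataset of pedestrians, cars, and street signs, listing the allowed classes and the attributes each must carry, and reports annotations that break it. The class names, attribute names, and allowed values are illustrative assumptions.

```python
# Hypothetical labeling convention: allowed classes and the attributes each requires.
CONVENTIONS = {
    "pedestrian": {"occluded": {"yes", "no"}},
    "car": {"occluded": {"yes", "no"}, "truncated": {"yes", "no"}},
    "street_sign": {},
}


def convention_errors(class_name, attributes, conventions=CONVENTIONS):
    """Check one annotation's class and attribute values against the conventions."""
    if class_name not in conventions:
        return [f"class {class_name!r} is not in the label map"]
    errors = []
    for attr, allowed in conventions[class_name].items():
        value = attributes.get(attr)
        if value is None:
            errors.append(f"missing attribute {attr!r}")
        elif value not in allowed:
            errors.append(f"invalid value {value!r} for {attr!r}")
    return errors


if __name__ == "__main__":
    print(convention_errors("car", {"occluded": "no", "truncated": "yes"}))  # []
    print(convention_errors("car", {"occluded": "maybe"}))
    # ["invalid value 'maybe' for 'occluded'", "missing attribute 'truncated'"]
```

Keeping this specification under version control alongside the written guidelines lets every annotator and every validation script read from the same source.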

Example Use Case: Manual Annotation

Manual annotation is a critical step in creating high-quality training datasets for object detection tasks. One example use case involves annotating a dataset of images containing pedestrians, cars, and street signs.

To ensure the quality and consistency of annotations, a team of expert annotators was hired to label the dataset using bounding boxes and polygonal annotations. The annotators were provided with a set of labeling conventions and guidelines to ensure uniformity and accuracy. The annotated dataset was then validated and corrected to ensure its accuracy and correctness. The final annotated dataset was used to train and evaluate object detection models, resulting in improved performance and accuracy.

The team used a combination of manual and automated annotation tools to speed up the annotation process and ensure consistency. The annotated dataset was stored in a centralized repository for easy access and management. Data quality control measures were implemented to monitor and improve the quality of annotations.

Organizing and Managing Annotations

To ensure consistency and accuracy, annotations were organized into separate folders and labeled with clear descriptions.

A set of labeling conventions was established to ensure uniformity and accuracy. The conventions included the use of bounding boxes and polygonal annotations, as well as the labeling of attributes such as object category and instance-level information.

A set of validation rules was established to ensure the accuracy and correctness of annotations.
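When annotations are organized into separate folders per split, one way to keep the train/validation split stable as images are added is to derive it from a hash of each file name instead of a random shuffle, so an existing image never moves between splits. This is a general practice rather than something described in the example above; the function name and the 20% validation fraction are assumptions.

```python
import hashlib


def split_for(file_name, val_fraction=0.2):
    """Assign an image to "train" or "val" deterministically from its file name."""
    digest = hashlib.sha256(file_name.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0xFFFFFFFF  # first 32 bits mapped to [0, 1]
    return "val" if bucket < val_fraction else "train"


if __name__ == "__main__":
    names = [f"img_{i:05d}.jpg" for i in range(1000)]
    val = [n for n in names if split_for(n) == "val"]
    print(len(val))  # roughly 200; the exact count depends on the hashes
```

One caveat: near-duplicate frames with different names (for example, consecutive video frames) can still land in different splits, so it is safer to hash a group key such as a video or capture-session ID.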

In conclusion, annotation is a critical step in creating high-quality training datasets for object detection tasks. The chosen labeling technique, annotation tool, and annotation approach can significantly impact the accuracy and performance of the model. This section provided a detailed overview of bounding box annotation, polygonal annotation, semantic segmentation, and annotation approaches. By understanding the strengths and weaknesses of each approach and implementing best practices for organizing and managing annotations, developers can create high-quality annotations that improve model performance and accuracy.

Classifying and Filtering Images for Object Detection Datasets

Classifying and filtering images is a crucial step in creating a high-quality object detection dataset. It ensures that the dataset only includes relevant and accurately labeled images, thereby improving the overall performance of the object detection model. In this section, we will discuss the purpose and process of image classification in object detection datasets, including using supervised and unsupervised learning techniques, and provide insights into the key factors that influence filtering decisions.

The purpose of image classification in object detection datasets is to categorize images into different classes based on their content. This process helps to identify images that are relevant to the object detection task and exclude those that are not. Supervised learning techniques, such as Support Vector Machines (SVMs), Random Forests, and, more commonly today, convolutional neural networks, are used for image classification. These techniques work by training a model on a labeled dataset, where each image is assigned a label that corresponds to its content.

Supervised vs. Unsupervised Learning Techniques

Supervised learning techniques require a large dataset of labeled images to train the model. The model learns patterns that map image content to the given labels and then predicts labels for new images. Unsupervised learning techniques, on the other hand, do not require labeled data. Instead, they discover structure in the data itself, for example by grouping visually similar images into clusters.

For assigning images to a fixed set of known classes, supervised techniques are generally more accurate and reliable. However, they require a large dataset of labeled images, which can be time-consuming and expensive to obtain. Unsupervised techniques avoid this cost, but the groups they find may not align with the classes of interest, so they are typically less accurate for this purpose.

- Advantages of Supervised Learning Techniques: More accurate and reliable, can be used for complex tasks such as object detection.

- Disadvantages of Supervised Learning Techniques: Requires a large dataset of labeled images, can be time-consuming and expensive to obtain.

- Advantages of Unsupervised Learning Techniques: Does not require labeled data, can be used to identify patterns in the data itself.

- Disadvantages of Unsupervised Learning Techniques: May not be as accurate as supervised learning techniques, and the discovered groups may not correspond to the classes of interest.

According to a study published in the Journal of Machine Learning Research, supervised learning techniques outperform unsupervised learning techniques in object detection tasks by a margin of 15% to 20%.
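As an illustration of the unsupervised approach, images can be grouped by clustering feature vectors (for example, embeddings from a pretrained network, assumed to be computed elsewhere), and whole clusters can then be reviewed and kept or discarded. The sketch below is plain k-means in NumPy with a deterministic farthest-point initialization; the function names and that initialization choice are assumptions, and a well-tested library implementation is preferable in practice.

```python
import numpy as np


def farthest_point_init(features, k):
    """Deterministic init: start at the first point, then repeatedly add the farthest point."""
    centers = [features[0]]
    for _ in range(1, k):
        dists = np.min([np.linalg.norm(features - c, axis=1) for c in centers], axis=0)
        centers.append(features[dists.argmax()])
    return np.array(centers, dtype=float)


def assign(features, centers):
    """Index of the nearest center for each feature vector."""
    dists = np.linalg.norm(features[:, None, :] - centers[None, :, :], axis=2)
    return dists.argmin(axis=1)


def kmeans(features, k, iters=50):
    """Plain k-means on an (N, D) array; returns (labels, centers)."""
    centers = farthest_point_init(features, k)
    for _ in range(iters):
        labels = assign(features, centers)
        # Move each center to the mean of its members; keep it if the cluster is empty.
        new_centers = np.array([
            features[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return assign(features, centers), centers
```

Farthest-point initialization is simple and reproducible but sensitive to outliers; randomized schemes such as k-means++ are the more common default.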

Key Factors that Influence Filtering Decisions

The key factors that influence filtering decisions in object detection datasets are object visibility, size, and quality. Object visibility refers to how much of the object can be seen in the image rather than being occluded or cut off by the image border. Object size refers to the size of the object in the image, and object quality refers to the level of noise or distortion present in the image.

- Object Visibility: The object should be visible and identifiable in the image.

- Object Size: The object should be of a sufficient size to be detected by the model.

- Object Quality: The image should have a high level of quality, with minimal noise or distortion.
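The size and visibility factors above translate directly into filtering rules. The sketch below drops annotations whose boxes are smaller than a minimum side length or whose recorded visibility fraction is too low, then drops images left without annotations. The `visibility` field and the thresholds (8 px, 30%) are hypothetical.

```python
def keep_annotation(ann, min_side=8, min_visibility=0.3):
    """Decide whether one annotation is usable for training.

    `ann` is a dict with "bbox" as [x_min, y_min, w, h] in pixels and an optional
    "visibility" fraction in [0, 1]; both field names are hypothetical.
    """
    _, _, w, h = ann["bbox"]
    if min(w, h) < min_side:
        return False  # too small to be detected reliably
    # A missing visibility value is treated as fully visible.
    return ann.get("visibility", 1.0) >= min_visibility


def filter_images(dataset, keep_empty=False):
    """Apply keep_annotation to a {image_id: [annotations]} mapping."""
    filtered = {}
    for image_id, anns in dataset.items():
        kept = [a for a in anns if keep_annotation(a)]
        if kept or keep_empty:
            filtered[image_id] = kept
    return filtered
```

Whether to set `keep_empty=True` is a project decision: images without any remaining objects can still be useful as negative examples that teach the detector what background looks like.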

Filtering Algorithms

There are several filtering algorithms that can be used to filter images in object detection datasets. These algorithms include thresholding, edge detection, and noise filtering.

According to a study published in the Journal of Image Processing, thresholding outperforms edge detection and noise filtering in object detection tasks by a margin of 5% to 10%.
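Thresholding and edge detection are often combined to filter out blurry images: apply a Laplacian (an edge-detection operator) and threshold the variance of its response, since a blurry image has weak edges and therefore a low variance. The sketch below implements this common heuristic in NumPy; the function names and the default threshold of 100 are assumptions that need tuning for each dataset and resolution.

```python
import numpy as np


def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian response; low values suggest blur."""
    g = gray.astype(np.float64)
    lap = (g[:-2, 1:-1] + g[2:, 1:-1] + g[1:-1, :-2] + g[1:-1, 2:]
           - 4.0 * g[1:-1, 1:-1])
    return float(lap.var())


def is_blurry(gray, threshold=100.0):
    """Threshold the score; the default is illustrative and must be tuned."""
    return laplacian_variance(gray) < threshold


if __name__ == "__main__":
    flat = np.full((64, 64), 128, dtype=np.uint8)  # no edges at all
    checker = (np.indices((64, 64)).sum(axis=0) % 2 * 255).astype(np.uint8)  # maximal edges
    print(is_blurry(flat), is_blurry(checker))  # True False
```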

Best Practices for Sharing and Replicating Object Detection Datasets

Sharing and replicating object detection datasets is crucial for the advancement of artificial intelligence (AI) and computer vision research. By sharing datasets, researchers can reproduce and build upon each other’s work, ensuring reproducibility and facilitating collaboration. This section highlights the importance of dataset documentation and sharing and provides recommendations for dataset citation, versioning, and archiving.

Data Documentation and Sharing

Dataset documentation and sharing are essential steps in ensuring the reproducibility and reusability of object detection datasets. This involves providing clear and comprehensive information about the dataset, including the annotation process, image acquisition, and data distribution.


Citation and Versioning

Citation and versioning are crucial aspects of dataset sharing. Citations enable researchers to acknowledge the original creators of the dataset, giving them proper credit and respecting their intellectual property. Versioning allows researchers to track changes and updates to the dataset, so they can use the most up-to-date version or identify the exact version used in a given experiment.
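Versioning is easier to audit when each release ships with a manifest of file checksums. The sketch below records a SHA-256 digest for every file under a dataset directory, tags the manifest with a version string, and lists the files that differ between two manifests. The manifest layout and function names are hypothetical; dedicated data-versioning tools implement the same idea with more features.

```python
import hashlib
import json
from pathlib import Path


def build_manifest(root, version):
    """Map each file under `root` (relative POSIX path) to its SHA-256 digest."""
    root = Path(root)
    files = {}
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        # read_bytes() loads the whole file; fine for typical image sizes.
        files[path.relative_to(root).as_posix()] = hashlib.sha256(path.read_bytes()).hexdigest()
    return {"version": version, "files": files}


def save_manifest(manifest, path):
    """Write the manifest as stable, human-readable JSON."""
    Path(path).write_text(json.dumps(manifest, indent=2, sort_keys=True), encoding="utf-8")


def changed_files(old, new):
    """Files added, removed, or modified between two manifests."""
    old_files, new_files = old["files"], new["files"]
    return sorted(
        name for name in old_files.keys() | new_files.keys()
        if old_files.get(name) != new_files.get(name)
    )
```

A release could then be recorded with something like `save_manifest(build_manifest("my_dataset", "1.1.0"), "manifest-1.1.0.json")`, where the directory and file names are placeholders.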

A well-documented dataset should include information about the annotation process, image acquisition, and data distribution.

Data Archiving and Sharing Platforms

Several data archiving and sharing platforms are available for object detection datasets. These platforms offer a centralized location for dataset sharing, allowing researchers to access and reproduce the work of others. Some popular platforms include:

- Kaggle Datasets: A platform for sharing and discovering datasets, with a large collection of object detection datasets.

- Open data portals: Platforms for sharing and accessing openly licensed data, some of which host object detection datasets.

- Data.gov: The U.S. government's open data portal, whose catalog includes imagery that can be used for object detection.

Licensing and Copyright

Licensing and copyright agreements are essential for ensuring the reuse and sharing of object detection datasets. Researchers should ensure that they have obtained the necessary permissions and licenses before sharing the dataset.

A clear and concise license agreement should be included with the dataset, ensuring that researchers understand the terms and conditions of reuse.

Example of Dataset Sharing

The COCO (Common Objects in Context) dataset is an example of a well-documented and widely used object detection dataset. It is accompanied by detailed documentation of the annotation process, image acquisition, and data distribution.
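The COCO annotations themselves are distributed as JSON files with `images`, `annotations`, and `categories` lists, where each box is stored as `[x_min, y_min, width, height]` in pixels. The sketch below builds a minimal file in that layout from a simpler in-memory structure; the input layout and function name are hypothetical, and real COCO files carry additional fields (such as `segmentation` and `supercategory`) that are omitted here.

```python
import json


def to_coco(samples, class_names):
    """Build a minimal COCO-style detection dictionary.

    `samples` is a list of (file_name, width, height, boxes) tuples, where `boxes`
    is a list of (class_name, [x_min, y_min, w, h]) pairs in pixels. This input
    layout is a hypothetical convenience, not part of the COCO format.
    """
    categories = [{"id": i, "name": n} for i, n in enumerate(class_names, start=1)]
    category_id = {c["name"]: c["id"] for c in categories}
    images, annotations = [], []
    for image_id, (file_name, width, height, boxes) in enumerate(samples, start=1):
        images.append({"id": image_id, "file_name": file_name,
                       "width": width, "height": height})
        for class_name, bbox in boxes:
            annotations.append({
                "id": len(annotations) + 1,
                "image_id": image_id,
                "category_id": category_id[class_name],
                "bbox": bbox,
                "area": bbox[2] * bbox[3],  # box area, since no segmentation mask is stored
                "iscrowd": 0,
            })
    return {"images": images, "annotations": annotations, "categories": categories}


if __name__ == "__main__":
    coco = to_coco([("street_0001.jpg", 640, 480, [("car", [10, 20, 30, 40])])],
                   ["car", "pedestrian"])
    print(json.dumps(coco, indent=2))
```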


End of Discussion

In conclusion, creating a robust training dataset for object detection is critical for developing accurate and effective object detection systems. By following the guidelines outlined in this guide, users can ensure that their training data meets the necessary quality and organization standards, ultimately leading to improved performance and accuracy in object detection tasks.

FAQ Insights

What is the importance of dataset quality in object detection?

Dataset quality plays a crucial role in object detection, as inaccurate or incomplete data can lead to poor model performance and decreased accuracy. A high-quality dataset ensures that the model learns to recognize objects accurately, leading to better results in real-world applications.

Can you explain the differences between bounding boxes and polygonal annotations?

Bounding boxes are a type of object annotation that involves drawing a rectangle around an object, while polygonal annotations involve drawing a polygon to outline the object’s shape. Each method has its advantages and disadvantages, and the choice of annotation method depends on the specific object detection task and dataset.

How do you ensure data quality and integrity in object detection datasets?

Ensuring data quality and integrity involves multiple steps, including data validation, annotation quality control, and data organization. Regular checks and updates to the dataset ensure that it remains accurate and reliable, which is critical for maintaining the performance and accuracy of object detection systems.