Matrix multiplication underlies many scientific and engineering applications, from solving linear systems to transforming 3D graphics. Before we dive into the mechanics of multiplying matrices, let’s take a step back and examine the foundational concepts that make this tool so versatile and useful.

Matrix multiplication lies at the heart of linear algebra, yet it is a topic that often intimidates newcomers. This guide aims to demystify the process and show how to perform matrix multiplication with confidence.

Matrix Size and Dimensionality

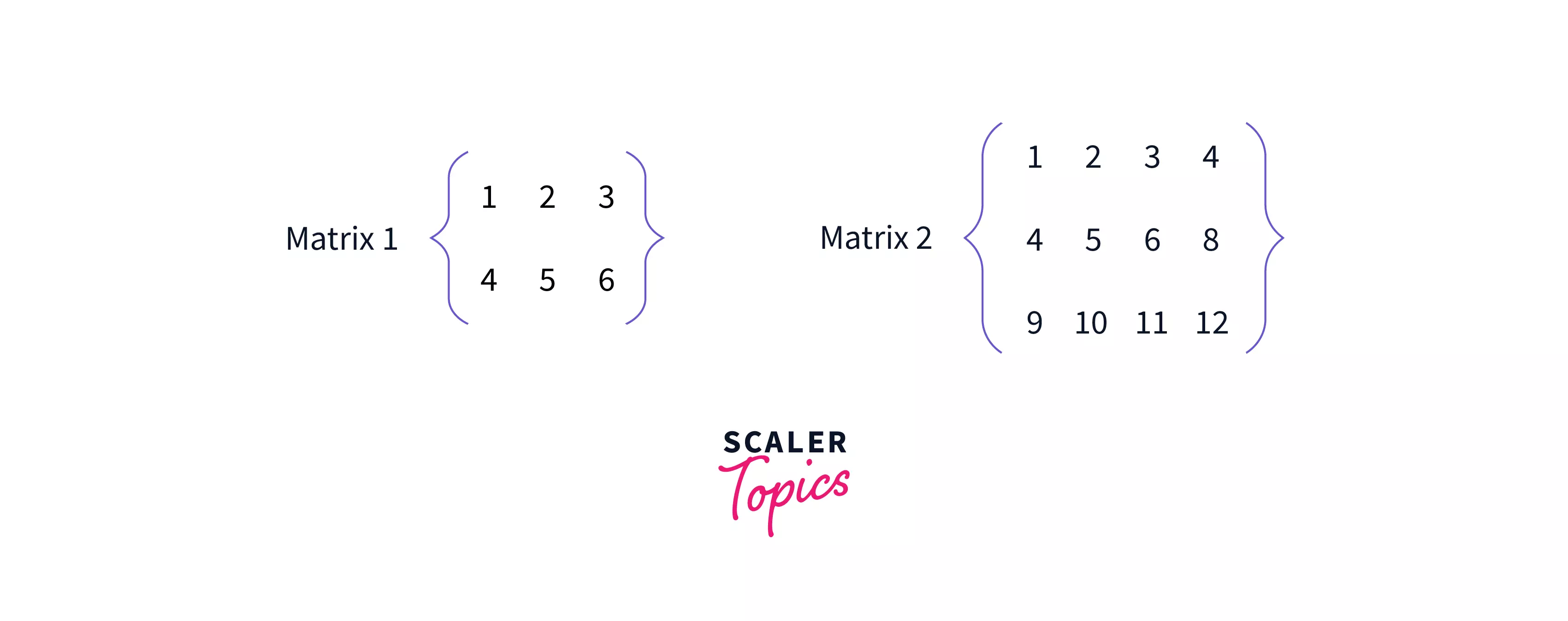

Matrix size and dimensionality play a crucial role in matrix multiplication, as they determine whether two matrices are compatible for multiplication. In this section, we will explore how matrix dimensions affect multiplication and introduce the concept of compatible matrices.

Matrix dimensions refer to the number of rows and columns in a matrix. Typically, a matrix is represented as a 2D array of numbers, where each row represents one row of the matrix, and each column represents one column of the matrix.

When multiplying two matrices, the number of columns in the first matrix must match the number of rows in the second matrix. For example, consider two matrices A (3×2) and B (2×4). Since the number of columns in matrix A (2) matches the number of rows in matrix B (2), the matrices are compatible for multiplication.

Matrix Compatibility for Multiplication

To determine if two matrices are compatible for multiplication, we need to check if the number of columns in the first matrix matches the number of rows in the second matrix. If this condition is met, the matrices can be multiplied.

For example, consider the following matrices:

$A = \begin{bmatrix}1 & 2\\3 & 4\\5 & 6\end{bmatrix}$ (3×2)

$B = \begin{bmatrix}7 & 8 & 9 & 10\\11 & 12 & 13 & 14\end{bmatrix}$ (2×4)

In this case, the number of columns in matrix A (2) matches the number of rows in matrix B (2), so the matrices are compatible for multiplication.
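The compatibility rule can be expressed as a small check. The following Python sketch is illustrative only: the helper name `can_multiply` and the nested-list representation of matrices are assumptions made for this example, not part of any library.

```python
def can_multiply(first, second):
    """Return True if the product first * second is defined.

    Assumes each matrix is a non-empty list of equal-length rows.
    """
    columns_in_first = len(first[0])
    rows_in_second = len(second)
    return columns_in_first == rows_in_second


A = [[1, 2], [3, 4], [5, 6]]            # 3×2
B = [[7, 8, 9, 10], [11, 12, 13, 14]]   # 2×4

print(can_multiply(A, B))  # True: A has 2 columns and B has 2 rows
print(can_multiply(B, A))  # False: B has 4 columns but A has 3 rows
```

Note that the check depends on the order of the operands: AB is defined here, while BA is not.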

Examples of Incompatible Matrices

On the other hand, if the number of columns in the first matrix does not match the number of rows in the second matrix, the matrices are incompatible for multiplication. For example, consider the following matrices:

$B = \begin{bmatrix}7 & 8 & 9 & 10\\11 & 12 & 13 & 14\end{bmatrix}$ (2×4)

$C = \begin{bmatrix}7 & 8 & 9\\11 & 12 & 13\end{bmatrix}$ (2×3)

In this case, the number of columns in matrix B (4) does not match the number of rows in matrix C (2), so the product BC is undefined. Order matters: a 3×2 matrix multiplied by C would be defined, because its 2 columns match the 2 rows of C.

Conclusion

In conclusion, matrix size and dimensionality play a crucial role in matrix multiplication. Two matrices can only be multiplied if the number of columns in the first matrix matches the number of rows in the second matrix. We can use this knowledge to determine whether two matrices are compatible for multiplication and to identify incompatible matrices.

The dimensions of a matrix are written as x × y, where x is the number of rows and y is the number of columns.

Matrix Multiplication Algorithms

Matrix multiplication is a fundamental operation in linear algebra, enabling the computation of the product of two matrices. This process is widely used in various fields, including physics, engineering, computer science, and data analysis. To multiply two matrices, we follow a set of steps that ensure accurate and efficient computation.

Step 1: Matrix Dimensionality Check

The first step in matrix multiplication is to verify that the matrices meet the required dimensionality for multiplication. Specifically, if A is an (m × n) matrix and B is an (n × p) matrix, then the product AB is a valid (m × p) matrix. This step ensures that we are multiplying compatible matrices, avoiding errors in dimensionality.

Step 2: Initialize the Resultant Matrix

Once we confirm that the matrices are compatible for multiplication, we create a new (m × p) matrix, denoted as C, which will store the result of the multiplication. This matrix is initially filled with zeros, and each element c_ij will be computed during the multiplication process.

Step 3: Multiply Corresponding Elements

We iterate through the rows of the first matrix A and the columns of the second matrix B. For each row i of A and each column j of B, we multiply a_ik by b_kj for every k from 1 to n and sum the products to obtain the element c_ij in the resultant matrix C. This process is repeated for every pair (i, j), so that all m × p elements of C are computed.

Step 4: Store the Result

After computing all the elements in the resultant matrix C, we store the final values in the matrix. The resulting matrix C now represents the product of the original matrices A and B.

Step 5: Verification and Validation

As a final step, we verify that the matrix multiplication was performed correctly by checking the dimensions and values of the resultant matrix. If any issues or discrepancies arise, we may need to revisit the earlier steps or check for errors in the input matrices.
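The steps above can be sketched in plain Python. This is a minimal illustration, assuming matrices are stored as non-empty nested lists; the function name `matmul` is chosen for this example.

```python
def matmul(A, B):
    """Multiply an m×n matrix A by an n×p matrix B (nested lists)."""
    m, n, p = len(A), len(A[0]), len(B[0])

    # Step 1: dimensionality check (columns of A must equal rows of B).
    if len(B) != n:
        raise ValueError(f"cannot multiply {m}x{n} by {len(B)}x{p}")

    # Step 2: initialize the m×p result with zeros.
    C = [[0] * p for _ in range(m)]

    # Steps 3 and 4: c_ij is the sum over k of a_ik * b_kj, stored in C.
    for i in range(m):
        for j in range(p):
            for k in range(n):
                C[i][j] += A[i][k] * B[k][j]

    # Step 5: a basic sanity check on the result's dimensions.
    assert len(C) == m and all(len(row) == p for row in C)
    return C


A = [[1, 2], [3, 4], [5, 6]]            # 3×2
B = [[7, 8, 9, 10], [11, 12, 13, 14]]   # 2×4

print(matmul(A, B))
# [[29, 32, 35, 38], [65, 72, 79, 86], [101, 112, 123, 134]]
```

For example, the top-left entry is 1·7 + 2·11 = 29, the dot product of the first row of A with the first column of B.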

Common Matrix Multiplication Algorithms

There are several matrix multiplication algorithms available, each with its strengths and weaknesses. Here are a few popular algorithms used for efficient matrix multiplication:

1. Naive Matrix Multiplication Algorithm

This algorithm computes each element of the result as the dot product of a row of the first matrix and a column of the second, and stores it in the resultant matrix. For two n × n matrices, the time complexity is O(n^3), which makes it slow for large matrices.

2. Strassen’s Matrix Multiplication Algorithm

Strassen’s algorithm, developed by Volker Strassen in 1969, is a divide-and-conquer approach that reduces the time complexity of matrix multiplication to O(n^2.81). This algorithm splits the matrices into smaller sub-matrices, computes the product of these sub-matrices, and then combines the results to obtain the final product.

3. Coppersmith-Winograd Algorithm

This algorithm, published by Don Coppersmith and Shmuel Winograd in 1987 (journal version 1990), further reduces the asymptotic time complexity of matrix multiplication to O(n^2.376). It relies on considerably more intricate algebraic constructions than Strassen’s sub-matrix recursion.
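To make the divide-and-conquer idea behind Strassen’s algorithm concrete, here is a minimal Python sketch. It assumes square matrices whose size is a power of two, stored as nested lists, and recurses all the way down to 1×1 blocks; practical implementations typically switch to the naive method below some block size. The helper names are chosen for this example.

```python
def _add(X, Y):
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def _sub(X, Y):
    return [[x - y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def _quarters(M):
    """Split a square matrix into its four half-size blocks."""
    h = len(M) // 2
    return ([r[:h] for r in M[:h]], [r[h:] for r in M[:h]],
            [r[:h] for r in M[h:]], [r[h:] for r in M[h:]])


def strassen(A, B):
    """Multiply two n×n matrices, n a power of two, using 7 recursive products."""
    n = len(A)
    if n == 1:
        return [[A[0][0] * B[0][0]]]

    A11, A12, A21, A22 = _quarters(A)
    B11, B12, B21, B22 = _quarters(B)

    # Seven sub-products instead of the eight a direct block product needs.
    M1 = strassen(_add(A11, A22), _add(B11, B22))
    M2 = strassen(_add(A21, A22), B11)
    M3 = strassen(A11, _sub(B12, B22))
    M4 = strassen(A22, _sub(B21, B11))
    M5 = strassen(_add(A11, A12), B22)
    M6 = strassen(_sub(A21, A11), _add(B11, B12))
    M7 = strassen(_sub(A12, A22), _add(B21, B22))

    # Combine the sub-products into the four blocks of the result.
    C11 = _add(_sub(_add(M1, M4), M5), M7)
    C12 = _add(M3, M5)
    C21 = _add(M2, M4)
    C22 = _add(_add(_sub(M1, M2), M3), M6)

    top = [left + right for left, right in zip(C11, C12)]
    bottom = [left + right for left, right in zip(C21, C22)]
    return top + bottom


print(strassen([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

Using seven recursive products per level rather than eight is what gives the exponent log2(7) ≈ 2.81.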

Comparison of Algorithms

In comparing these matrix multiplication algorithms, we consider factors such as time complexity, scalability, and practical efficiency. Naive matrix multiplication is the simplest approach and works well for small matrices, but its O(n^3) cost grows quickly with matrix size. Strassen’s algorithm has a lower asymptotic complexity, although its extra additions and memory use mean it pays off only for sufficiently large matrices. The Coppersmith-Winograd algorithm reduces the exponent further, but its constant factors are so large that it is of theoretical rather than practical interest.

The choice of matrix multiplication algorithm depends on the specific requirements of the application, including the size of the matrices, the desired level of computational efficiency, and the availability of computational resources.

Matrix Multiplication with Zero Pivots

Matrix multiplication is a fundamental operation in linear algebra, widely used in applications such as data analysis, machine learning, and computer graphics. Multiplication itself uses only multiplications and additions, so a zero entry never causes it to fail. Zero pivots become a problem in the elimination-based computations that often accompany multiplication, such as solving linear systems, computing LU factorizations, or inverting a matrix. In this section, we discuss the challenges zero pivots pose in those computations and provide strategies for overcoming them.

The Problems Caused by Zero Pivots

A zero pivot is a zero in the pivot position, that is, the diagonal entry used as a divisor at a given step of Gaussian elimination. A zero pivot that cannot be removed by swapping rows indicates a singular matrix, and a pivot very close to zero often signals an ill-conditioned one; both are difficult to work with.

Dividing by a zero pivot causes a division-by-zero error during elimination, which can produce infinite or NaN (Not a Number) values that then propagate through any subsequent matrix products. Very small pivots also cause numerical instability by amplifying rounding errors in the calculation.

Examples and Scenarios Where Zero Pivots May Occur

Zero pivots can occur in various scenarios, including:

- When dealing with sparse matrices, where most elements are zero.

- When working with matrices that have been created from noisy or incomplete data.

- When performing numerical computations that involve rounding errors or approximations.

- When working with large matrices whose rows or columns are nearly linearly dependent, as happens with highly correlated data.

Solutions for Handling Zero Pivots

To overcome the challenges associated with zero pivots, there are several strategies that can be employed:

- Use pivoting, that is, row (and possibly column) permutations, to move a nonzero entry, ideally the one largest in magnitude, into the pivot position.

- Use regularization, such as Tikhonov regularization, which adds a small multiple of the identity matrix and so shifts the diagonal away from zero, to improve the numerical stability of the calculation.

- Use iterative methods, such as the Gauss-Seidel method or the SOR method, to solve linear systems without an explicit factorization. Both methods still divide by the diagonal entries, so the rows may need to be reordered first to make the diagonal nonzero.

- Use more robust factorizations, such as the QR decomposition or the singular value decomposition (SVD), which handle singular and nearly singular matrices more gracefully.
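The pivoting strategy listed above can be illustrated with a short Python sketch of Gaussian elimination with partial pivoting. It is a teaching example under simple assumptions (a square system stored as nested lists, an exact-zero test for singularity), and the function name is chosen for this example.

```python
def solve_with_partial_pivoting(A, b):
    """Solve A x = b by Gaussian elimination with row swaps."""
    n = len(A)
    # Work on an augmented copy [A | b] so the inputs are not modified.
    M = [[float(v) for v in row] + [float(rhs)] for row, rhs in zip(A, b)]

    for col in range(n):
        # Choose the row at or below `col` with the largest entry in this column.
        pivot_row = max(range(col, n), key=lambda r: abs(M[r][col]))
        if M[pivot_row][col] == 0.0:
            raise ValueError("matrix is singular: no nonzero pivot available")
        M[col], M[pivot_row] = M[pivot_row], M[col]

        # Eliminate the entries below the pivot.
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]

    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        known = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - known) / M[i][i]
    return x


# The first pivot of this matrix is zero, so elimination without a row swap
# would divide by zero; swapping the two rows removes the problem.
A = [[0, 1], [1, 0]]
b = [2, 3]
print(solve_with_partial_pivoting(A, b))  # [3.0, 2.0]
```

The matrix in this example is perfectly well behaved; only the order of its rows makes the first pivot zero, which is why a row swap is enough.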

In summary, zero pivots do not affect matrix multiplication itself, but they cause problems in the elimination-based computations that accompany it: they signal singular or ill-conditioned matrices, cause division-by-zero errors, and lead to numerical instability. The strategies above help overcome these challenges and achieve accurate and reliable results.

Matrix Multiplication with Non-Integer Elements

In matrix multiplication, elements can be any real or complex numbers, not limited to integers. This flexibility allows for a wide range of applications, particularly in fields that involve continuous variables or precise calculations. Matrix multiplication with non-integer elements can be challenging, especially when dealing with floating-point arithmetic or decimal expansions.

Mathematical Principles Underlying Matrix Multiplication with Non-Integer Elements

In matrix multiplication, each element of the resulting matrix is a sum of products of elements from the input matrices. When the elements are non-integers, the calculations are usually carried out in floating-point arithmetic, which represents most values only approximately and therefore introduces rounding errors. These errors matter most when many products are summed, as in large matrices, or when results must be accurate to many decimal places.

For a matrix product C = AB, where A is of size m × n, B is of size n × p, and C is of size m × p, each element c_ij of C is calculated as $c_{ij} = \sum_{k=1}^{n} a_{ik} b_{kj}$.

The accuracy of the calculations can be improved by choosing a suitable data type, such as IEEE 754 double-precision floating-point numbers, or by using decimal or arbitrary-precision arithmetic packages. Numerically stable summation techniques, such as compensated (Kahan) summation, also reduce the accumulated impact of rounding errors.
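A small Python experiment shows the kind of rounding error involved. It compares a product computed with ordinary floats against the same product computed exactly with `fractions.Fraction` from the standard library; the `matmul` helper is a minimal one written for this example.

```python
from fractions import Fraction
import math


def matmul(A, B):
    """Naive product of nested-list matrices; works for any numeric type."""
    n = len(B)
    return [[sum(A[i][k] * B[k][j] for k in range(n))
             for j in range(len(B[0]))]
            for i in range(len(A))]


# Floating-point version: 0.1 and 0.2 have no exact binary representation.
c_float = matmul([[0.1, 0.2]], [[1.0], [1.0]])[0][0]

# Exact rational version of the same 1×2 by 2×1 product.
c_exact = matmul([[Fraction(1, 10), Fraction(2, 10)]],
                 [[Fraction(1)], [Fraction(1)]])[0][0]

print(c_float == 0.3)              # False: a tiny rounding error remains
print(math.isclose(c_float, 0.3))  # True: equal within a tolerance
print(c_exact == Fraction(3, 10))  # True: rational arithmetic is exact
```

In practice, floating-point results are usually compared with a tolerance, as `math.isclose` does here, rather than with exact equality.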

Practical Applications and Scenarios

There are several areas where matrix multiplication with non-integer elements is crucial. Some examples include:

- Computer graphics and rendering, where matrix operations are used to transform and manipulate 3D models, often involving floating-point calculations.

- Signal processing and image analysis, where matrix operations are used to filter and transform signals, often with non-integer coefficients.

- Machine learning and data analytics, where matrix operations are used to perform tasks like regression, classification, and clustering, often involving non-integer weights and coefficients.

- Control systems and robotics, where matrix operations are used to model and analyze the behavior of complex systems, often with non-integer parameters and coefficients.

In these and other areas, matrix multiplication with non-integer elements is a fundamental operation, enabling the solution of complex problems and the simulation of real-world phenomena. By understanding the mathematical principles and practical applications of matrix multiplication with non-integer elements, we can develop more accurate and efficient numerical methods and computational tools.

Example Applications

Consider a simple signal processing example, where a 2D matrix A represents a signal and matrix B represents a filter. The matrix product C = AB represents the filtered signal. In this scenario, A and B contain non-integer entries, so computing C involves floating-point arithmetic. Choosing a data type with sufficient precision and relying on well-tested numerical libraries helps keep the results accurate and stable.

Another example is in computer graphics, where matrix operations are used to transform and manipulate 3D models. Transformations such as rotations involve non-integer coefficients (sines and cosines, for instance) and therefore also rely on floating-point arithmetic.
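As a small stand-in for the graphics case, the following Python sketch rotates a 2D point by 90 degrees using a rotation matrix. The function names are chosen for this example, and a 2D rotation is used only to keep the matrices small; 3D transformations work the same way with larger matrices.

```python
import math


def rotation_matrix(theta):
    """2×2 matrix that rotates a point counter-clockwise by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]


def apply(M, v):
    """Multiply matrix M by column vector v (given as a flat list)."""
    return [sum(M[i][k] * v[k] for k in range(len(v))) for i in range(len(M))]


R = rotation_matrix(math.pi / 2)
x, y = apply(R, [1.0, 0.0])

# Mathematically the result is (0, 1); in floating point, x is only
# approximately zero, so the comparison uses a tolerance.
print(math.isclose(x, 0.0, abs_tol=1e-12), math.isclose(y, 1.0))  # True True
```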

Conclusion

Matrix multiplication with non-integer elements is a critical operation in a wide range of applications, from computer graphics and signal processing to machine learning and control systems. By understanding the mathematical principles underlying matrix multiplication with non-integer elements and exploring the practical scenarios where these operations are used, we can develop more accurate and efficient numerical methods and computational tools.

Parallelizing Matrix Multiplication

Parallelizing matrix multiplication is a crucial step in improving the performance of large-scale computations. As matrices grow in size, the cost of matrix multiplication grows rapidly (cubically in n for the standard algorithm on n × n matrices), making it a significant bottleneck in many applications. By exploiting parallel processing capabilities, such as multi-core processors or distributed computing architectures, we can significantly reduce the computation time and make matrix multiplication more efficient.

Strategies for Parallelizing Matrix Multiplication

Parallelizing matrix multiplication involves splitting the computation into smaller tasks that can be executed concurrently by multiple processing units. There are several strategies for achieving this, including:

- Blocking: One of the most common strategies for parallelizing matrix multiplication is by blocking. This involves dividing the input matrices into smaller sub-matrices, called blocks, that can be processed independently by different processors. The key idea is to group the elements of the matrix into blocks that fit in the cache hierarchy of the processor, minimizing memory access times. By blocking, we can reduce the number of memory accesses and take advantage of cache locality.

- Data Partitioning: Another approach to parallelizing matrix multiplication is by partitioning the input data among different processors. This involves dividing the matrices into smaller sub-matrices or vectors that can be processed independently by different processors. Data partitioning can be performed in a round-robin manner, where each processor receives a portion of the data, or by dividing the data based on a predetermined criterion, such as the value or the index of the elements.

- Thread-Level Parallelism: Most systems have a limited number of processor cores, and threads make it possible to use them fully. Threads are lightweight units of execution within a process; they share memory and are cheaper to create and manage than separate processes. Because threads can run concurrently, each one can compute a different part of the result matrix.

By using these strategies, we can significantly improve the performance of matrix multiplication on parallel architectures.
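The blocking idea can be sketched in Python as follows. The code is serial and written in pure Python, so it illustrates only the loop structure, not the cache benefit, which shows up in compiled languages; the function name and the default block size are assumptions made for this example.

```python
def blocked_matmul(A, B, block=2):
    """Multiply nested-list matrices one block (tile) at a time."""
    m, n, p = len(A), len(B), len(B[0])
    if len(A[0]) != n:
        raise ValueError("columns of A must equal rows of B")
    C = [[0] * p for _ in range(m)]

    # The three outer loops walk over blocks; the three inner loops do the
    # ordinary multiplication restricted to one block of A and one of B.
    for i0 in range(0, m, block):
        for k0 in range(0, n, block):
            for j0 in range(0, p, block):
                for i in range(i0, min(i0 + block, m)):
                    for k in range(k0, min(k0 + block, n)):
                        a_ik = A[i][k]
                        for j in range(j0, min(j0 + block, p)):
                            C[i][j] += a_ik * B[k][j]
    return C


A = [[1, 2], [3, 4], [5, 6]]            # 3×2
B = [[7, 8, 9, 10], [11, 12, 13, 14]]   # 2×4

print(blocked_matmul(A, B))
# [[29, 32, 35, 38], [65, 72, 79, 86], [101, 112, 123, 134]]
```

Blocks of C that share no rows or columns are independent of one another, which is what allows different processors to work on them at the same time.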

Comparison of Parallelization Strategies

Each of the parallelization strategies has its own advantages and disadvantages, and the choice of strategy depends on the specific application and the underlying architecture. Here, we compare the performance of the different strategies:

- Blocking versus Data Partitioning: Both blocking and data partitioning can be used to parallelize matrix multiplication, but blocking is generally more efficient for matrix multiplication because it takes advantage of cache locality. Data partitioning, on the other hand, is more flexible and can be used for more complex computations.

- Thread-Level Parallelism: Thread level parallelism can be more efficient due to its lower overheads in comparison with process-level parallelism and thus, can be used for many matrix operations.

Implementation of Parallel Matrix Multiplication

The implementation of parallel matrix multiplication involves several key steps:

- Blocking: Implement blocking by dividing the input matrices into smaller sub-matrices, called blocks, that fit in the cache hierarchy of the processor.

- Data Partitioning: Implement data partitioning by dividing the input data among different processors.

- Thread-Level Parallelism: Implement thread-level parallelism by creating multiple threads that execute concurrently.

- Synchronization: Ensure that the threads synchronize their updates to the result matrix to avoid data inconsistencies.

By following these steps, we can effectively parallelize matrix multiplication and improve its performance on parallel architectures.
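A minimal sketch of these steps in Python, using `ThreadPoolExecutor` from the standard library, might look like this. The data is partitioned by rows, so each worker produces its own band of the result and no locking is required. One caveat: in standard CPython, the global interpreter lock prevents threads from speeding up pure-Python arithmetic, so this shows the structure rather than a real speedup. The function names are chosen for this example.

```python
from concurrent.futures import ThreadPoolExecutor


def _row_band(A, B, start, stop):
    """Compute rows start..stop-1 of the product A * B."""
    n, p = len(B), len(B[0])
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(p)]
            for i in range(start, stop)]


def parallel_matmul(A, B, workers=2):
    """Split the rows of A into bands and multiply each band in its own task."""
    m = len(A)
    if len(A[0]) != len(B):
        raise ValueError("columns of A must equal rows of B")

    band = -(-m // workers)  # ceiling division: rows per worker
    ranges = [(s, min(s + band, m)) for s in range(0, m, band)]

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in submission order, so the bands can
        # simply be concatenated; each task writes only to its own rows.
        parts = pool.map(lambda r: _row_band(A, B, r[0], r[1]), ranges)
        return [row for part in parts for row in part]


A = [[1, 2], [3, 4], [5, 6]]            # 3×2
B = [[7, 8, 9, 10], [11, 12, 13, 14]]   # 2×4

print(parallel_matmul(A, B))
# [[29, 32, 35, 38], [65, 72, 79, 86], [101, 112, 123, 134]]
```

Explicit synchronization becomes necessary only when several workers update the same elements of the result, for example when the inner (summation) dimension is split across workers.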

Final Review

Matrix multiplication is a powerful tool that rewards a solid understanding of the underlying mathematical principles. By grasping size and dimensionality, the main algorithms, the numerical pitfalls, and the parallelization strategies, you’ll be equipped to tackle a wide range of matrix multiplication problems. Whether you’re a student, researcher, or engineer, mastering matrix multiplication will open doors to new and exciting applications in science, engineering, and beyond. So keep practicing, and soon you’ll be a matrix multiplication master.

FAQs

Q: What is the difference between matrix multiplication and matrix addition?

A: Matrix multiplication is a way of combining the elements of two matrices to produce a new matrix, whereas matrix addition is a simple operation that involves adding corresponding elements of two matrices.

Q: Can I multiply two matrices if they have different dimensions?

A: Yes, provided the number of columns in the first matrix equals the number of rows in the second. An m × n matrix can be multiplied by an n × p matrix, and the result is an m × p matrix, which need not be square. If the inner dimensions do not match, the product is undefined.

Q: What is the dot product in matrix multiplication?

A: The dot product multiplies corresponding elements of two equal-length vectors and sums the products to give a single scalar. In matrix multiplication, each entry of the product is the dot product of a row of the first matrix with a column of the second.
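As a brief illustration, the following Python snippet computes one entry of a product as a dot product, using a 3×2 matrix A and a 2×4 matrix B. The helper name `dot` is chosen for this example.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


A = [[1, 2], [3, 4], [5, 6]]            # 3×2
B = [[7, 8, 9, 10], [11, 12, 13, 14]]   # 2×4

first_row_of_A = A[0]                        # [1, 2]
first_column_of_B = [row[0] for row in B]    # [7, 11]

# The top-left entry of AB: 1*7 + 2*11
print(dot(first_row_of_A, first_column_of_B))  # 29
```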