Outlier detection is a crucial aspect of data analysis, especially in large datasets, because it identifies data points that deviate significantly from the norm.

The presence of outliers can have a significant impact on the accuracy and reliability of statistical models. Therefore, it is essential to employ effective methods for detecting outliers, such as the Z-score, Modified Z-score, and Density-based methods.

Techniques for detecting anomalies in large datasets

Detecting anomalies in large datasets is a crucial task in various fields such as finance, healthcare, and marketing. Anomalies or outliers are data points that are significantly different from the rest of the data. Identifying and understanding these anomalies is essential to prevent fraudulent transactions, diagnose diseases, and optimize business processes. There are several techniques for detecting anomalies in large datasets, each with its strengths and limitations.

Z-score Method

The Z-score, also known as the standard score, measures how many standard deviations a data point lies from the mean. A Z-score with a large absolute value indicates that the data point is far from the mean, and a Z-score near zero indicates that it is close to the mean. A common rule of thumb flags points whose absolute Z-score exceeds 3. The Z-score method is simple to implement and is widely used in many applications.

Z = (Xi – μ) / σ

Where Z is the Z-score, Xi is the individual data point, μ is the mean of the dataset, and σ is the standard deviation.

The main strength of the Z-score method is that it is easy to understand and implement. However, the mean and standard deviation are themselves pulled by extreme values, so a large outlier inflates σ and can mask itself or other outliers. The method also assumes roughly normal data and is less reliable for skewed distributions.
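As a minimal sketch of the formula above, the following Python example computes Z-scores with the standard library and flags points above a threshold. The function names, the sample `readings`, and the threshold of 3 are illustrative assumptions, not part of any particular library.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Return the Z-score of each value: (x - mean) / standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation
    if sigma == 0:
        return [0.0 for _ in values]  # all values identical: nothing deviates
    return [(x - mu) / sigma for x in values]


def z_score_outliers(values, threshold=3.0):
    """Return the values whose absolute Z-score exceeds the threshold."""
    return [x for x, z in zip(values, z_scores(values)) if abs(z) > threshold]


# Hypothetical sample data with one obvious extreme value.
readings = [10, 12, 11, 13, 12, 11, 10, 12, 13, 11, 12, 95]
print(z_score_outliers(readings))  # [95]
```

In this sample the extreme value only just clears the threshold (its Z-score is about 3.3), because it also inflates the standard deviation it is measured against.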

Modified Z-score Method

The Modified Z-score method is an extension of the Z-score method. It uses the median and the median absolute deviation (MAD) instead of the mean and standard deviation. Because the median and the MAD are barely affected by a few extreme values, this method is less sensitive to outliers and non-normal distributions than the Z-score method.

Modified Z-score = 0.6745 * (Xi – median) / MAD

Where Xi is the individual data point, median is the median of the dataset, and MAD is the median of the absolute deviations |Xi – median|. The constant 0.6745 makes the score comparable to a standard Z-score for normally distributed data, and points with an absolute score above 3.5 are commonly flagged as outliers.

The Modified Z-score method is more robust than the Z-score method but can be more computationally intensive.
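The sketch below illustrates the modified Z-score in its usual form, which scales by the median absolute deviation (MAD). The function names, sample data, and the 3.5 cutoff are assumptions chosen for illustration.

```python
from statistics import median


def modified_z_scores(values):
    """Return 0.6745 * (x - median) / MAD for each value."""
    med = median(values)
    mad = median([abs(x - med) for x in values])  # median absolute deviation
    if mad == 0:
        # More than half the values equal the median; the score is undefined.
        raise ValueError("MAD is zero; modified Z-score is undefined")
    return [0.6745 * (x - med) / mad for x in values]


def modified_z_outliers(values, threshold=3.5):
    """Return the values whose absolute modified Z-score exceeds the threshold."""
    scores = modified_z_scores(values)
    return [x for x, s in zip(values, scores) if abs(s) > threshold]


# Hypothetical sample data with one obvious extreme value.
readings = [10, 12, 11, 13, 12, 11, 10, 12, 13, 11, 12, 95]
print(modified_z_outliers(readings))  # [95]
```

Here the extreme value scores about 56, far above the cutoff, because the median and MAD are computed almost entirely from the ordinary values.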

Density-based Methods

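Density-based methods, such as DBSCAN (Density-Based Spatial Clustering of Applications with Noise), group points that lie in dense regions and label points in sparse regions as noise. Unlike the Z-score and Modified Z-score methods, they make no assumption about the shape of the distribution and work on multi-dimensional data, although distance-based density estimates become less reliable as the number of dimensions grows.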

DBSCAN algorithm:

1. Choose an epsilon value that defines the radius of the neighborhood around each point.

2. Choose a minimum points value that determines the minimum number of points required to form a dense region.

3. Create a data structure that stores the points in the dataset, ideally one that supports fast neighborhood queries.

4. For each unvisited point in the dataset:

a. Find all neighbors of the point within the epsilon distance.

b. If the number of neighbors is greater than or equal to the minimum points, then the point is a core point. Start a cluster from it and expand the cluster through the neighbors that are also core points.

c. If the number of neighbors is less than the minimum points, then the point is provisionally labeled as noise. It becomes a border point if it later turns out to lie within epsilon of a core point.

5. Points still labeled as noise after every point has been visited are the outliers.

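Density-based methods can handle irregular cluster shapes that the Z-score and Modified Z-score methods cannot, but they are more computationally intensive and their results depend on the choice of epsilon and minimum points.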
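To make the DBSCAN procedure concrete, here is a small pure-Python sketch. It uses a brute-force neighbor search, so it is only suitable for small datasets. The function names, the sample points, and the parameter values are illustrative assumptions. Following the usual convention, a point counts as its own neighbor.

```python
from math import dist

NOISE = -1


def region_query(points, i, eps):
    """Indices of all points within eps of points[i] (including i itself)."""
    return [j for j, q in enumerate(points) if dist(points[i], q) <= eps]


def dbscan(points, eps, min_pts):
    """Return one label per point: 0, 1, ... for clusters, -1 for noise."""
    labels = [None] * len(points)  # None means "not visited yet"
    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        neighbors = region_query(points, i, eps)
        if len(neighbors) < min_pts:
            labels[i] = NOISE  # provisional: may become a border point later
            continue
        cluster += 1  # points[i] is a core point: start a new cluster
        labels[i] = cluster
        queue = [j for j in neighbors if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point reachable from a core point
            if labels[j] is not None:
                continue  # already assigned to a cluster
            labels[j] = cluster
            j_neighbors = region_query(points, j, eps)
            if len(j_neighbors) >= min_pts:
                queue.extend(j_neighbors)  # j is also a core point: keep expanding
    return labels


# Two hypothetical dense groups plus one isolated point.
points = [
    (1.0, 1.0), (1.2, 0.9), (0.9, 1.1), (1.1, 1.2),
    (5.0, 5.0), (5.1, 4.9), (4.9, 5.2), (5.2, 5.1),
    (9.0, 0.5),
]
labels = dbscan(points, eps=0.5, min_pts=3)
print(labels)  # [0, 0, 0, 0, 1, 1, 1, 1, -1]
```

The isolated point at (9.0, 0.5) receives the label -1, which is how this sketch marks outliers.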

Real-world Applications and Examples

The techniques discussed above are commonly used in various real-world applications such as:

| Domain | Method | Description | Example |
|---|---|---|---|
| Fraud Detection | Z-score and Modified Z-score methods | Identify transactions with unusual patterns in a bank’s dataset, such as a large transaction from an unknown location. | A customer’s bank account shows a $10,000 transaction from an unknown location, far above the account’s usual monthly activity. |
| Healthcare | Density-based methods | Identify patients with unusual medical histories, such as a patient with multiple diagnoses but no apparent cause. | A patient has multiple diagnoses but no apparent cause, requiring further investigation by healthcare professionals. |
| Marketing | Z-score and Modified Z-score methods | Identify customers with unusual purchase patterns, such as a customer who purchases a product frequently but returns it often. | A customer purchases a product weekly but repeatedly returns it, which may indicate fraud. |

Identifying unusual patterns in time-series data

When working with time-series data, identifying unusual patterns can be crucial in understanding trends, detecting anomalies, and making informed decisions. This can be particularly challenging in scenarios where data is noisy, incomplete, or exhibits complex patterns.

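Time-series data consists of sequences of data points measured at successive, usually regular, time intervals. Because each value depends on trend, seasonality, and recent history, a point can be anomalous for its time context even when its value is unremarkable overall. Various algorithms and techniques can be employed to detect anomalies in time-series data.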

Comparing statistical and machine learning approaches

Statistical methods are often used for identifying outliers in time-series data due to their simplicity and interpretability. Common statistical techniques include the use of mean, median, and standard deviation to detect anomalies. However, statistical methods may not effectively capture complex patterns or relationships within the data.
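One simple statistical approach can be sketched as a rolling Z-score: each value is compared with the mean and standard deviation of the window that precedes it. The function name, window length, threshold, and sample series below are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def rolling_z_anomalies(series, window=5, threshold=3.0):
    """Return indices whose value is far from the mean of the preceding window."""
    anomalies = []
    for t in range(window, len(series)):
        history = series[t - window:t]
        mu = mean(history)
        sigma = stdev(history)  # sample standard deviation of the window
        if sigma == 0:
            continue  # flat window: the Z-score is undefined, skipped in this sketch
        if abs(series[t] - mu) / sigma > threshold:
            anomalies.append(t)
    return anomalies


# Hypothetical sensor readings with a single spike at index 7.
series = [20.1, 20.3, 19.9, 20.2, 20.0, 20.4, 20.1, 27.5, 20.2, 20.0, 19.8, 20.3]
print(rolling_z_anomalies(series))  # [7]
```

Note that once the spike enters the history window it inflates the standard deviation for the next few steps, so nearby anomalies could be missed. Using a rolling median and MAD instead is one way to reduce that effect.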

Machine learning algorithms, on the other hand, can capture more complex structure when identifying outliers in time-series data. Techniques such as regression, clustering, and neural networks can uncover intricate relationships and patterns within the data. Forecasting models such as the autoregressive integrated moving average (ARIMA), which is a classical statistical model rather than a machine learning algorithm, are often used alongside them, with large forecast errors treated as anomalies.

However, machine learning algorithms often require extensive computational resources and may be prone to overfitting, especially with complex data sets.

Machine Learning Models for Anomaly Detection in Time-Series Data

Below are two machine learning models and their architectures that can be used for anomaly detection in time-series data.

### 1. LSTM Networks

LSTM (Long Short-Term Memory) networks are a type of Recurrent Neural Network (RNN) known for their ability to learn and model long-term dependencies in time-series data. They can be used for predicting future values and identifying anomalies in time-series data.

Architecture Overview:

– Input Layer: Time-series data

– LSTM Layer: One or more LSTM layers

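– Output Layer: Linear layer for predicting the next value, or Softmax layer for classifying a window as normal or anomalous; with a linear output, a large prediction error indicates an anomaly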

Data Preprocessing Requirements:

– Normalize and scale data

– Window size selection
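The window size determines how much history the network sees for each prediction. As a rough sketch, the helper below splits a series (assumed to be already scaled) into input windows paired with the next value, which is a common way to frame one-step-ahead prediction. The name `make_windows` and the sample data are hypothetical.

```python
def make_windows(series, window_size):
    """Split a series into (input window, next value) pairs."""
    pairs = []
    for start in range(len(series) - window_size):
        window = series[start:start + window_size]
        target = series[start + window_size]  # the value the model should predict
        pairs.append((window, target))
    return pairs


# Hypothetical, already scaled series.
scaled = [0.0, 0.25, 0.5, 0.75, 1.0]
for window, target in make_windows(scaled, window_size=3):
    print(window, "->", target)
# [0.0, 0.25, 0.5] -> 0.75
# [0.25, 0.5, 0.75] -> 1.0
```

A larger window gives the model more context but yields fewer training pairs and increases training cost, so the window size is usually tuned to the seasonality of the data.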

### 2. Autoencoder

Autoencoders are neural networks that learn to compress and reconstruct input data, often used for dimensionality reduction and anomaly detection. They can be particularly useful when dealing with high-dimensional time-series data.

Architecture Overview:

– Input Layer: Time-series data

– Encoder Layer: Compressing data into a lower-dimensional representation

– Decoder Layer: Reconstructing compressed data

– Output Layer: Linear layer that outputs the reconstructed input; a large reconstruction error indicates an anomaly

Data Preprocessing Requirements:

– Normalize and scale data

– Feature selection (depending on the complexity of the data)

Real-World Applications of Outlier Detection in Time-Series Data

Outlier detection has numerous applications in real-world scenarios where time-series data plays a crucial role.

For instance, in finance, identifying outliers in stock prices can help anticipate market fluctuations, which can lead to significant investment losses when left undetected.

In weather forecasting, identifying unusual patterns in temperature and precipitation data can help predict extreme weather events, enabling early warnings and evacuation plans. In the context of demand forecasting, identifying anomalies in sales data can help retailers adjust their inventory and pricing strategies.

For example, a company manufacturing and selling high-temperature thermometers might observe unusual spikes in its sales data on the Friday after Thanksgiving in the United States. This can help identify trends related to Black Friday shopping and enable the company to prepare for the expected sales surge.

By leveraging both statistical and machine learning techniques, organizations can unlock the full potential of their time-series data, staying ahead of market fluctuations and other events through timely detection and analysis of unusual patterns.

Understanding the role of outliers in decision-making processes

When dealing with large datasets, outliers can significantly influence decision-making processes in various fields, including business, healthcare, and social sciences. These unusual data points can either provide valuable insights or lead to inaccurate conclusions, depending on how they are managed and utilized. Effective outlier detection and analysis can greatly enhance the accuracy of data-driven decisions, but improper handling can result in flawed conclusions.

In various fields, outliers can impact decision-making in numerous ways. For instance, in healthcare, identifying outliers can help doctors and researchers understand the root causes of anomalies in patient data, facilitating the development of more effective treatments and personalized care plans.

Potential impact of outliers on data-driven decisions

The presence of outliers can significantly alter the results of data analysis, potentially leading to both positive and negative outcomes.

Benefits:

- Improved accuracy: Detecting outliers exposes data errors and genuinely unusual cases so they can be corrected or modeled explicitly, thereby improving the accuracy of data-driven decisions.

- Enhanced understanding: Analyzing outliers can provide deeper insights into the underlying patterns and relationships within a dataset.

- Early warning systems: Detecting outliers can serve as an early warning system for potential issues or anomalies, enabling proactive measures to be taken.

Drawbacks:

- Biased conclusions: Ignoring outliers can lead to biased conclusions and inaccurate decisions.

- Inaccurate predictions: Failing to account for outliers can result in inaccurate predictions and poor forecast performance.

- Missed opportunities: Neglecting outliers can cause valuable insights and potential opportunities to be overlooked.

Examples of outlier detection in decision-making processes

Let’s consider a hypothetical business scenario to illustrate the benefits of using outlier detection in decision-making processes.

A Business Case: Identifying High-Risk Customers

Imagine a retail business with a large customer database. By analyzing customer purchase history and behavior, the company aims to identify high-risk customers who are more likely to default on payments.

| Scenario | Goal | Method | Outcome |
|---|---|---|---|
| Identifying high-risk customers | Predict customer default risk | Outlier detection and analysis of customer purchase history | Identification of high-risk customers for targeted prevention measures |
| Enhancing marketing campaigns | Personalized marketing messages | Anomaly-based segmentation of customer data | Increased relevance and effectiveness of marketing campaigns |
| Streamlining operations | Efficient supply chain management | Outlier detection in sales and inventory data | Improved forecasting and inventory management |
| Improving customer service | Enhanced customer experience | Anomaly-based identification of customer complaints | Timely resolution of customer issues, improved satisfaction ratings |

By leveraging outlier detection and analysis, companies can make more informed decisions, identify potential issues early on, and enhance their overall performance.

By understanding the role of outliers in decision-making processes, businesses and organizations can make better use of their data and drive more informed decision-making.

Best Practices for Handling and Visualizing Outliers

When it comes to handling and visualizing outliers, there are several key steps to follow in order to effectively identify and understand these unusual data points. Outliers can have a significant impact on the accuracy of machine learning models and statistical analysis, so it’s essential to address them accordingly.

Data Cleaning and Transformation

Data cleaning and transformation are crucial steps in preparing your data for outlier detection and visualization. This involves checking for missing values, outliers, and data inconsistencies, as well as transforming your data into a suitable format for analysis. For example, if your numerical variables are measured on very different scales, you may need to perform data normalization or standardization to ensure that all variables are on the same scale, and categorical variables need to be encoded as numbers before distance-based methods can use them.

Data Normalization and Standardization

Data normalization and standardization are common techniques used to transform data into a more suitable format for analysis. Normalization involves scaling values to a common range, usually between 0 and 1, while standardization involves scaling values to have a mean of 0 and a standard deviation of 1. Both make variables measured on different scales more comparable. Neither removes outliers, however: min-max normalization is itself sensitive to them, because a single extreme value stretches the range and compresses all other values.
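The difference between the two transformations can be illustrated with a short sketch. The function names and sample values are assumptions; the sample includes one extreme value to show how it affects min-max scaling.

```python
from statistics import mean, pstdev


def min_max_normalize(values):
    """Scale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant input: no spread to scale
    return [(x - lo) / (hi - lo) for x in values]


def standardize(values):
    """Scale values to mean 0 and (population) standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(x - mu) / sigma for x in values]


# Hypothetical values with one extreme point.
values = [2, 4, 6, 8, 100]
normalized = min_max_normalize(values)
standardized = standardize(values)

print([round(v, 3) for v in normalized])
print([round(v, 3) for v in standardized])
```

With this sample, the four ordinary values all land below 0.1 after min-max normalization because the extreme value defines the top of the range.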

Feature Selection and Dimensionality Reduction

Feature selection and dimensionality reduction are techniques used to reduce the number of variables in your dataset. This can help to prevent overfitting and improve the stability of your models. There are several techniques available, including correlation analysis, mutual information, and principal component analysis.

Visualizing Outliers

Visualizing outliers is an essential step in understanding their impact on your data. There are several types of visualizations that can be used to highlight outliers, including box plots, scatter plots, and heatmaps. Each of these visualizations has its strengths and limitations, which are discussed below:

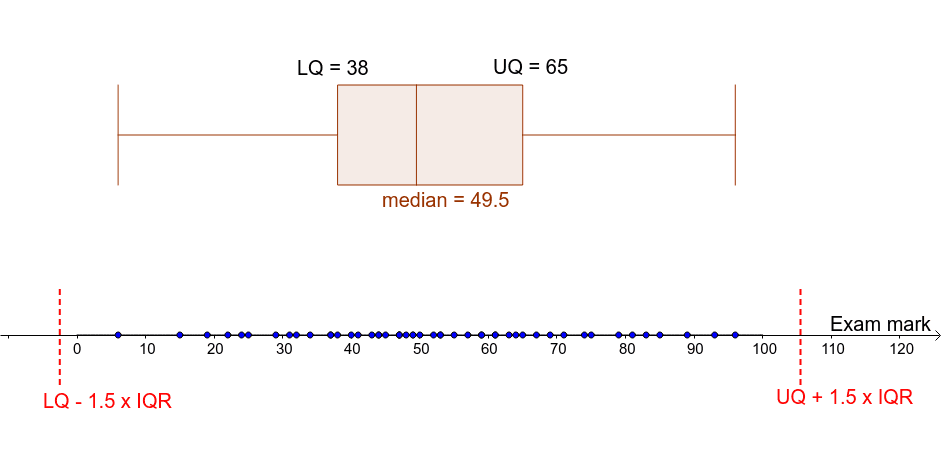

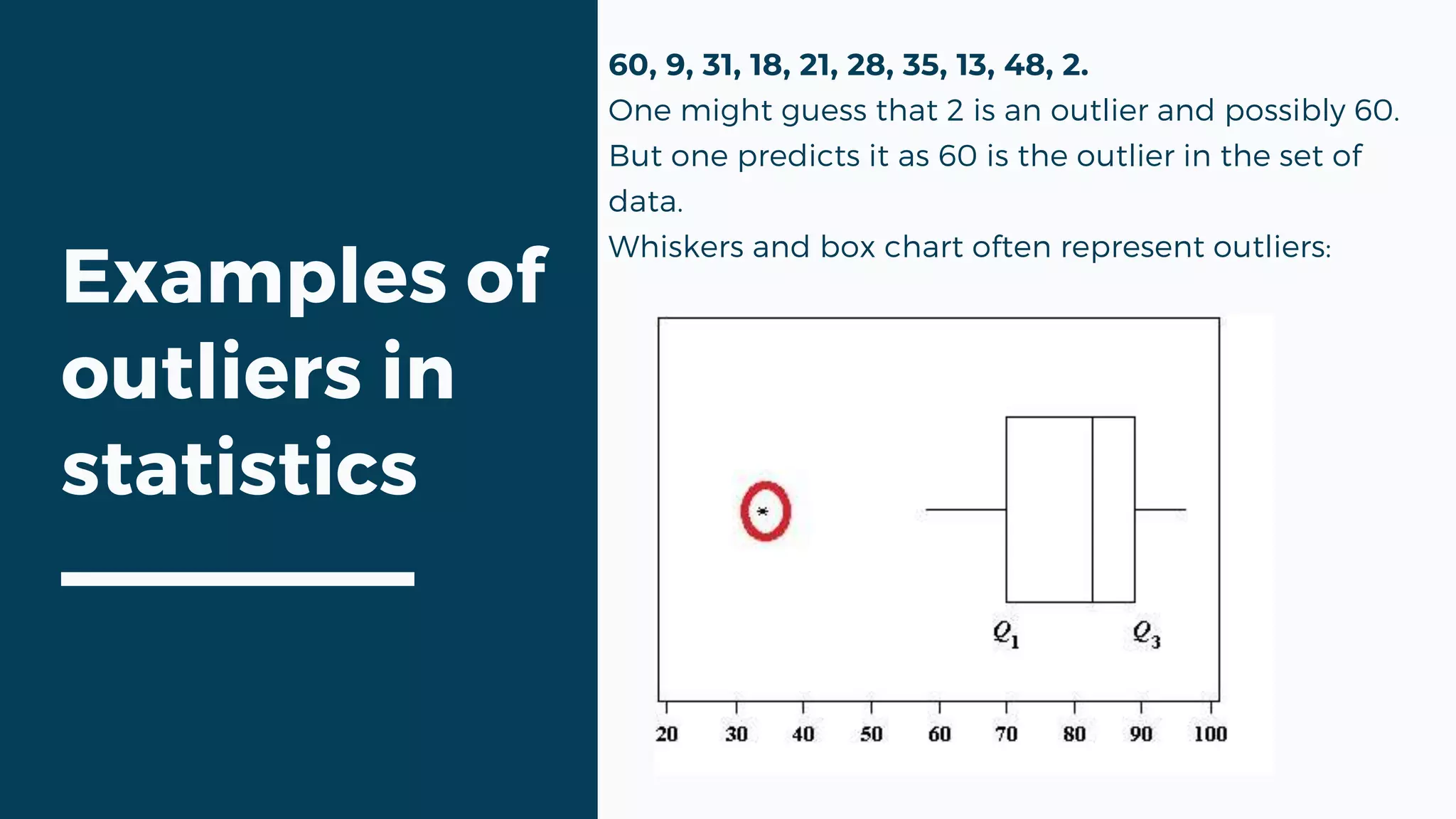

Box Plots

Box plots are a popular visualization technique for highlighting outliers. They consist of a box that shows the interquartile range (IQR), which is the difference between the 75th and 25th percentiles, with whiskers extending from the box. Outliers are typically represented as individual points beyond the whiskers, which by convention reach at most 1.5 times the IQR below the 25th percentile and above the 75th percentile.
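The rule that box plots conventionally use to mark outliers can be sketched numerically as follows. The function name, the multiplier `k=1.5`, and the sample data are illustrative; plotting libraries compute quartiles in slightly different ways, so the exact fences may differ from those of a given tool.

```python
from statistics import quantiles


def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR outside the quartiles."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr  # the fences
    return [x for x in values if x < lower or x > upper]


# Hypothetical sample data with one obvious extreme value.
readings = [10, 12, 11, 13, 12, 11, 10, 12, 13, 11, 12, 95]
print(iqr_outliers(readings))  # [95]
```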

Scatter Plots

Scatter plots are a useful visualization technique for highlighting relationships between variables. They consist of a series of points that represent the relationship between two variables. Outliers can be clearly identified as points that fall far away from the main cluster.

Heatmaps

Heatmaps are a useful visualization technique for highlighting patterns and relationships in large datasets. They consist of a matrix of values that are represented as colors. Outliers appear as cells whose color stands out from the rest of the data.

Visualization Tools

There are several visualization tools available that can be used to visualize outliers. Three popular tools are Tableau, Power BI, and ggplot2.

Tableau

Tableau is a popular data visualization tool that allows users to connect to a wide range of data sources and create interactive visualizations. It has a user-friendly interface and a wide range of visualization options, including box plots, scatter plots, and heatmaps.

Power BI

Power BI is a business analytics service by Microsoft that allows users to connect to a wide range of data sources and create interactive visualizations. It has a user-friendly interface and a wide range of visualization options, including box plots, scatter plots, and heatmaps.

ggplot2

ggplot2 is a popular data visualization library in R that allows users to create a wide range of visualizations. Unlike Tableau and Power BI, it is driven by code rather than a graphical interface and builds static plots from layered components. It supports a wide range of visualization options, including box plots, scatter plots, and heatmaps.

Best Practices

Here are some best practices for handling and visualizing outliers:

- Use a combination of data cleaning and transformation techniques to prepare your data for outlier detection and visualization.

- Use data normalization or standardization to put your variables on a comparable scale before analysis.

- Use feature selection and dimensionality reduction techniques to reduce the number of variables in your dataset.

- Use visualization techniques like box plots, scatter plots, and heatmaps to highlight outliers and understand their impact on your data.

- Use visualization tools like Tableau, Power BI, and ggplot2 to create these visualizations.

- Interpret your results in the context of your research question or business problem.

Concluding Remarks

In conclusion, finding outliers in datasets is a complex task that requires the use of various techniques and tools. By understanding the strengths and limitations of each method, data analysts can select the most appropriate approach for their specific use case. Remember, outlier detection is a critical step in ensuring the accuracy and reliability of statistical models.

Question Bank

Q: What is an outlier, and how is it detected?

An outlier is a data point that lies outside the typical range of values in a dataset. It can be detected using various methods, including statistical tests, machine learning algorithms, and visual inspection.

Q: What is the difference between an extreme value and an outlier?

An extreme value is a data point that has an unusually large or small value compared to the rest of the dataset, but it may not necessarily be an outlier if it lies within the expected range of values. An outlier, on the other hand, is a data point that lies outside the expected range of values.

Q: How can outliers affect the accuracy of statistical models?

Outliers can significantly impact the accuracy of statistical models by skewing the results and making it difficult to draw reliable conclusions. It is essential to detect and handle outliers carefully to ensure the accuracy and reliability of statistical models.

Q: Can outliers be beneficial in certain situations?

Yes, outliers can be beneficial in certain situations, such as in the detection of rare events or in the identification of unusual patterns in data. However, they can also be indicators of errors or anomalies in the data, which need to be carefully examined and addressed.

Q: How can I visualize outliers in a dataset?

You can visualize outliers in a dataset using various types of plots, such as box plots, scatter plots, and histograms. These plots can help you identify data points that lie outside the expected range of values and understand the distribution of the data.