Reading a micrometer correctly is a core skill wherever precise measurement matters. The micrometer is a standard tool in engineering, manufacturing, and quality control, and accurate results depend on understanding how it works and how to use it.

This article covers the essential steps of reading a micrometer and its applications in different fields. Whether you are a beginner or an experienced user, it moves from the basics to more advanced techniques so that you can work with micrometers efficiently and with confidence.

Understanding the Fundamentals of Micrometers

Micrometers are precision measuring instruments used to accurately measure small dimensions. They find extensive applications in various industries such as engineering, manufacturing, and quality control. In this section, we will delve into the basic working principles, main components, and types of micrometers available in the market.

Basic Working Principles of Micrometers

A micrometer is a precision measuring instrument built around a rigid frame that holds a fixed anvil at one end and a movable, precision-ground spindle at the other. The workpiece sits between the two flat measuring faces. Turning the thimble rotates a fine screw that advances the spindle toward the anvil, so the distance between the anvil and the spindle changes in direct proportion to the amount of rotation.

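The micrometer works on the principle of a screw thread: the spindle advances by a fixed amount, the pitch of the thread, for each full rotation of the thimble. On a typical metric micrometer the pitch is 0.5 mm and the thimble carries 50 divisions, so each division represents 0.01 mm. On a typical inch micrometer the screw has 40 threads per inch and the thimble carries 25 divisions, so each division represents 0.001 in. The accuracy of the instrument depends on the accuracy of the screw thread and on the flatness and parallelism of the measuring faces.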
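The relationship between thread pitch and scale resolution can be sketched in a few lines. This is a minimal illustration, assuming a 0.5 mm pitch and a 50-division thimble (a common metric design); the function names are hypothetical.

```python
def travel_per_division(pitch_mm: float, thimble_divisions: int) -> float:
    """Spindle travel represented by one thimble graduation, in millimetres."""
    if pitch_mm <= 0 or thimble_divisions <= 0:
        raise ValueError("pitch and division count must be positive")
    return pitch_mm / thimble_divisions


def spindle_travel(pitch_mm: float, revolutions: float) -> float:
    """Total spindle travel for a given number of thimble revolutions."""
    return pitch_mm * revolutions


# Assumed values: 0.5 mm pitch, 50 thimble divisions.
print(travel_per_division(0.5, 50))  # 0.01
print(spindle_travel(0.5, 2.0))      # 1.0
```

Two full turns of the thimble therefore move the spindle 1 mm, and a single graduation corresponds to 0.01 mm.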

Micrometers are available in various types, including inside micrometers, outside micrometers, and depth micrometers. The choice of micrometer depends on the specific application and the type of measurement to be taken.

Main Components of a Micrometer

A micrometer consists of several main components, each with a specific function.

- The Anvil: The anvil is the stationary measuring face, fixed to the frame and ground flat to a fine surface finish. The workpiece rests against the anvil while the spindle closes on it.

- The Spindle: The spindle is the moving measuring face, also ground flat to a fine surface finish. It is connected to the thimble and advances by the pitch of the screw thread for each rotation of the thimble.

- The Thimble: The thimble is the rotating barrel that the operator turns to advance or retract the spindle. Its bevelled edge carries the circumferential scale used to read fractions of a revolution.

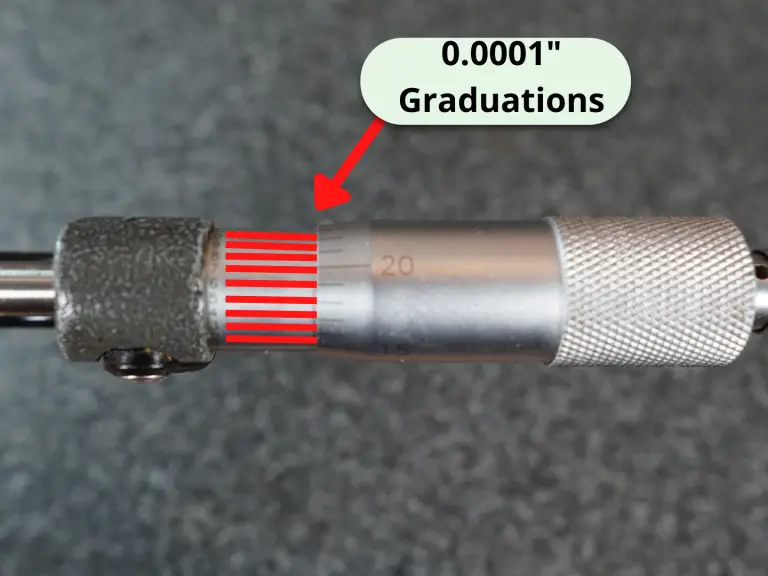

- The Graduated Scale: The graduated scales indicate the distance between the anvil and the spindle. The sleeve (barrel) scale is a linear scale that counts the revolutions of the screw, and the thimble scale subdivides each revolution.

Types of Micrometers

Micrometers are available in several types, each with specific applications and features.

- Inside Micrometers: Inside micrometers are used to measure the internal dimensions of objects, such as the diameters of holes and bores. Their measuring faces point outward so that they contact opposite walls of the feature.

- Outside Micrometers: Outside micrometers are used to measure the external dimensions of objects, such as diameters, lengths, and thicknesses. The workpiece is held between the fixed anvil and the moving spindle.

- Depth Micrometers: Depth micrometers are used to measure the depth of recesses, slots, and holes. A flat base rests on the reference surface while the measuring rod extends down to the bottom of the feature.

- Digital Micrometers: Digital micrometers show the measurement on an electronic display instead of requiring the operator to read the sleeve and thimble scales, which reduces reading errors. They are often used where high precision and fast, repeatable readings are required.

Calibration of Micrometers

Calibration of micrometers is essential to ensure accurate measurements. Calibration involves comparing the micrometer's readings with a reference standard, such as a precision gauge block, to confirm that the micrometer is within its specified accuracy limits.

A calibration routine for a micrometer should include the following steps:

- Compare the micrometer's readings with a reference standard, such as a precision gauge block, at several points across its measuring range.

- Check the micrometer for any signs of wear or damage.

- Verify that every deviation falls within the specified accuracy limits, and adjust the micrometer or withdraw it from service if it does not.
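The comparison step in this routine can be sketched as follows. It is a minimal illustration rather than a formal calibration procedure: the gauge block sizes, the readings, and the 0.004 mm tolerance are hypothetical values chosen for the example, and the real limit should come from the micrometer's specification.

```python
def check_against_gauge_blocks(pairs, tolerance_mm):
    """Compare micrometer readings with gauge block nominal sizes.

    pairs: iterable of (nominal_mm, measured_mm) tuples.
    Returns a list of (nominal_mm, error_mm, within_tolerance) tuples.
    """
    results = []
    for nominal_mm, measured_mm in pairs:
        error_mm = measured_mm - nominal_mm
        results.append((nominal_mm, error_mm, abs(error_mm) <= tolerance_mm))
    return results


# Hypothetical readings taken on three gauge blocks.
readings = [(5.0, 5.001), (10.0, 10.002), (25.0, 25.006)]
results = check_against_gauge_blocks(readings, tolerance_mm=0.004)

for nominal_mm, error_mm, ok in results:
    status = "OK" if ok else "OUT OF TOLERANCE"
    print(f"{nominal_mm:6.3f} mm  error {error_mm:+.3f} mm  {status}")

print("Micrometer passes:", all(ok for _, _, ok in results))
```

With these example values the 25 mm check fails, so the micrometer as a whole would not pass.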

Precautions When Using Micrometers

When using micrometers, it is essential to follow some precautions to ensure accurate measurements and safe operation.

- Ensure that the micrometer is properly calibrated and meets the specified accuracy limits.

- Use the micrometer in a well-lit area with minimal distractions.

- Ensure that the object being measured is properly aligned with the micrometer.

- Use the micrometer with caution and follow the manufacturer’s instructions.

Accurate measurements are crucial in many industries, such as engineering, manufacturing, and quality control. The choice of micrometer depends on the specific application and the type of measurement to be taken. Ensuring proper calibration and following precautions when using micrometers are essential for accurate measurements and safe operation.

Setting Up and Adjusting Micrometers

Proper calibration and adjustment of micrometers are crucial for obtaining accurate measurements. A micrometer's precision is only as good as its calibration, and even minor errors can significantly impact the results. This section discusses the importance of proper calibration, step-by-step procedures for setting up and adjusting micrometers, and common calibration methods.

Importance of Proper Calibration

Proper calibration ensures that the micrometer provides accurate and reliable measurements. Calibration involves adjusting the micrometer to ensure that its measurements correspond to the actual dimensions of the objects being measured. If a micrometer is not properly calibrated, its readings may be off by significant margins, leading to errors and potentially costly mistakes in engineering, manufacturing, and other industries that rely on precise measurements.


Step-by-Step Process of Setting Up and Adjusting Micrometers

To set up and adjust a micrometer for precise readings, follow these steps:

- Ensure the micrometer is in good working condition. Check for any signs of wear, damage, or corrosion. Clean the micrometer and its parts, if necessary.

- Choose a micrometer whose measuring range covers the dimension. If the micrometer has interchangeable anvils, fit the one for the required range.

- Rest the anvil against the object being measured and ensure it is firmly seated.

- Slowly turn the thimble until the spindle just touches the object, ensuring that the object does not move.

- Apply the final measuring pressure with the ratchet stop or friction thimble, if fitted, rather than tightening the thimble directly. This keeps the measuring force consistent and avoids squeezing the object.

- Engage the spindle lock, if fitted, and read the measurement from the sleeve and thimble scales, taking into account any interchangeable anvil that has been fitted.
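The final reading step amounts to simple arithmetic. The sketch below assumes a typical metric micrometer whose sleeve is graduated in whole and half millimetres and whose thimble has 50 divisions of 0.01 mm each; the function name and arguments are hypothetical.

```python
def metric_reading(sleeve_mm: int, half_mm_visible: bool, thimble_division: int) -> float:
    """Combine sleeve and thimble scale values into one reading in millimetres.

    sleeve_mm: last whole-millimetre mark visible on the sleeve.
    half_mm_visible: True if a half-millimetre mark is visible past that mark.
    thimble_division: thimble graduation aligned with the index line (0 to 49).
    """
    if sleeve_mm < 0:
        raise ValueError("sleeve reading cannot be negative")
    if not 0 <= thimble_division < 50:
        raise ValueError("thimble division must be between 0 and 49")
    # Work in whole hundredths of a millimetre to avoid float rounding noise.
    hundredths = sleeve_mm * 100 + (50 if half_mm_visible else 0) + thimble_division
    return hundredths / 100


print(metric_reading(7, True, 28))   # 7.78
print(metric_reading(12, False, 5))  # 12.05
```

In the first example the sleeve shows 7 mm plus a visible half-millimetre mark, and the thimble adds 28 hundredths, giving 7.78 mm.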

Calibration Methods and Procedures

There are several calibration methods and procedures for micrometers, including:

| Method | Procedure | Advantages |
|---|---|---|
| NIST-Traceable Calibration | Using a NIST-traceable standard, calibrated to a national standard, to compare with the micrometer under test | High accuracy and reliability, widely accepted in industry |
| Compare Gauge Calibration | Comparing the micrometer under test to a known, calibrated gauge | |
| Calibration Certificates | Examining calibration certificates from the manufacturer or a third-party calibration laboratory to verify calibration status | Quick reference, relatively low cost |

According to the American Society for Testing and Materials (ASTM), “calibration is the comparison of a device under test to a standard reference device or standard reference value to determine its measurement accuracy.”

Techniques for Reading Micrometer Measurements

When taking precise measurements using a micrometer, several techniques must be employed to ensure accuracy. The process involves careful preparation, attention to detail, and understanding of the measuring instrument itself. A good micrometer operator must be able to read and interpret the measurements correctly, which requires a combination of skill and knowledge.

Focusing on the Measuring Surface

To take accurate measurements, pay attention to both the measuring faces and the scales. Make sure the anvil and spindle faces sit squarely on the surface being measured, because any tilt produces an incorrect reading. When reading the result, look straight at the scale so that your line of sight is perpendicular to the index line on the sleeve. Viewing the scale from an angle makes the thimble graduation appear to line up with the wrong mark, which is known as parallax error.

Avoiding Parallax Errors

Parallax errors occur when the scale is read from an angle, so that the thimble graduation appears to coincide with a different mark than it actually does. To avoid them, hold the micrometer so that your line of sight meets the index line at a 90-degree angle to the scale. Separately, make sure the measuring faces are square to the surface being measured; a micrometer that is tilted on the workpiece gives an incorrect reading no matter how carefully the scale is read.

Verifying Measurements

After taking a measurement, it is essential to verify it. The most direct check is to measure a reference of known size, such as a precision gauge or a certified dimension close to the dimension of interest, and compare the micrometer reading with the known value. If the two differ by more than the micrometer's stated accuracy, the micrometer may need to be adjusted or recalibrated.

Common Verification Techniques

There are several common verification techniques that can be used to check the accuracy of micrometer measurements. These techniques include:

- Using a precision gauge to measure a known dimension

- Checking the measurement against a calibration standard

- Verifying the measurement against a reference point, such as a known dimension or a precision gauge

- Repeating the measurement multiple times to ensure consistency and accuracy

These verification techniques enable operators to confirm the accuracy of micrometer measurements and ensure that the results are reliable and trustworthy.

Best Practices for Verification

To ensure accurate micrometer measurements, follow these best practices for verification:

- Use a precision gauge to measure a known dimension

- Check the measurement against a calibration standard

- Verify the measurement against a reference point, such as a known dimension or a precision gauge

- Repeat the measurement multiple times to ensure consistency and accuracy

- Document all measurements and verification results to ensure auditability and traceability

By following these best practices, micrometer operators can ensure accurate and reliable measurements, which are critical in many industries, such as precision engineering, scientific research, and quality control.

Precision and Accuracy in Micrometer Readings

Precision and accuracy are crucial factors in micrometer readings, as they directly affect how far the measurements can be trusted. Precision refers to how closely repeated measurements of the same dimension agree with one another, while accuracy refers to how close a measurement is to the true value. In other words, precision describes repeatability, whereas accuracy describes correctness. A micrometer with a zero error, for example, can be precise without being accurate.
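The distinction can be made concrete by measuring a reference of known size several times. In the sketch below, the mean offset from the reference stands in for accuracy and the sample standard deviation stands in for precision; the readings and the 10.000 mm reference are hypothetical example values.

```python
from statistics import mean, stdev


def bias_and_spread(readings_mm, reference_mm):
    """Return (bias, spread) for repeated readings of a known reference.

    bias: mean reading minus the reference value (an accuracy indicator).
    spread: sample standard deviation of the readings (a precision indicator).
    """
    if len(readings_mm) < 2:
        raise ValueError("at least two readings are needed")
    return mean(readings_mm) - reference_mm, stdev(readings_mm)


# Hypothetical repeated readings of a 10.000 mm gauge block.
bias, spread = bias_and_spread([10.01, 10.02, 10.03], reference_mm=10.0)
print(f"bias   {bias:+.3f} mm")   # bias   +0.020 mm
print(f"spread {spread:.3f} mm")  # spread 0.010 mm
```

A small spread with a large bias suggests a consistent offset such as a zero error, whereas a large spread points to technique or environmental effects.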

Key Factors Influencing Precision and Accuracy

There are several key factors that influence the precision and accuracy of micrometer readings. These include:

- The quality of the micrometer: A high-quality micrometer with precise mechanical components and a well-made calibration system is essential for accurate measurements.

- Calibration: Regular calibration of the micrometer is crucial to ensure that it remains accurate and precise over time.

- Environmental factors: Environmental factors such as temperature, humidity, and vibration can affect the accuracy and precision of micrometer readings.

- Operator skills: The skills and experience of the operator can significantly impact the accuracy and precision of micrometer readings.
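Of the environmental factors listed, temperature is the easiest to quantify, because a part grows or shrinks roughly linearly with temperature. The sketch below estimates that change using the linear expansion relation, length change = coefficient × length × temperature difference. The coefficient of 11.5e-6 per °C is an assumed, typical figure for steel, and 20 °C is the standard reference temperature for dimensional measurement; substitute the values for your own material.

```python
def thermal_growth_mm(length_mm: float, temp_c: float,
                      alpha_per_c: float = 11.5e-6,
                      reference_c: float = 20.0) -> float:
    """Estimate the change in length of a part relative to the reference temperature.

    alpha_per_c is an assumed expansion coefficient (typical for steel).
    """
    return alpha_per_c * length_mm * (temp_c - reference_c)


# A 100 mm steel part measured at 30 degrees C instead of 20 degrees C.
print(f"{thermal_growth_mm(100.0, 30.0):.4f} mm")  # 0.0115 mm
```

A change of about 0.01 mm is already as large as one thimble division on a typical metric micrometer. When the micrometer and the part are the same material and at the same temperature, much of this effect cancels, which is one reason to let both settle to room temperature before measuring.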

Consequences of Measurement Errors

Measurement errors can have severe consequences in a variety of applications, including engineering, manufacturing, and quality control. Some of the consequences of measurement errors include:

- Inaccurate product specifications: Measurement errors can lead to inaccurate product specifications, which can result in defective products and reduced customer satisfaction.

- Increased costs: Measurement errors can lead to increased costs due to rework, scrap, and wasted materials.

- Reduced productivity: Measurement errors can reduce productivity and efficiency in manufacturing processes.

- Safety risks: Measurement errors can lead to safety risks in applications such as construction, aerospace, and automotive.

Choosing the Right Micrometer for Specific Applications

Choosing the right micrometer for specific applications is critical to ensure accurate and precise measurements. The following factors should be considered when selecting a micrometer:

- Accuracy and precision: The micrometer should have high accuracy and precision to ensure reliable measurements.

- Measuring range: The micrometer should have a measuring range that is sufficient for the application.

- Readout type: The micrometer should have a readout, either mechanical sleeve and thimble scales or a digital display, that is easy to read and understand.

- Material: The micrometer should be made from high-quality materials that can withstand the rigors of repeated use.
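Matching the measuring range to the job can be sketched as a simple lookup. The example assumes outside micrometers supplied in 25 mm steps (0 to 25 mm, 25 to 50 mm, and so on) up to a hypothetical maximum of 300 mm; actual product ranges vary by manufacturer.

```python
def select_range(dimension_mm: float, step_mm: int = 25, max_mm: int = 300) -> tuple:
    """Return the (lower, upper) range in mm of a micrometer that covers the dimension.

    Assumes micrometers are available in consecutive steps of step_mm up to max_mm.
    """
    if not 0 <= dimension_mm <= max_mm:
        raise ValueError("dimension is outside the available ranges")
    lower = int(dimension_mm // step_mm) * step_mm
    if lower == max_mm:
        # The top of the largest range belongs to the last micrometer.
        lower -= step_mm
    return lower, lower + step_mm


print(select_range(37.5))  # (25, 50)
print(select_range(8.2))   # (0, 25)
```

A dimension that falls exactly on a boundary, such as 25 mm, is covered by two adjacent ranges; this sketch picks the upper one.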

Maintenance of Micrometers

Regular maintenance of micrometers is essential to ensure that they remain accurate and precise over time. Some of the key maintenance tasks include:

- Calibration: Micrometers should be calibrated regularly to ensure that they remain accurate and precise.

- Cleaning: Micrometers should be cleaned regularly to prevent contamination and corrosion.

- Storage: Micrometers should be stored in a dry, secure location to prevent damage and tampering.

- Inspection: Micrometers should be inspected regularly for signs of wear and tear.

Tips for Ensuring Precision and Accuracy

To ensure precision and accuracy in micrometer readings, the following tips can be followed:

- Use high-quality micrometers that are calibrated regularly.

- Use the correct measuring procedure to ensure accurate readings.

- Minimize environmental factors that can affect the accuracy and precision of micrometer readings.

- Use a stable and secure base for the micrometer to prevent vibration and movement.

- Take multiple readings and average them to ensure accurate results.

- Use a reference standard, such as a gauge block, to verify the accuracy and precision of the micrometer.

- Keep records of the micrometer's performance and maintenance.

Common Micrometer Calibration Errors

Common micrometer calibration errors include:

- Incorrect zero point: The micrometer may not read zero when the measuring faces are closed (or when set on its setting standard), so every measurement is offset by the same amount.

- Incorrect measuring range: The measuring range of the micrometer may be incorrect, leading to inaccurate measurements.

- Incorrect calibration procedure: The calibration procedure may be incorrect, leading to inaccurate or incomplete calibration.

Calibration Checks

Calibration checks can be performed to verify the accuracy and precision of micrometers. Some of the common calibration checks include:

- Checking the micrometer's zero point to ensure that it is correct.

- Checking the micrometer's measuring range to ensure that it is correct.

- Verifying that the micrometer's calibration is complete and accurate.
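When a zero check reveals a small offset that has not yet been adjusted out, readings can be corrected arithmetically. The sketch below uses one common sign convention, stated here as an assumption: the zero error is the value the micrometer shows when it should show zero, and it is subtracted from each raw reading.

```python
def corrected_reading(raw_mm: float, zero_error_mm: float) -> float:
    """Remove a known zero error from a raw micrometer reading.

    zero_error_mm: reading shown with the measuring faces closed
    (positive if the micrometer reads high, negative if it reads low).
    """
    # Round to the micrometre to suppress floating-point noise.
    return round(raw_mm - zero_error_mm, 3)


print(corrected_reading(12.47, 0.02))   # 12.45
print(corrected_reading(12.47, -0.01))  # 12.48
```

Correcting on paper is a stopgap; resetting the zero according to the manufacturer's instructions removes the need for it.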

Conclusion

Reading a micrometer requires attention to detail and practice to master. By following the steps outlined in this article and understanding the importance of precision, you will be well-equipped to tackle various tasks that involve measuring small dimensions. Remember to maintain your micrometer regularly and stay up-to-date with the latest techniques to ensure accurate readings and efficient work processes.

FAQ Resource

What is the primary purpose of a micrometer?

A micrometer is designed to measure small dimensions or distances with high accuracy, making it a crucial tool in various industries.

Can micrometers be used for measuring both internal and external dimensions?

Yes, micrometers can be used for measuring both internal (e.g., internal diameter) and external (e.g., external diameter) dimensions, depending on the type of micrometer and the application.

What are some common applications of micrometers?

Micrometers are commonly used in industries such as engineering, manufacturing, quality control, and research and development for various applications, including inspection, verification, and measurement of components and parts.

Can micrometers be damaged or compromised if not properly maintained?

Yes, micrometers require regular maintenance to ensure accurate measurements and prevent damage. Failure to maintain your micrometer can result in inaccurate readings, compromised quality, or even equipment failure.