Solving systems of equations has been a cornerstone of mathematics for centuries, and its applications in science, engineering, and economics continue to expand. This guide covers the main solution methods: graphical, substitution, elimination, and matrix-based techniques.

The fundamental concept of solving systems of linear equations, including the idea of linear dependence and independence, plays a crucial role in understanding real-world problems. The development of solution methods for systems of equations has been a gradual process, with key milestones and influential mathematicians contributing to the field.

Understanding the Basics of Solving Systems of Equations

Solving systems of linear equations is a fundamental concept in mathematics and has numerous applications in real-world problems, such as finding the intersection points of lines in physics, chemistry, and engineering.

The ability to solve systems of equations has been a crucial aspect of mathematical discovery for centuries. From the ancient Babylonians to modern-day mathematicians, the concept has evolved significantly. In this section, we will delve into the fundamental ideas of linear dependence and independence, exploring how they apply to real-world problems.

Linear Dependence and Independence

Linear dependence and independence are two fundamental concepts that play a crucial role in solving systems of equations. A set of vectors is said to be linearly independent if none of the vectors can be expressed as a linear combination of the others. Conversely, a set of vectors is said to be linearly dependent if at least one vector can be expressed as a linear combination of the others.

For instance, consider a system of two linear equations with two variables:

0.5x + 0.5y = 1

0.5x + 0.5y + 1 = 2

To understand the concept of linear dependence in this context, let's examine the equations more closely. Subtracting 1 from both sides of the second equation gives 0.5x + 0.5y = 1, which is exactly the first equation. The two equations are therefore linearly dependent: one is a scalar multiple of the other (here with multiplier 1). In other words, the two equations represent the same line, and the system has infinitely many solutions.
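As a quick numerical check of this example, the sketch below writes each equation in the form ax + by = c and tests whether the two coefficient triples are proportional. The helper name `are_dependent` and the tuple layout are illustrative assumptions, not part of any library.

```python
from fractions import Fraction

def are_dependent(eq1, eq2):
    """Return True if the triples (a, b, c) of two equations a*x + b*y = c
    are linearly dependent, i.e. every 2x2 cross product between them is zero."""
    a1, b1, c1 = (Fraction(v) for v in eq1)
    a2, b2, c2 = (Fraction(v) for v in eq2)
    return (a1 * b2 == a2 * b1) and (a1 * c2 == a2 * c1) and (b1 * c2 == b2 * c1)

# First equation: 0.5x + 0.5y = 1
first = ("1/2", "1/2", 1)
# Second equation: 0.5x + 0.5y + 1 = 2, with the constant moved to the right side
second = ("1/2", "1/2", 2 - 1)

print(are_dependent(first, second))       # True: both describe the same line
print(are_dependent(first, (1, -1, 0)))   # False: x - y = 0 is a different line
```

Exact fractions are used instead of floats so that the equality tests are not affected by rounding.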

History of Solution Methods for Systems of Equations

The concept of solving systems of equations dates back to ancient civilizations. The Babylonians, for instance, used geometric methods to solve systems of linear equations as early as 1800 BCE. However, it wasn’t until the 17th century that mathematicians began to develop algebraic methods for solving systems of equations.

Some notable mathematicians who contributed significantly to the development of solution methods for systems of equations include:

- René Descartes, who introduced the concept of coordinates and developed methods for solving systems of linear equations in his work “La Géométrie” in 1637.

- Leonhard Euler, who developed the method of substitution and elimination for solving systems of linear equations in the 18th century.

- Carl Friedrich Gauss, whose systematic use of elimination in the early 19th century gave Gaussian elimination its name; the method remains widely used for solving systems of linear equations today.

The development of solution methods for systems of equations has had a profound impact on various fields, including physics, engineering, and economics. The ability to solve systems of linear equations has enabled researchers and scientists to model and analyze complex phenomena, leading to breakthroughs in our understanding of the world.

Real-World Applications

Solving systems of linear equations has numerous real-world applications, including:

Physics: In physics, solving systems of equations is essential for modeling and analyzing complex phenomena, such as the motion of objects under the influence of gravity. The equations of motion for an object under the influence of gravity can be represented as a system of linear equations, which can be solved to determine the object’s position, velocity, and acceleration over time.

Economics: In economics, solving systems of linear equations is used to model and analyze complex economic systems, such as the behavior of supply and demand in markets. The equations of supply and demand can be represented as a system of linear equations, which can be solved to determine the equilibrium price and quantity of a good or service.

Engineering: In engineering, solving systems of linear equations is essential for designing and analyzing complex systems, such as electrical circuits and mechanical systems. The equations of circuit analysis and mechanical analysis can be represented as systems of linear equations, which can be solved to determine the behavior of the system under various conditions.

Graphical Methods for Solving Systems of Equations

Graphical methods are used to visualize and solve systems of linear equations by plotting the lines represented by the equations on a coordinate plane. This approach is based on the concept that the intersection point of two lines represents the solution to the system of equations.

When we solve a system graphically, the solution is the intersection point of the lines. If the lines have the same slope, there is either no solution (the lines are parallel and distinct) or infinitely many solutions (the lines coincide). This method is useful for understanding the geometric relationship between the lines and their equations.

Graphical Method for Solving Systems of Equations

| Scenario | Description | Result | Reason |
|---|---|---|---|
| Two lines intersect at a single point. | The lines have different slopes, so they cross exactly once. | One unique solution | The intersection point is the only point that satisfies both equations. |
| Two lines are parallel and never intersect. | The lines have the same slope and different y-intercepts. | No solution | The lines never meet, so no point satisfies both equations. |
| Two lines are identical and coincide. | The lines have the same slope and the same y-intercept. | Infinitely many solutions | Every point on the line satisfies both equations. |
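The three scenarios in the table can be sketched in a few lines of Python. This is a minimal illustration that assumes both lines are given in slope-intercept form y = mx + b (so vertical lines are not covered); the function name `classify_lines` is hypothetical.

```python
from fractions import Fraction

def classify_lines(m1, b1, m2, b2):
    """Classify the system y = m1*x + b1, y = m2*x + b2.

    Returns a (label, point) pair; point is None unless the solution is unique.
    """
    m1, b1, m2, b2 = (Fraction(v) for v in (m1, b1, m2, b2))
    if m1 != m2:
        # Different slopes: the lines cross exactly once.
        x = (b2 - b1) / (m1 - m2)
        return "one unique solution", (x, m1 * x + b1)
    if b1 != b2:
        # Same slope, different intercepts: parallel lines.
        return "no solution", None
    # Same slope and same intercept: the lines coincide.
    return "infinitely many solutions", None

print(classify_lines(2, 1, -1, 4))   # crossing lines
print(classify_lines(2, 1, 2, 5))    # parallel lines
print(classify_lines(2, 1, 2, 1))    # identical lines
```

For the first call, y = 2x + 1 and y = -x + 4 meet at the point (1, 3).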

The main advantage of the graphical method is that it gives a visual representation of the solution, which makes the geometric relationship between the lines and their equations easy to see. It also has limitations. Reading an intersection point accurately off a graph is difficult, especially when the slopes or y-intercepts are very large or very small, or when the solution does not fall on integer coordinates. The method is also impractical for systems with more than two variables.

In practice, the graphical method is typically used alongside algebraic methods such as substitution and elimination, which give exact solutions. Its advantages and limitations should be weighed when choosing a method for a particular system.

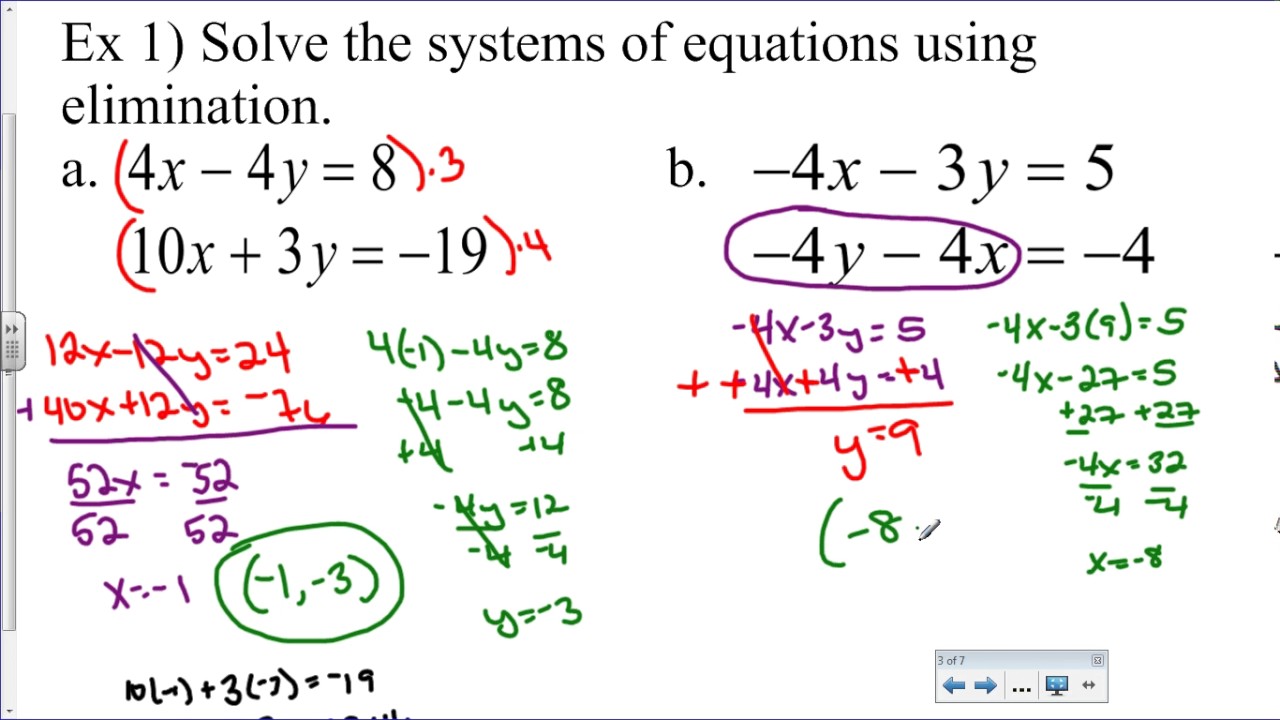

Substitution and Elimination Methods for Solving Systems of Equations

The substitution and elimination methods are two popular algebraic techniques for solving systems of equations with two variables. Substitution isolates one variable in one equation and substitutes the resulting expression into the other equation; elimination adds or subtracts multiples of the equations so that one variable cancels. Both are effective for systems of linear equations and are used in fields such as physics, engineering, and economics.

Substitution Method

The substitution method involves solving one equation for one variable and then substituting that expression into the other equation. The resulting single-variable equation is solved, and its solution is substituted back to find the other variable.

* Example 1:

Equation 1: 2x + 3y = 5

Equation 2: x - 2y = -3

Solve Equation 2 for x: x = -3 + 2y

Substitute x into Equation 1: 2(-3 + 2y) + 3y = 5

Simplify the equation: -6 + 4y + 3y = 5

Combine like terms: 7y = 11

Divide by 7: y = 11/7

Substitute y back into Equation 2 to find x: x = -3 + 2(11/7)

Simplify: x = -3 + 22/7 = 1/7

* Example 2:

Equation 1: 3x - 2y = 7

Equation 2: x + 4y = -2

Solve Equation 2 for x: x = -2 - 4y

Substitute x into Equation 1: 3(-2 - 4y) - 2y = 7

Simplify the equation: -6 - 12y - 2y = 7

Combine like terms: -14y = 13

Divide by -14: y = -13/14

Substitute y back into Equation 2 to find x: x = -2 - 4(-13/14)

Simplify: x = -2 + 52/14 = 12/7
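The steps in both examples follow the same pattern, which can be sketched in Python with exact fractions. This is an illustration rather than a general-purpose solver: the function name `solve_by_substitution` is hypothetical, and it assumes the second equation has a nonzero x coefficient and that the system has exactly one solution.

```python
from fractions import Fraction

def solve_by_substitution(eq1, eq2):
    """Solve a1*x + b1*y = c1 and a2*x + b2*y = c2 by substitution.

    Each equation is a tuple (a, b, c). Assumes a2 != 0 so that the second
    equation can be solved for x, and that the system has a unique solution.
    """
    a1, b1, c1 = (Fraction(v) for v in eq1)
    a2, b2, c2 = (Fraction(v) for v in eq2)
    # Step 1: solve equation 2 for x:  x = (c2 - b2*y) / a2 = p + q*y
    p, q = c2 / a2, -b2 / a2
    # Step 2: substitute into equation 1:  a1*(p + q*y) + b1*y = c1
    y = (c1 - a1 * p) / (a1 * q + b1)
    # Step 3: back-substitute to recover x
    x = p + q * y
    return x, y

print(solve_by_substitution((2, 3, 5), (1, -2, -3)))   # Example 1
print(solve_by_substitution((3, -2, 7), (1, 4, -2)))   # Example 2
```

Example 1 yields x = 1/7, y = 11/7, and Example 2 yields x = 12/7, y = -13/14, matching the hand calculations above.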

Comparison of Substitution and Elimination Methods

The substitution and elimination methods have their own strengths and weaknesses when it comes to solving systems of equations.

| Method | Strengths | Weaknesses |
|---|---|---|
| Substitution | Effective when one variable is isolated easily | Substituted expressions can become complex and algebraically intensive, especially when fractions appear |
| Elimination | Involves simple algebraic operations and is generally easy to apply | May require multiplying one or both equations first so that a variable's coefficients match or are opposites |

When to use the substitution method:

- When one variable is easily isolated in one equation, for example when its coefficient is 1 or -1.

- When one equation is already solved for one of the variables.

When to use the elimination method:

- When the coefficients of a variable are equal or opposite in both equations, or can be made so by multiplying an equation by a constant.

- When adding or subtracting the equations cancels one of the variables directly.
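For comparison with the substitution examples, here is a minimal sketch of the elimination method applied to the same kind of two-variable system. The function name `solve_by_elimination` is hypothetical, and the code assumes the system has exactly one solution.

```python
from fractions import Fraction

def solve_by_elimination(eq1, eq2):
    """Solve a1*x + b1*y = c1 and a2*x + b2*y = c2 by eliminating x.

    Each equation is a tuple (a, b, c). Assumes a unique solution exists.
    """
    a1, b1, c1 = (Fraction(v) for v in eq1)
    a2, b2, c2 = (Fraction(v) for v in eq2)
    # Scale the equations so both x coefficients become a1*a2, then subtract:
    #   a2*(eq1) - a1*(eq2)  ->  (a2*b1 - a1*b2) * y = a2*c1 - a1*c2
    y = (a2 * c1 - a1 * c2) / (a2 * b1 - a1 * b2)
    # Back-substitute into an equation that still contains x.
    if a1 != 0:
        x = (c1 - b1 * y) / a1
    else:
        x = (c2 - b2 * y) / a2
    return x, y

# 2x + 3y = 5 and x - 2y = -3 (Example 1 from the substitution section)
print(solve_by_elimination((2, 3, 5), (1, -2, -3)))
```

The result is x = 1/7, y = 11/7, the same solution that substitution gives for this system.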

Matrix Operations for Solving Systems of Equations

Matrix operations are a crucial part of solving systems of equations using the matrix method. In this section, we will delve into the world of matrices and learn how to perform basic operations such as addition, scalar multiplication, and matrix multiplication. These operations will form the foundation of more complex methods like the Gauss-Jordan elimination method.

Matrix Addition

Matrix addition is a straightforward operation that involves adding corresponding elements of two matrices. Two matrices can only be added together if they have the same dimensions, i.e., the same number of rows and columns.

For example, consider two matrices A and B with dimensions 2×2:

A = [[1, 2], [3, 4]]

B = [[5, 6], [7, 8]]

The sum of matrices A and B, denoted as A + B, is given by:

A + B = [[6, 8], [10, 12]]

Matrix addition is commutative, meaning that the order of the matrices does not affect the result:

A + B = B + A

Scalar Multiplication

Scalar multiplication involves multiplying each element of a matrix by a scalar value.

For example, consider matrix A with dimensions 2×2:

A = [[1, 2], [3, 4]]

If we multiply matrix A by a scalar value of 3, we get:

3A = [[3, 6], [9, 12]]

Scalar multiplication distributes over both scalar addition and matrix addition. For scalars c and d and matrices A and B of the same dimensions, (c + d)A = cA + dA and c(A + B) = cA + cB. For example:

3A + 2A = 5A
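The addition and scalar multiplication examples above can be reproduced with plain nested lists. The helper names `mat_add` and `scalar_mul` are illustrative choices for this sketch.

```python
def mat_add(A, B):
    """Add two matrices of identical dimensions, element by element."""
    if len(A) != len(B) or any(len(ra) != len(rb) for ra, rb in zip(A, B)):
        raise ValueError("matrices must have the same dimensions")
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def scalar_mul(c, A):
    """Multiply every element of matrix A by the scalar c."""
    return [[c * a for a in row] for row in A]

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]

print(mat_add(A, B))       # [[6, 8], [10, 12]]
print(scalar_mul(3, A))    # [[3, 6], [9, 12]]
# 3A + 2A equals 5A
print(mat_add(scalar_mul(3, A), scalar_mul(2, A)) == scalar_mul(5, A))   # True
# 3(A + B) equals 3A + 3B
print(scalar_mul(3, mat_add(A, B)) == mat_add(scalar_mul(3, A), scalar_mul(3, B)))   # True
```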

Matrix Multiplication

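Matrix multiplication combines two matrices into a new matrix. The product AB is defined only when the number of columns of A equals the number of rows of B. The resulting matrix has the same number of rows as the first matrix and the same number of columns as the second matrix, and each entry is the sum of the products of a row of A with the corresponding column of B.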

For example, consider two matrices A and B with dimensions 2×2:

A = [[1, 2], [3, 4]]

B = [[5, 6], [7, 8]]

The product of matrices A and B, denoted as AB, is given by:

AB = [[19, 22], [43, 50]]

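Matrix multiplication is associative, meaning that the way we group the factors when multiplying three or more matrices does not affect the result. It is not commutative, however: in general AB and BA are different, so the left-to-right order of the factors must be preserved.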

(AB)C = A(BC)
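A short sketch of the row-by-column rule, again using nested lists. The function name `mat_mul` and the third matrix C are assumptions introduced only for this illustration.

```python
def mat_mul(A, B):
    """Multiply A (m x n) by B (n x p) using the row-by-column rule."""
    if any(len(row) != len(B) for row in A):
        raise ValueError("columns of A must equal rows of B")
    # zip(*B) iterates over the columns of B.
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
C = [[1, 0], [2, 1]]   # hypothetical third matrix for the associativity check

print(mat_mul(A, B))                                            # [[19, 22], [43, 50]]
print(mat_mul(mat_mul(A, B), C) == mat_mul(A, mat_mul(B, C)))   # True: grouping does not matter
print(mat_mul(A, B) == mat_mul(B, A))                           # False: order of factors matters
```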

Gauss-Jordan Elimination Method

The Gauss-Jordan elimination method is a matrix-based technique for solving systems of linear equations. It involves transforming the augmented matrix into reduced row-echelon form using a series of elementary row operations.

The Gauss-Jordan elimination method works by performing the following operations:

1. Multiply a row by a non-zero scalar to make an entry in a particular column equal to 1.

2. Add a multiple of one row to another row to make an entry in a particular column equal to 0.

3. Swap two rows to move an entry in a particular column to the top of the column.

By performing these operations, we can transform the augmented matrix into reduced row-echelon form, in which each pivot is 1 and is the only non-zero entry in its column. The solutions to the system can then be read directly from the last column.

The Gauss-Jordan elimination method is a powerful tool for solving systems of linear equations, and it is widely used in computer science, engineering, and other fields.
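The three operations listed above can be combined into a compact routine. The following is a minimal sketch, not a production solver: the function name `gauss_jordan` is hypothetical, exact fractions are used to avoid rounding, and the input is assumed to be an augmented matrix whose last column holds the constants.

```python
from fractions import Fraction

def gauss_jordan(aug):
    """Reduce an augmented matrix (list of rows) to reduced row-echelon form."""
    M = [[Fraction(v) for v in row] for row in aug]
    rows, cols = len(M), len(M[0])
    pivot_row = 0
    for col in range(cols - 1):          # the last column holds the constants
        # Find a row at or below pivot_row with a non-zero entry in this column.
        src = next((r for r in range(pivot_row, rows) if M[r][col] != 0), None)
        if src is None:
            continue                     # no pivot in this column
        # Swap the pivot row into position.
        M[pivot_row], M[src] = M[src], M[pivot_row]
        # Scale the pivot row so the pivot equals 1.
        pivot = M[pivot_row][col]
        M[pivot_row] = [v / pivot for v in M[pivot_row]]
        # Add multiples of the pivot row to clear the rest of the column.
        for r in range(rows):
            if r != pivot_row and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [v - factor * p for v, p in zip(M[r], M[pivot_row])]
        pivot_row += 1
        if pivot_row == rows:
            break
    return M

# 2x + 3y = 5 and x - 2y = -3 written as an augmented matrix
result = gauss_jordan([[2, 3, 5], [1, -2, -3]])
print(result)
```

For this system the reduced matrix has rows (1, 0, 1/7) and (0, 1, 11/7), so x = 1/7 and y = 11/7.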

Augmented Matrices and Row Operations for Solving Systems of Equations

Augmented matrices and row operations are powerful tools for solving systems of linear equations. By representing a system of equations as an augmented matrix, we can use row operations to transform the matrix into a simpler form that makes it easy to solve for the variables.

Understanding Augmented Matrices

An augmented matrix is a matrix that combines the coefficients of the variables and the constant terms in a system of equations. It has the form:

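$$
\left[\begin{array}{cccc|c}
a_{11} & a_{12} & \cdots & a_{1n} & b_1 \\
a_{21} & a_{22} & \cdots & a_{2n} & b_2 \\
\vdots & \vdots & \ddots & \vdots & \vdots \\
a_{n1} & a_{n2} & \cdots & a_{nn} & b_n
\end{array}\right]
$$

where $a_{ij}$ represents the coefficient of the variable $x_j$ in the $i$th equation, and $b_i$ is the constant term in the $i$th equation.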
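In code, an augmented matrix is simply the coefficient matrix with the constants appended as an extra column. The helper name `augment` and the sample system below are assumptions made for this sketch.

```python
def augment(A, b):
    """Append the constant vector b as a final column of coefficient matrix A."""
    if len(A) != len(b):
        raise ValueError("A and b must have the same number of rows")
    return [list(row) + [rhs] for row, rhs in zip(A, b)]

# Sample system: 2x + 3y = 5 and x - 2y = -3
A = [[2, 3], [1, -2]]
b = [5, -3]
print(augment(A, b))   # [[2, 3, 5], [1, -2, -3]]
```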

Row Operations

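Row operations are essential for transforming an augmented matrix into a simpler form that makes it easy to solve for the variables. There are three main types of row operations:

- Scaling: multiply a row by a non-zero scalar.

- Replacement: add a multiple of one row to another row.

- Interchange: swap two rows.

RREF

Reduced row-echelon form, or RREF, is the target of row reduction rather than a row operation itself. A matrix is in RREF when every pivot equals 1, each pivot is the only non-zero entry in its column, each pivot lies to the right of the pivot in the row above, and any all-zero rows are at the bottom. Applying the three row operations repeatedly brings an augmented matrix to this form.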

Significance of Row Operations

Row operations are crucial for solving systems of equations because they allow us to transform an augmented matrix into a simpler form that makes it easy to solve for the variables. By using row operations, we can eliminate variables, make coefficients simpler, and identify the values of the variables.

Illustrating Augmented Matrices and Row Operations

Here is a table to illustrate the use of augmented matrices and row operations to solve systems of equations:

| | x1 | x2 | … | xn | b |
|---|---|---|---|---|---|
| 1 | a11 | a12 | … | a1n | b1 |
| 2 | a21 | a22 | … | a2n | b2 |
| … | … | … | … | … | … |
| n | an1 | an2 | … | ann | bn |

| | x1 | x2 | … | xn | b |
|---|---|---|---|---|---|
| 1 | 1 | 0 | … | 0 | b1′ |
| 2 | 0 | 1 | … | 0 | b2′ |
| … | … | … | … | … | … |
| n | 0 | 0 | … | 1 | bn′ |

In each table, the first column gives the row number, the middle columns contain the coefficients of the variables x1 through xn, and the last column contains the constant terms. By using row operations, we can transform the original augmented matrix (first table) into reduced row-echelon form (second table). When the system has a unique solution, the coefficient block becomes the identity matrix, with 1s on the diagonal and 0s elsewhere, and the last column holds the solution values, so that x1 = b1′, x2 = b2′, and so on.

A key aspect of solving systems of equations with row operations is performing the calculations in sequence: each operation acts on the matrix produced by the previous step, so an arithmetic error carries through to every later step.

Table 1. Original Augmented Matrix

| | x1 | x2 | x3 | b |
|---|---|---|---|---|
| 1 | 2 | 1 | 3 | 7 |
| 2 | 1 | 2 | 5 | 8 |
| 3 | 3 | 1 | 2 | 10 |

Table 2. Augmented Matrix after applying row operations (exchanging rows 1 and 2)

| | x1 | x2 | x3 | b |
|---|---|---|---|---|
| 1 | 1 | 2 | 5 | 8 |
| 2 | 2 | 1 | 3 | 7 |
| 3 | 3 | 1 | 2 | 10 |

| Step | Row Operation Applied | Effect on Matrix |
|---|---|---|
| 1 | Exchange rows 1 and 2 (Table 1 becomes Table 2) | Row 1 now has a leading 1 |
| 2 | Multiply row 1 by -2 and add to row 2 | Row 2 becomes 0, -3, -7, -9 |
| 3 | Multiply row 1 by -3 and add to row 3 | Row 3 becomes 0, -5, -13, -14 |
| 4 | Multiply row 2 by -5/3 and add to row 3 | Row 3 becomes 0, 0, -4/3, 1 |
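The row operations on Table 1 can be replayed in Python to check the arithmetic. This sketch performs the row exchange and the two eliminations that use row 1, then clears the remaining x2 entry in row 3 with a multiplier of -5/3, a value chosen here so that the entry becomes zero. The helper names `swap` and `add_multiple` are hypothetical.

```python
from fractions import Fraction

def swap(M, i, j):
    """Exchange rows i and j (0-based) in place."""
    M[i], M[j] = M[j], M[i]

def add_multiple(M, src, k, dst):
    """Replace row dst with row dst + k * row src (0-based), in place."""
    M[dst] = [d + k * s for d, s in zip(M[dst], M[src])]

# Table 1, stored as exact fractions
M = [[Fraction(v) for v in row] for row in
     [[2, 1, 3, 7], [1, 2, 5, 8], [3, 1, 2, 10]]]

swap(M, 0, 1)                            # exchange rows 1 and 2 (Table 2)
add_multiple(M, 0, -2, 1)                # row 2 <- row 2 - 2 * row 1
add_multiple(M, 0, -3, 2)                # row 3 <- row 3 - 3 * row 1
add_multiple(M, 1, Fraction(-5, 3), 2)   # row 3 <- row 3 - (5/3) * row 2

# The matrix is now upper triangular; back-substitute from the last row up.
x3 = M[2][3] / M[2][2]
x2 = (M[1][3] - M[1][2] * x3) / M[1][1]
x1 = (M[0][3] - M[0][1] * x2 - M[0][2] * x3) / M[0][0]
print(x1, x2, x3)   # 9/4 19/4 -3/4
```

Substituting x1 = 9/4, x2 = 19/4, x3 = -3/4 back into the three original equations gives 7, 8, and 10, which confirms the result.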

Conclusion

The ability to solve systems of equations has numerous applications in mathematics, science, and engineering. With this guide, readers will gain a comprehensive understanding of various solution methods, including graphical, substitution, elimination, and matrix operations. By grasping the concepts and techniques presented in this guide, readers will be well-equipped to tackle complex systems and expand their problem-solving skills.

FAQ Guide

What is the basic concept of solving systems of equations?

The basic concept of solving systems of equations involves finding the values of variables that satisfy a set of linear equations. This is achieved by applying various solution methods, including graphical, substitution, elimination, and matrix operations.

How do I choose the right solution method for a system of equations?

The choice of solution method depends on the specific characteristics of the system, such as the number of variables, the type of equations, and the desired outcome. For example, graphical methods are suitable for systems with two variables, while matrix operations are more efficient for larger systems.

What are the advantages of using computer-aided solving for systems of equations?

Computer-aided solving offers several advantages, including the ability to tackle complex systems, increased accuracy, and efficient computation. Additionally, software and programming languages can be used to implement solution methods and provide graphical representations of the solutions.