This article explains how to work out eigenvectors. Eigenvectors are central to understanding linear transformations and their real-world applications, which makes them an essential tool for engineers, data scientists, and mathematicians alike. Below we look at what eigenvectors mean geometrically, how they relate to eigenspaces, and how they are calculated and used.

Eigenvectors are used in applications ranging from image compression to financial modeling. In computer graphics, the eigenvector of a 3D rotation matrix with eigenvalue 1 gives the axis of rotation. In finance, the eigenvectors of the covariance matrix of asset returns identify the dominant sources of risk. Knowing how to work out eigenvectors is therefore essential for anyone applying linear algebra to real-world problems.

Visualizing Eigenvectors Through Geometric Interpretation

Eigenvectors are a fundamental concept in linear algebra, and their geometric meaning is the key to understanding matrix transformations. An eigenvector of a matrix A is a nonzero vector v that the transformation does not turn off its own line: it is only stretched, shrunk, or reversed, so that Av = λv, where the scalar λ is the corresponding eigenvalue. Geometric illustrations of this idea help make the abstract algebra more intuitive and accessible.

Scaling and Direction of Transformation

When a matrix transformation is applied to a vector, it can alter both the scale and direction of the vector. Eigenvectors represent the directions in which the transformation acts most simply, while the corresponding eigenvalues determine the scale of the transformation along these directions.
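To see the defining relation Av = λv numerically, here is a minimal sketch assuming NumPy is available; the matrix is an arbitrary example, not one taken from the text. `np.linalg.eig` returns the eigenvalues together with a matrix whose columns are the corresponding unit-length eigenvectors.

```python
import numpy as np

# Arbitrary example matrix (not from the text)
A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# np.linalg.eig returns the eigenvalues and a matrix whose COLUMNS are eigenvectors
eigenvalues, eigenvectors = np.linalg.eig(A)

for lam, v in zip(eigenvalues, eigenvectors.T):
    # Applying A to an eigenvector only rescales it by its eigenvalue
    print(f"lambda = {lam:.1f}, v = {v}, A @ v = {A @ v}, lambda * v = {lam * v}")
    assert np.allclose(A @ v, lam * v)
```

For this matrix the eigenvalues are 3 and 1: A stretches the direction [1, 1] by a factor of 3 and leaves the direction [1, −1] unchanged.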

- An eigenvector spans a line through the origin that the transformation maps onto itself; along that line, the transformation acts as simple multiplication by the eigenvalue. For symmetric matrices, these lines are the principal axes of the transformation.

- The magnitude of the eigenvalue gives the scale factor along the eigenvector direction, and a negative eigenvalue also reverses the direction.

- For rotations, a 2D rotation by any angle other than 0° or 180° has no real eigenvectors, because every direction is turned. A 3D rotation has its axis as an eigenvector with eigenvalue 1, since vectors on the axis are left unchanged.

- For projections, vectors in the subspace being projected onto are eigenvectors with eigenvalue 1, and vectors along the direction of projection (which are sent to zero) are eigenvectors with eigenvalue 0.

- For scaling transformations, the eigenvectors are the axes along which the scaling is performed, and the eigenvalues are the scale factors.
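The rotation, projection, and scaling cases can be checked numerically. The sketch below assumes NumPy, and the angle, projection, and scale factors are arbitrary illustrative choices: a 2D rotation by 45° produces a complex pair of eigenvalues (so no real eigenvector), a 3D rotation about the z-axis leaves its axis fixed, a projection onto the x-axis has eigenvalues 1 and 0, and an axis-aligned scaling has its scale factors as eigenvalues.

```python
import numpy as np

theta = np.pi / 4  # 45-degree rotation, an arbitrary choice

# 2D rotation: no real direction stays on its own line
R2 = np.array([[np.cos(theta), -np.sin(theta)],
               [np.sin(theta),  np.cos(theta)]])
rot2_vals = np.linalg.eigvals(R2)  # complex pair cos(theta) +/- i sin(theta)

# 3D rotation about the z-axis: the axis is an eigenvector with eigenvalue 1
R3 = np.array([[np.cos(theta), -np.sin(theta), 0.0],
               [np.sin(theta),  np.cos(theta), 0.0],
               [0.0,            0.0,           1.0]])
axis = np.array([0.0, 0.0, 1.0])

# Orthogonal projection onto the x-axis: eigenvalue 1 on the x-axis, 0 on the y-axis
P = np.array([[1.0, 0.0],
              [0.0, 0.0]])
proj_vals = np.linalg.eigvals(P)

# Axis-aligned scaling: the eigenvalues are the scale factors
S = np.diag([3.0, 0.5])
scale_vals = np.linalg.eigvals(S)

print("2D rotation eigenvalues:", rot2_vals)
print("3D rotation keeps its axis fixed:", np.allclose(R3 @ axis, axis))
print("projection eigenvalues:", proj_vals)
print("scaling eigenvalues:", scale_vals)
```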

This geometric interpretation of eigenvectors offers a powerful tool for understanding matrix transformations and their effects on vectors. By analyzing the direction and scale of a transformation, we can gain valuable insights into the behavior of complex systems and make more informed decisions in various fields such as physics, engineering, computer science, and more.

Orthogonal Vectors and the Geometry of Eigenvectors

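Eigenvectors belonging to distinct eigenvalues are always linearly independent, so an n × n matrix with n distinct eigenvalues has n independent eigenvector directions, a complete basis for the vector space. Orthogonality is a stronger property: it is guaranteed when the matrix is symmetric (A = Aᵀ). In that case eigenvectors for distinct eigenvalues are orthogonal, and a full orthogonal basis of eigenvectors exists even when eigenvalues are repeated. Invertibility is a separate matter: a matrix is invertible exactly when none of its eigenvalues is zero.

When an eigenvalue is repeated, the Gram-Schmidt process can be applied within its eigenspace to obtain mutually orthogonal unit eigenvectors; for a symmetric matrix, doing this in every eigenspace yields an orthonormal basis of eigenvectors. Applying Gram-Schmidt across different eigenspaces of a non-symmetric matrix, by contrast, generally produces vectors that are no longer eigenvectors.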
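A small comparison, assuming NumPy and two arbitrary example matrices: `np.linalg.eigh`, which is meant for symmetric matrices, returns orthonormal eigenvectors directly, so no separate Gram-Schmidt step is needed there. `np.linalg.eig` on a non-symmetric matrix shows that eigenvectors for distinct eigenvalues can be independent without being orthogonal.

```python
import numpy as np

# Symmetric example: eigh returns orthonormal eigenvectors as the columns of Q
S = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
s_vals, Q = np.linalg.eigh(S)
print("Q^T Q is the identity:", np.allclose(Q.T @ Q, np.eye(3)))

# Non-symmetric example: eigenvalues 2 and 3, eigenvectors independent but not orthogonal
N = np.array([[2.0, 1.0],
              [0.0, 3.0]])
n_vals, V = np.linalg.eig(N)
cosine = abs(V[:, 0] @ V[:, 1])  # columns have unit length, so this is |cos(angle)|
print("|cos(angle)| between the eigenvectors of N:", cosine)
```

The eigenvectors of N point along [1, 0] and [1, 1], so the angle between them is 45° rather than 90°.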

Orthogonal eigenvectors are especially convenient: they let us split any vector into independent components, each of which the transformation simply scales by its own eigenvalue. This is one reason symmetric matrices, such as covariance matrices, are so well suited to eigenvector analysis.

Geometric Significance of Eigenvectors in Understanding Matrix Transformations

Viewing a matrix through its eigenvectors offers an intuitive and accessible way to grasp linear transformations, because it reduces a transformation to a handful of directions and the scale factors along them.

- Eigenvectors provide a way to simplify complex transformations into a set of independent directions.

- They reveal how a transformation scales each direction; a rotational component shows up instead as a pair of complex eigenvalues with no real eigenvectors.

- They offer a way to analyze the behavior of complex systems by studying the directions and scales of the transformations.

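- Placed as the columns of a matrix P, they form a change-of-basis matrix in which the transformation becomes diagonal: P⁻¹AP = D, where D holds the eigenvalues. This requires n linearly independent eigenvectors for an n × n matrix.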
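A quick check of the change-of-basis idea, assuming NumPy and an arbitrary diagonalizable example matrix: with the eigenvectors as the columns of P, the product P⁻¹AP comes out diagonal, with the eigenvalues on the diagonal.

```python
import numpy as np

# Arbitrary diagonalizable example (eigenvalues 5 and 2)
A = np.array([[4.0, 1.0],
              [2.0, 3.0]])

eigenvalues, P = np.linalg.eig(A)  # columns of P are eigenvectors
D = np.linalg.inv(P) @ A @ P       # A expressed in the eigenvector basis

print(np.round(D, 10))  # off-diagonal entries vanish up to rounding
```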

In short, once the invariant directions of a transformation and their scale factors are known, its effect on any vector follows, which is what makes the geometric view of eigenvectors so useful in practice.

Eigenvectors as Eigenspaces and Their Importance

Eigenvectors play a crucial role in linear algebra, and their connection to eigenspaces is vital to understanding the behavior of linear transformations. An eigenspace is the subspace consisting of all eigenvectors associated with a specific eigenvalue of a matrix, together with the zero vector. This concept is central in many applications, including physics, engineering, and computer science, where understanding the eigenspaces of a matrix can provide valuable insights into the properties of the system being modeled.

Relationship Between Eigenvectors and Eigenspaces

A key takeaway is that the eigenvectors associated with a particular eigenvalue, together with the zero vector, form a subspace: the eigenspace. It is the span of those eigenvectors, so it is closed under linear combinations. The eigenspace describes the directions that the transformation simply scales by that eigenvalue. Analyzing eigenspaces, together with the eigenvalues themselves, gives insight into properties of the matrix such as its stability, invertibility, and convergence behavior.

$$E_\lambda = \{\, v \mid Av = \lambda v \,\}$$

The relationship between eigenvectors and eigenspaces can be understood by realizing that all eigenvectors of a matrix A belonging to a specific eigenvalue λ will span an eigenspace, where Av = λv for all v in the eigenspace.
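Because the eigenspace for λ is exactly the set of solutions of (A − λI)v = 0, working out an eigenspace amounts to finding the null space of A − λI. The helper `eigenspace_basis` below is hypothetical (not a library function) and assumes NumPy; it reads a basis of that null space off the singular value decomposition, and the diagonal matrix is an arbitrary example with a two-dimensional eigenspace. If SciPy is available, `scipy.linalg.null_space` does the same job.

```python
import numpy as np

def eigenspace_basis(A, lam, tol=1e-10):
    """Hypothetical helper: orthonormal basis (as columns) of the null space of A - lam*I.

    Right singular vectors whose singular values are (near) zero span the null space.
    """
    n = A.shape[0]
    M = A - lam * np.eye(n)
    _, s, Vh = np.linalg.svd(M)
    # Singular values come sorted in descending order; count those above tol
    rank = int(np.sum(s > tol))
    return Vh[rank:].conj().T

A = np.array([[2.0, 0.0, 0.0],
              [0.0, 2.0, 0.0],
              [0.0, 0.0, 5.0]])

E2 = eigenspace_basis(A, 2.0)  # eigenvalue 2 has a 2-dimensional eigenspace
E5 = eigenspace_basis(A, 5.0)  # eigenvalue 5 has a 1-dimensional eigenspace
print("dim E_2 =", E2.shape[1], " dim E_5 =", E5.shape[1])

# Any linear combination of eigenvectors in E_2 is again in E_2
v = 3.0 * E2[:, 0] - 1.5 * E2[:, 1]
print("A v == 2 v:", np.allclose(A @ v, 2.0 * v))
```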

Importance of Eigenvector Bases

Eigenvector bases are essential in many applications because they provide coordinates in which the transformation acts independently on each coordinate. If an n × n matrix A has n linearly independent eigenvectors, we can use them as basis vectors to diagonalize it: A = PDP⁻¹, where the columns of P are the eigenvectors and D is the diagonal matrix of eigenvalues. Diagonalization simplifies many computations, including solving systems of linear equations, computing powers and the inverse of a matrix, and analyzing the stability of a system.
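A minimal sketch of why diagonalization simplifies computation, assuming NumPy and an arbitrary symmetric example matrix with eigenvalues 3 and −1: once A = PDP⁻¹ is known, a matrix power or the inverse only requires working on the diagonal entries of D. The results are compared against NumPy's own `matrix_power` and `inv`.

```python
import numpy as np

# Arbitrary example with eigenvalues 3 and -1 (none zero, so A is invertible)
A = np.array([[1.0, 2.0],
              [2.0, 1.0]])

eigenvalues, P = np.linalg.eig(A)
P_inv = np.linalg.inv(P)

# A = P D P^{-1}, so A^k = P D^k P^{-1}: only the diagonal entries are raised to k
k = 5
A_pow = P @ np.diag(eigenvalues ** k) @ P_inv

# Likewise A^{-1} = P D^{-1} P^{-1}, valid because no eigenvalue is zero
A_inv = P @ np.diag(1.0 / eigenvalues) @ P_inv

print(np.allclose(A_pow, np.linalg.matrix_power(A, k)))
print(np.allclose(A_inv, np.linalg.inv(A)))
```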

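- Orthogonality and uniqueness: Eigenvectors are never unique, since any nonzero scalar multiple of an eigenvector is again an eigenvector. Eigenvectors within one eigenspace need not be orthogonal, but an orthogonal basis for the eigenspace can always be chosen, for example with the Gram-Schmidt process, which never leaves the eigenspace.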

- Scalar multiples and linear combinations: An eigenvector v belonging to an eigenspace can be scaled by any scalar c, or combined linearly with other eigenvectors in the same eigenspace; the result stays in the eigenspace and is again an eigenvector as long as it is nonzero.

These properties let us describe an eigenspace by a basis: a linearly independent set of eigenvectors for the eigenvalue whose span is the entire eigenspace. The number of vectors in such a basis is the dimension of the eigenspace, also called the geometric multiplicity of the eigenvalue. Eigenvector bases are a powerful tool for analyzing and understanding the properties of linear transformations.

Eigenvectors as Tools for Solving Systems of Equations

Eigenvectors are not only useful for understanding matrix properties but also have practical applications in solving systems of linear equations. By using eigenvectors, we can simplify the process of finding solutions to systems of equations, making it a valuable tool in various fields, such as physics, engineering, and computer science.

Solving Systems of Linear Equations

Solving a system of linear equations means finding values of the variables that satisfy several linear equations at once. Eigenvectors can simplify this by transforming the system into a form in which each equation involves only one unknown. The transformation expresses the coefficient matrix as a product built from its eigenvalues and eigenvectors, A = PDP⁻¹, where the columns of P are eigenvectors of A and D is the diagonal matrix of the corresponding eigenvalues. This requires A to be diagonalizable.

Substituting this factorization into Ax = b and setting y = P⁻¹x and c = P⁻¹b turns the system into Dy = c, that is, λᵢ yᵢ = cᵢ for each i, where λᵢ is the i-th eigenvalue.

Because D is diagonal, each of these equations can be solved on its own: yᵢ = cᵢ / λᵢ whenever λᵢ ≠ 0. If some λᵢ = 0, the matrix is singular: a solution exists only if the matching cᵢ is also 0, and in that case yᵢ can take any value, giving infinitely many solutions. The solution of the original system is then x = Py.

The process can be outlined as follows:

- Find the eigenvalues and eigenvectors of the coefficient matrix A, and form P (eigenvectors as columns) and D (eigenvalues on the diagonal).

- Transform the system into the form Dy = c by computing c = P⁻¹b.

- Solve the transformed system entry by entry, yᵢ = cᵢ / λᵢ, and recover x = Py.

- Verify that the solution satisfies the original system of equations.
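The eigenvector method can be sketched in a few lines. This is an illustration under stated assumptions, not a recommended solver: NumPy is assumed, `solve_via_eigen` is a hypothetical helper, and A must be diagonalizable with no zero eigenvalue. It writes A = PDP⁻¹, so Ax = b becomes Dy = c with y = P⁻¹x and c = P⁻¹b, solves each diagonal equation separately, and maps back with x = Py.

```python
import numpy as np

def solve_via_eigen(A, b):
    """Hypothetical helper: solve A x = b through A = P D P^{-1}.

    Assumes A is diagonalizable and invertible (no zero eigenvalues).
    """
    eigenvalues, P = np.linalg.eig(A)        # eigenpairs of A
    if np.any(np.isclose(eigenvalues, 0.0)):
        raise ValueError("A has a zero eigenvalue; no unique solution")
    c = np.linalg.solve(P, b)                # c = P^{-1} b
    y = c / eigenvalues                      # y_i = c_i / lambda_i
    return P @ y                             # x = P y

A = np.array([[4.0, 1.0],
              [2.0, 3.0]])
b = np.array([1.0, 2.0])
x = solve_via_eigen(A, b)
print(x, np.allclose(A @ x, b))              # verify against the original system
```

For a single system, an LU-based solver such as `np.linalg.solve(A, b)` is the usual choice; the sketch only mirrors the eigenvector steps.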

Example: Solving a System of Linear Equations

Consider the system of linear equations:

2x + 3y = 7

4x + 6y = 14

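The coefficient matrix A and the right-hand side b are:

$$A = \begin{pmatrix} 2 & 3 \\ 4 & 6 \end{pmatrix}, \qquad b = \begin{pmatrix} 7 \\ 14 \end{pmatrix}$$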

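To solve this system using eigenvectors, we first find the eigenvalues and eigenvectors of matrix A. The eigenvalues are the roots of the characteristic equation:

$$\det(A - \lambda I) = (2 - \lambda)(6 - \lambda) - 3 \cdot 4 = \lambda^2 - 8\lambda = \lambda(\lambda - 8) = 0$$

so λ₁ = 8 and λ₂ = 0. Writing an eigenvector as v = [p, q]: for λ₁ = 8, the system (A − 8I)v = 0 reduces to −6p + 3q = 0, i.e. q = 2p, giving v₁ = [1, 2]. For λ₂ = 0, Av = 0 reduces to 2p + 3q = 0, giving v₂ = [3, −2].

Because one eigenvalue is zero, A is singular (the second equation is simply twice the first), so we should not expect a unique solution. With P = [v₁ v₂], the right-hand side is b = [7, 14] = 7v₁, so c = P⁻¹b = [7, 0]. The transformed system Dy = c reads 8y₁ = 7 and 0 · y₂ = 0, so y₁ = 7/8 while y₂ = t is free. The solutions are therefore

[x, y] = (7/8)v₁ + t v₂ = [7/8 + 3t, 7/4 − 2t] for any real number t.

We can verify this by plugging it back into the original equations: 2(7/8 + 3t) + 3(7/4 − 2t) = 7/4 + 21/4 = 7, and since the second equation is twice the first, it holds as well.

Thus the system has infinitely many solutions; choosing t = 3/8 gives the particular solution x = 2 and y = 1.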
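A short NumPy check of this example (NumPy assumed). A has eigenvalues 8 and 0 with eigenvectors [1, 2] and [3, −2]; because one eigenvalue is zero, the system has infinitely many solutions of the form (7/8)[1, 2] + t[3, −2], and t = 3/8 gives x = 2, y = 1.

```python
import numpy as np

A = np.array([[2.0, 3.0],
              [4.0, 6.0]])
b = np.array([7.0, 14.0])

# Numerical eigenvalues, for comparison with the hand calculation (order not guaranteed)
eigenvalues = np.linalg.eigvals(A)
print("eigenvalues:", eigenvalues)

# Eigenvectors worked out by hand
v1 = np.array([1.0, 2.0])   # eigenvalue 8
v2 = np.array([3.0, -2.0])  # eigenvalue 0
assert np.allclose(A @ v1, 8.0 * v1)
assert np.allclose(A @ v2, 0.0 * v2)

# c = P^{-1} b with P = [v1 v2]; its second entry is 0, so solutions exist
P = np.column_stack([v1, v2])
c = np.linalg.solve(P, b)
print("c =", c)

# General solution (c[0] / 8) v1 + t v2 for a few values of t
for t in (0.0, 3.0 / 8.0, -1.0):
    sol = (c[0] / 8.0) * v1 + t * v2
    print(f"t = {t:.3f}: [x, y] = {sol}, satisfies system: {np.allclose(A @ sol, b)}")
```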

Conclusion: How to Work Out Eigenvectors

In conclusion, eigenvectors are a fundamental concept in linear algebra, with far-reaching applications in various fields. By understanding how to work out eigenvectors, mathematicians, engineers, and data scientists can unlock new possibilities for modeling and solving complex problems. We hope that this article has provided a comprehensive introduction to eigenvectors and has inspired readers to explore this fascinating topic further.

Clarifying Questions

What is the main difference between left and right eigenvectors?

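A right eigenvector of A is a column vector v with Av = λv; this is the usual meaning of "eigenvector". A left eigenvector is a vector w with wᵀA = λwᵀ, which is equivalent to Aᵀw = λw, so the left eigenvectors of A are the right eigenvectors of its transpose. Both kinds share the same eigenvalues, and for a symmetric matrix they coincide. When diagonalizing A = PDP⁻¹, the right eigenvectors form the columns of P, while the rows of P⁻¹ are left eigenvectors. Left eigenvectors appear, for example, in Markov chains, where the stationary distribution is the left eigenvector of the transition matrix with eigenvalue 1.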
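A small numerical illustration of left versus right eigenvectors, assuming NumPy; the two-state transition matrix is made up for the example. NumPy's `eig` computes right eigenvectors, so the left eigenvectors are obtained as the right eigenvectors of the transpose. The left eigenvector for eigenvalue 1, rescaled to sum to 1, is the chain's stationary distribution.

```python
import numpy as np

# Row-stochastic transition matrix of a two-state Markov chain (illustrative values)
T = np.array([[0.9, 0.1],
              [0.5, 0.5]])

# Right eigenvectors: T v = lambda v
right_vals, right_vecs = np.linalg.eig(T)

# Left eigenvectors: w^T T = lambda w^T, i.e. right eigenvectors of T^T
left_vals, left_vecs = np.linalg.eig(T.T)

# Left and right eigenvalues agree
print(np.sort(right_vals), np.sort(left_vals))

# Left eigenvector for eigenvalue 1, scaled to sum to 1: the stationary distribution
i = np.argmin(np.abs(left_vals - 1.0))
pi = left_vecs[:, i].real
pi = pi / pi.sum()
print("stationary distribution:", pi)
```

For this matrix the eigenvalues are 1 and 0.4, and the stationary distribution works out to [5/6, 1/6].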

How do eigenvectors relate to eigenvalues?

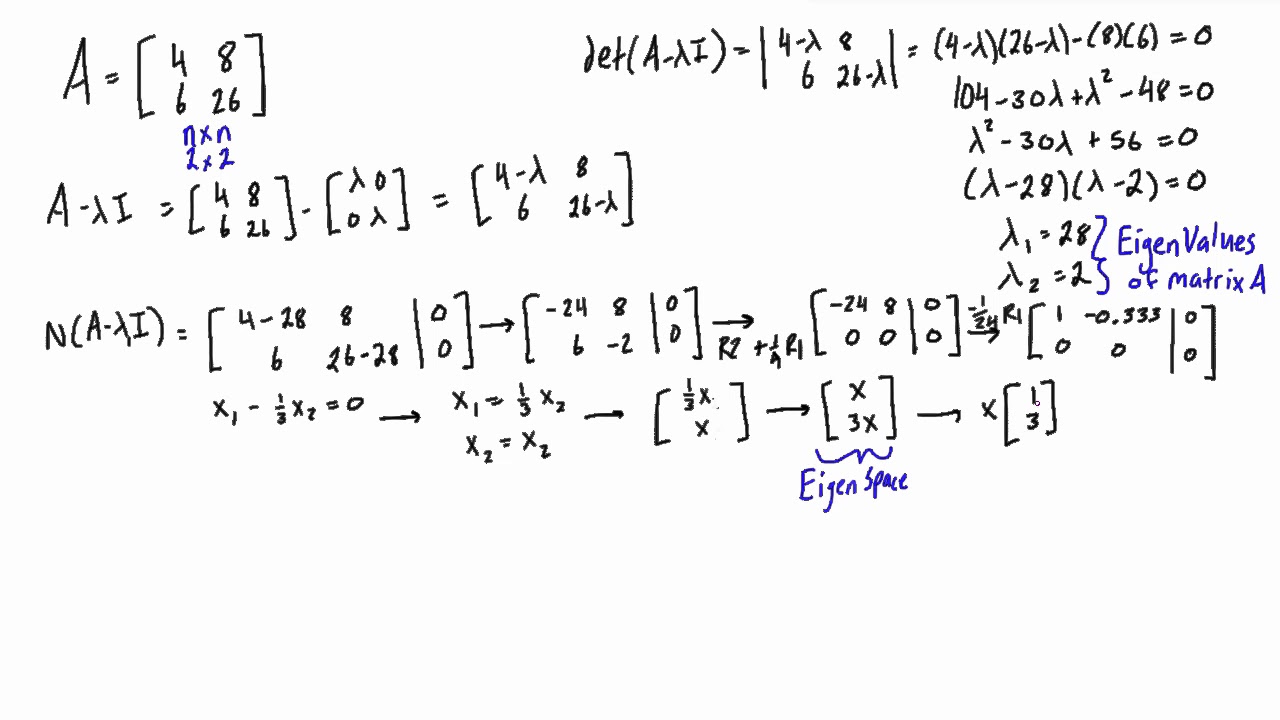

Eigenvectors and eigenvalues come in pairs: every eigenvalue λ has at least one eigenvector (in fact a whole eigenspace of them), and every eigenvector has exactly one eigenvalue. The eigenvalues are found by solving the characteristic equation det(A − λI) = 0, and the eigenvectors for each eigenvalue are then the nonzero solutions of the homogeneous system (A − λI)v = 0.
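For a 2 × 2 matrix, the characteristic equation is the quadratic λ² − tr(A)λ + det(A) = 0, which makes the by-hand recipe easy to code. The helper `eig_2x2` below is hypothetical and assumes NumPy. After solving the quadratic, it reads an eigenvector off a nonzero row of A − λI: that matrix is singular with rank at most 1, so any vector perpendicular to its nonzero row solves (A − λI)v = 0. It is a teaching sketch that handles only real eigenvalues; for real work, `np.linalg.eig` is the appropriate tool.

```python
import numpy as np

def eig_2x2(A):
    """Hypothetical helper: eigenpairs of a real 2x2 matrix via its characteristic equation.

    Solves lambda^2 - tr(A) lambda + det(A) = 0, then solves (A - lambda I) v = 0.
    Only real eigenvalues are handled. For A = lambda * I the same vector is
    returned twice, although every nonzero vector is then an eigenvector.
    """
    (a, b), (c, d) = A
    tr = a + d
    det = a * d - b * c
    disc = tr * tr - 4.0 * det
    if disc < 0:
        raise ValueError("complex eigenvalues are not handled in this sketch")
    root = np.sqrt(disc)
    pairs = []
    for lam in ((tr + root) / 2.0, (tr - root) / 2.0):
        M = np.array([[a - lam, b], [c, d - lam]])
        # M has rank <= 1: take its larger row (p, q); v = (-q, p) is perpendicular to it
        p, q = max(M, key=lambda row: np.hypot(row[0], row[1]))
        if np.hypot(p, q) < 1e-12:
            v = np.array([1.0, 0.0])  # M is zero: any nonzero vector works
        else:
            v = np.array([-q, p]) / np.hypot(p, q)
        pairs.append((lam, v))
    return pairs

A = np.array([[2.0, 3.0],
              [4.0, 6.0]])
for lam, v in eig_2x2(A):
    print(f"lambda = {lam:g}, v = {v}, A @ v == lambda * v: {np.allclose(A @ v, lam * v)}")
```

For the matrix from the example above, this returns λ = 8 with v proportional to [1, 2] and λ = 0 with v proportional to [3, −2].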

What is the significance of eigenvectors in image compression?

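Eigenvectors can be used for image compression because they provide a way to represent images in a lower-dimensional space. In principal component analysis (PCA), the image data is summarized by its covariance matrix, and only the eigenvectors with the largest eigenvalues (the directions that capture the most variation) are kept. Storing each row or patch of the image as a few coefficients along these eigenvectors, instead of as raw pixel values, reduces the amount of data and therefore the file size.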
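A toy version of eigenvector-based (PCA) compression, assuming NumPy. The 64 × 64 "image" is synthetic data built to be nearly low-rank, not a real photograph, and the choice of k = 5 components is arbitrary. Each row is treated as a sample, the top-k eigenvectors of the covariance matrix are kept, and each row is stored as k coefficients instead of 64 pixel values.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic 64x64 "image", approximately rank 5 plus a little noise (illustrative data only)
image = rng.normal(size=(64, 5)) @ rng.normal(size=(5, 64)) + 0.01 * rng.normal(size=(64, 64))

# Treat each row as a sample: center the columns and form the covariance matrix
mean = image.mean(axis=0)
X = image - mean
cov = X.T @ X / (X.shape[0] - 1)

# eigh returns eigenvalues in ascending order; keep the k eigenvectors with the largest ones
k = 5
vals, vecs = np.linalg.eigh(cov)
components = vecs[:, ::-1][:, :k]        # 64 x k

# Compress: k coefficients per row; then reconstruct from them
codes = X @ components                   # 64 x k
reconstructed = codes @ components.T + mean

rel_error = np.linalg.norm(image - reconstructed) / np.linalg.norm(image)
print(f"kept {k} of {cov.shape[0]} directions, relative error {rel_error:.4f}")
```

On real images the error depends on how much of the variation the leading eigenvectors capture; widely used formats such as JPEG rely on a fixed transform (the discrete cosine transform) rather than data-dependent eigenvectors.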